
Mastering Multimodal AI: Advanced Video Understanding with Twelve Labs + Databricks Mosaic AI

James Le

This article guides developers through integrating Twelve Labs' Embed API with Databricks Mosaic AI Vector Search to create advanced video understanding applications, including similarity search and recommendation systems, while addressing performance optimization, scaling, and monitoring considerations.



Aug 30, 2024

14 Min


Short Summary

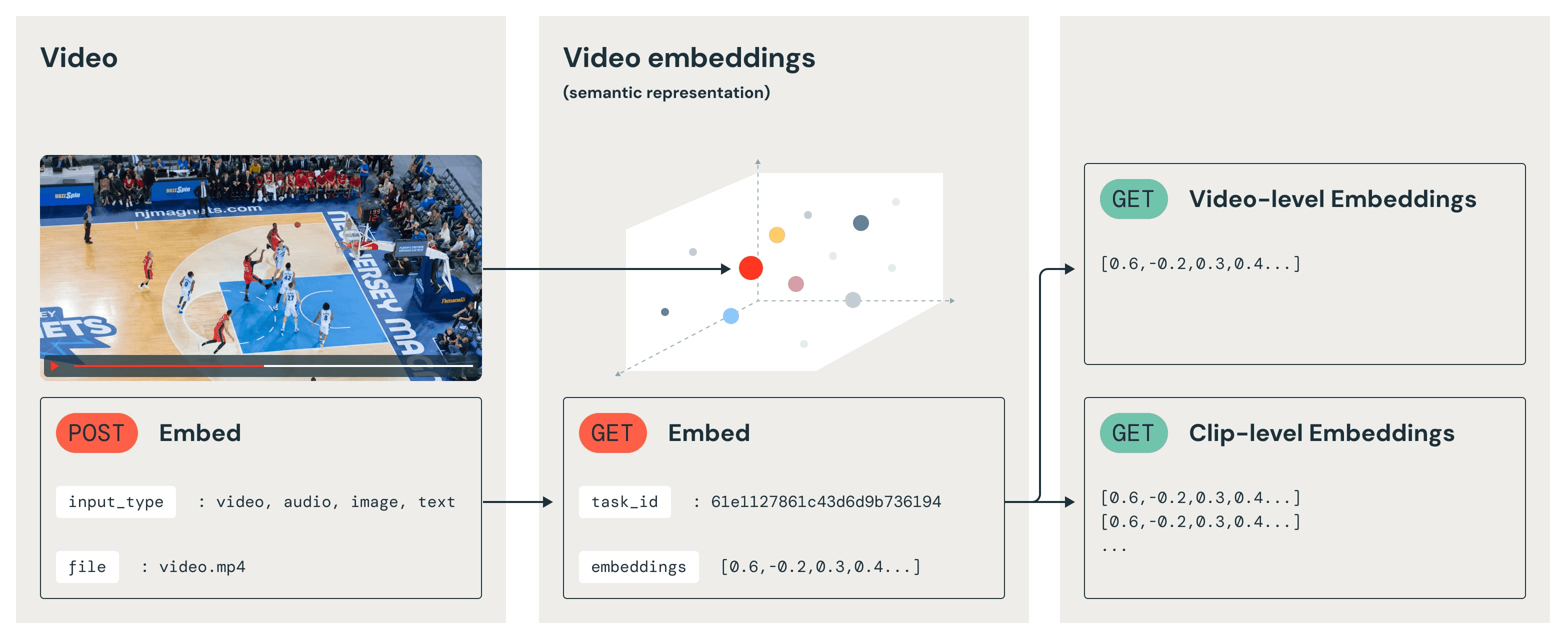

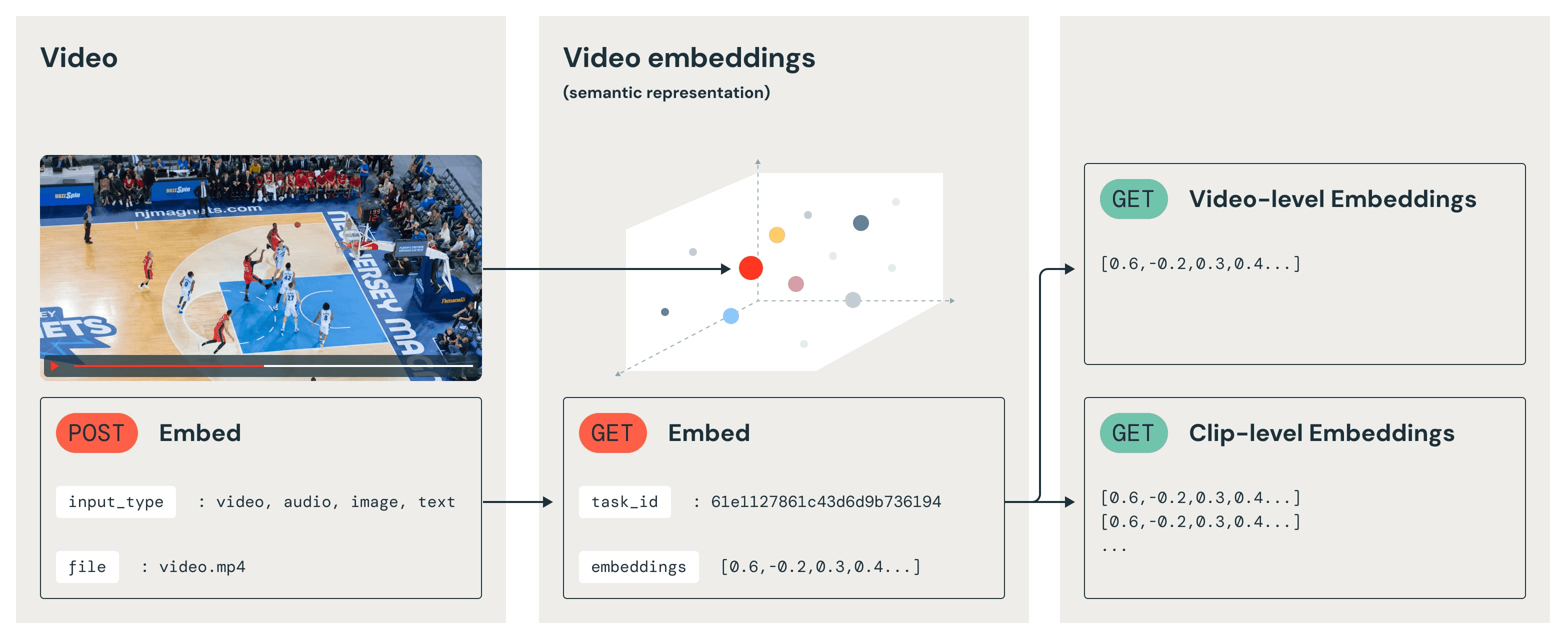

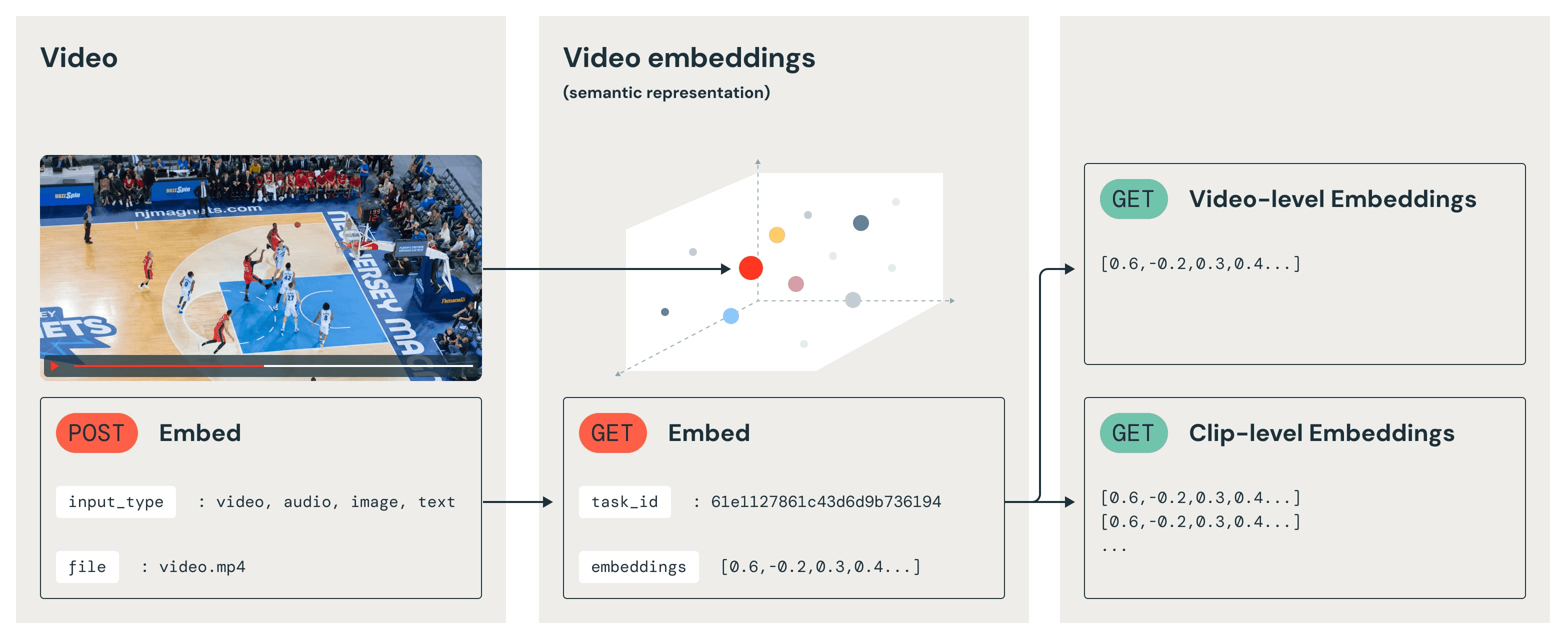

Twelve Labs Embed API enables developers to get multimodal embeddings that power advanced video understanding use cases, from semantic video search and data curation to content recommendation and video RAG systems.

With Twelve Labs, contextual vector representations can be generated that capture the relationship between visual expressions, body language, spoken words, and overall context within videos. Databricks Mosaic AI Vector Search provides a robust, scalable infrastructure for indexing and querying high-dimensional vectors.

This blog post will guide you through harnessing these complementary technologies to unlock new possibilities in video AI applications.

Big thanks to Nina Williams, Austin Zaccor, Fernanda Heredia, and Emily Hutson from Databricks for collaborating with us on this tutorial!

Why Twelve Labs + Databricks Mosaic AI?

Integrating Twelve Labs Embed API with Databricks Mosaic AI Vector Search addresses key challenges in video AI, such as efficient processing of large-scale video datasets and accurate multimodal content representation. This integration reduces development time and resource needs for advanced video applications, enabling complex queries across vast video libraries and enhancing overall workflow efficiency.

The unified approach to handling multimodal data is particularly noteworthy. Instead of juggling separate models for text, image, and audio analysis, users can now work with a single, coherent representation that captures the essence of video content in its entirety. This not only simplifies deployment architecture but also enables more nuanced and context-aware applications, from sophisticated content recommendation systems to advanced video search engines and automated content moderation tools.

Moreover, this integration extends the capabilities of the Databricks ecosystem, allowing seamless incorporation of video understanding into existing data pipelines and machine learning workflows. Whether companies are developing real-time video analytics, building large-scale content classification systems, or exploring novel applications in Generative AI, this combined solution provides a powerful foundation. It pushes the boundaries of what's possible in video AI, opening up new avenues for innovation and problem-solving in industries ranging from media and entertainment to security and healthcare.

Understanding Twelve Labs' Embed API

Twelve Labs' Embed API represents a significant advancement in multimodal embedding technology, specifically designed for video content. Unlike traditional approaches that rely on frame-by-frame analysis or separate models for different modalities, this API generates contextual vector representations that capture the intricate interplay of visual expressions, body language, spoken words, and overall context within videos. It is powered by our state-of-the-art multimodal foundation model Marengo-2.6.

The Embed API offers several key features that make it particularly powerful for AI engineers working with video data. First, it provides flexibility for any modality present in videos, eliminating the need for separate text-only or image-only models. Second, it employs a video-native approach that accounts for motion, action, and temporal information, ensuring a more accurate and temporally coherent interpretation of video content. Lastly, it creates a unified vector space that integrates embeddings from all modalities, facilitating a more holistic understanding of the video content.

For AI engineers, the Embed API opens up new possibilities in video understanding tasks. It enables more sophisticated content analysis, improved semantic search capabilities, and enhanced recommendation systems. The API's ability to capture subtle cues and interactions between different modalities over time makes it particularly valuable for applications requiring a nuanced understanding of video content, such as emotion recognition, context-aware content moderation, and advanced video retrieval systems.

Prerequisites

Before integrating Twelve Labs Embed API with Databricks Mosaic AI Vector Search, be sure you have the following prerequisites:

A Databricks account with access to create and manage workspaces. (You can sign up for a free trial at https://databricks.com/try-databricks)

Familiarity with Python programming and basic data science concepts.

A Twelve Labs API key. (Sign up at playground.twelvelabs.io)

Basic understanding of vector embeddings and similarity search concepts.

(Optional) An AWS account if using Databricks on AWS. This is not required if using Databricks on Azure or Google Cloud.

Note: The Embed API is currently in private beta, but any user can request access by filling out this form. You will usually receive a confirmation email within a few hours, after which you can start using the Embed API.

Step 1: Set Up the Environment

To begin, set up the Databricks environment and install the necessary libraries:

1 - Create a new Databricks workspace:

Log in to your Databricks account at https://accounts.cloud.databricks.com/

Follow the steps outlined in the Databricks documentation to create a new workspace: https://docs.databricks.com/en/getting-started/index.html

2 - Create a new cluster or connect to an existing cluster:

Almost any ML cluster will work for this application. The settings below are for those seeking the best price-performance.

In your Compute tab, click “Create compute”

Select “Single node” and Runtime: 14.3 LTS ML non-GPU

The cluster policy and access mode can be left as the default

Select “r6i.xlarge” as the Node type

This will maximize memory utilization while only costing $0.252/hr on AWS and 1.02 DBU/hr on Databricks before any discounting

It was also one of the fastest options we tested

All other options can be left as the default

Click “Create compute” at the bottom and return to your workspace

3 - Create a new notebook in your Databricks workspace:

In your workspace, click "Create" and select "Notebook"

Name your notebook (e.g., "TwelveLabs_MosaicAI_VectorSearch_Integration")

Choose Python as the default language

4 - Install the Twelve Labs and Mosaic AI Vector Search SDKs:

In the first cell of your notebook, run the following command:

```
%pip install twelvelabs databricks-vectorsearch
```

5 - Set up Twelve Labs authentication:

In the next cell, add the following code:

```python
from twelvelabs import TwelveLabs

# Retrieve the API key from Databricks secrets (recommended)
# You'll need to set up the secret scope and add your API key first
TWELVE_LABS_API_KEY = dbutils.secrets.get(scope="your-scope", key="twelvelabs-api-key")

# dbutils.secrets.get raises an error if the secret doesn't exist; this guards against an empty value
if not TWELVE_LABS_API_KEY:
    raise ValueError("Twelve Labs API key is empty; check the 'your-scope' secret scope")

# Initialize the Twelve Labs client
twelvelabs_client = TwelveLabs(api_key=TWELVE_LABS_API_KEY)
```

Note: For enhanced security, it's recommended to use Databricks secrets to store your API key rather than hardcoding it or using environment variables.

Step 2: Generate Multimodal Embeddings

Use the get_video_embeddings function below to generate multimodal embeddings with the Twelve Labs Embed API. It is a pandas user-defined function (UDF), so it works efficiently with Spark DataFrames in Databricks. Inside it, a generate_embedding helper creates an embedding task, waits for it to finish, and retrieves the results.

A second helper, process_url, takes a video URL as a string, calls generate_embedding, and returns the embedding as an array<float>.

Here's how to implement and use it:

Define the UDF:

```python
from pyspark.sql.functions import pandas_udf
from pyspark.sql.types import ArrayType, FloatType
from twelvelabs import TwelveLabs
import pandas as pd

@pandas_udf(ArrayType(FloatType()))
def get_video_embeddings(urls: pd.Series) -> pd.Series:
    def generate_embedding(video_url):
        # Create an embedding task for one video and block until it finishes
        twelvelabs_client = TwelveLabs(api_key=TWELVE_LABS_API_KEY)
        task = twelvelabs_client.embed.task.create(
            engine_name="Marengo-retrieval-2.6",
            video_url=video_url
        )
        task.wait_for_done()
        task_result = twelvelabs_client.embed.task.retrieve(task.id)

        # Collect every segment embedding returned for the video
        embeddings = []
        for v in task_result.video_embeddings:
            embeddings.append({
                'embedding': v.embedding.float,
                'start_offset_sec': v.start_offset_sec,
                'end_offset_sec': v.end_offset_sec,
                'embedding_scope': v.embedding_scope
            })
        return embeddings

    def process_url(url):
        embeddings = generate_embedding(url)
        # Keep only the first segment's embedding, or None if nothing was returned
        return embeddings[0]['embedding'] if embeddings else None

    return urls.apply(process_url)
```
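process_url keeps only the first segment's vector, so for a video that yields several segments the stored embedding describes just one part of it. If you would rather store one vector for the whole video, one option (our assumption, not part of the Twelve Labs API or this tutorial's pipeline) is to average the segment vectors and scale the result to unit length. A minimal plain-Python sketch, using the hypothetical helper mean_pool_segments on the list of dicts that generate_embedding returns:

```python
import math
from typing import Dict, List, Optional


def mean_pool_segments(segments: List[Dict]) -> Optional[List[float]]:
    """Average per-segment embeddings into one unit-length vector.

    `segments` is assumed to be the list of dicts built by generate_embedding,
    each holding an 'embedding' list of floats of the same length.
    """
    if not segments:
        return None
    dim = len(segments[0]["embedding"])
    # Element-wise sum across all segments
    totals = [0.0] * dim
    for seg in segments:
        vec = seg["embedding"]
        if len(vec) != dim:
            raise ValueError("All segment embeddings must have the same dimension")
        for i, value in enumerate(vec):
            totals[i] += value
    mean = [t / len(segments) for t in totals]
    # Scale to unit length so vector magnitude doesn't dominate distance comparisons
    norm = math.sqrt(sum(v * v for v in mean))
    return [v / norm for v in mean] if norm > 0 else mean
```

To try it, process_url would return mean_pool_segments(generate_embedding(url)) instead of the first segment. Whether pooled vectors retrieve better than first-segment vectors for your library is something to measure, not assume.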

Create a sample DataFrame with video URLs:

```python
video_urls = [
    "https://example.com/video1.mp4",
    "https://example.com/video2.mp4",
    "https://example.com/video3.mp4"
]
df = spark.createDataFrame([(url,) for url in video_urls], ["video_url"])
```

Apply the UDF to generate embeddings:

```python
df_with_embeddings = df.withColumn("embedding", get_video_embeddings(df.video_url))
```

Display the results:

```python
df_with_embeddings.show(truncate=False)
```

This process generates an embedding for each video URL in the DataFrame, capturing the video's visual, audio, and textual information in a single vector.

Remember that generating embeddings can be computationally intensive and time-consuming for large video datasets. Consider implementing batching or distributed processing strategies for production-scale applications. Additionally, ensure that you have appropriate error handling and logging in place to manage potential API failures or network issues.
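The error-handling advice above can be made concrete with a retry wrapper. The sketch below is a generic pattern, not part of the Twelve Labs SDK: call_with_retries is a hypothetical helper that retries a zero-argument callable with exponential backoff and logs each failure. Which exceptions count as transient is an assumption you should narrow; the default retries on any Exception.

```python
import logging
import random
import time
from typing import Callable, Optional, Tuple, Type, TypeVar

T = TypeVar("T")
logger = logging.getLogger(__name__)


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), retrying with exponential backoff and jitter on listed exceptions."""
    last_error: Optional[BaseException] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on as exc:
            last_error = exc
            logger.warning("Attempt %d/%d failed: %r", attempt, max_attempts, exc)
            if attempt == max_attempts:
                break
            # Wait base_delay, 2*base_delay, 4*base_delay, ... between attempts
            delay = base_delay * (2 ** (attempt - 1))
            # Up to 10% random jitter so parallel workers don't retry in lockstep
            sleep(delay + random.uniform(0, 0.1 * delay))
    raise RuntimeError(f"All {max_attempts} attempts failed") from last_error
```

Inside the UDF, process_url could call call_with_retries(lambda: generate_embedding(url)). Keep in mind that each retry creates a new embedding task, so retries have a cost as well as a delay.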

Step 3: Create a Delta Table for Video Embeddings

Now, create a source Delta Table to store video metadata and the embeddings generated by Twelve Labs Embed API. This table will serve as the foundation for a Vector Search index in Databricks Mosaic AI Vector Search.

First, create a source DataFrame with video URLs and metadata:

```python
from pyspark.sql import Row

# Create a list of sample video URLs and metadata
video_data = [
    Row(url='http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/ElephantsDream.mp4', title='Elephants Dream'),
    Row(url='http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/Sintel.mp4', title='Sintel'),
    Row(url='http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/BigBuckBunny.mp4', title='Big Buck Bunny')
]

# Create a DataFrame from the list
source_df = spark.createDataFrame(video_data)
source_df.show()
```

Next, declare the schema for the Delta table using SQL:

```sql
%sql
CREATE TABLE IF NOT EXISTS videos_source_embeddings (
  id BIGINT GENERATED BY DEFAULT AS IDENTITY,
  url STRING,
  title STRING,
  embedding ARRAY<FLOAT>
) TBLPROPERTIES (delta.enableChangeDataFeed = true)
```

Note that Change Data Feed has been enabled on the table, which is crucial for creating and maintaining the Vector Search index.

Now, generate embeddings for your videos using the get_video_embeddings function defined earlier:

```python
embeddings_df = source_df.withColumn("embedding", get_video_embeddings("url"))
```

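Because Spark evaluates DataFrames lazily, this line returns immediately. The Embed API calls actually run when the DataFrame is written in the next step, which may take some time depending on the number and length of your videos.

Write the data to your Delta table: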

```python
embeddings_df.write.mode("append").saveAsTable("videos_source_embeddings")
```

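Finally, verify your data by reading it back from the Delta table. Querying the table, rather than displaying embeddings_df, avoids re-running the UDF (and calling the Embed API again), since embeddings_df is not cached:

```python
display(spark.table("videos_source_embeddings"))
```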

This step creates the foundation for Vector Search. Once you create a Delta Sync Index on this table in the next step, the index stays in sync with it, so updates or additions to your video dataset are reflected in your search results: on each triggered sync, or within seconds with a continuous pipeline.

Some key points to remember:

The `id` column is auto-generated, providing a unique identifier for each video.

The `embedding` column stores the high-dimensional vector representation of each video, generated by the Twelve Labs Embed API.

Enabling Change Data Feed allows Databricks to efficiently track changes in the table, which is crucial for maintaining an up-to-date Vector Search index.

Step 4: Configure Mosaic AI Vector Search

In this step, set up Databricks Mosaic AI Vector Search to work with video embeddings. This involves creating a Vector Search endpoint and a Delta Sync Index that will automatically stay in sync with your videos_source_embeddings Delta table.

First, create a Vector Search endpoint:

```python
from databricks.vector_search.client import VectorSearchClient

# Initialize the Vector Search client and name the endpoint
mosaic_client = VectorSearchClient()
endpoint_name = "twelve_labs_video_endpoint"

# Delete the existing endpoint if it exists
try:
    mosaic_client.delete_endpoint(endpoint_name)
    print(f"Deleted existing endpoint: {endpoint_name}")
except Exception:
    pass  # Ignore non-existing endpoints

# Create the new endpoint
endpoint = mosaic_client.create_endpoint(
    name=endpoint_name,
    endpoint_type="STANDARD"
)
```

This code creates a new Vector Search endpoint or replaces an existing one with the same name. The endpoint will serve as the access point for your Vector Search operations.

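Next, create a Delta Sync Index that stays in sync with your videos_source_embeddings Delta table. The code below assumes the table lives in the default schema of a Unity Catalog catalog named twelvelabs; adjust the three-part names to match where you created it: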

```python
# Define the source table name and index name
source_table_name = "twelvelabs.default.videos_source_embeddings"
index_name = "twelvelabs.default.video_embeddings_index"

index = mosaic_client.create_delta_sync_index(
    endpoint_name="twelve_labs_video_endpoint",
    source_table_name=source_table_name,
    index_name=index_name,
    primary_key="id",
    embedding_dimension=1024,
    embedding_vector_column="embedding",
    pipeline_type="TRIGGERED"
)
print(f"Created index: {index.name}")
```

This code creates a Delta Sync Index that links to your source Delta table. With pipeline_type="TRIGGERED", the index updates only when a sync is triggered. If you want the index to update automatically within seconds of changes to the source table, set pipeline_type="CONTINUOUS" instead.

To verify that the index has been created, check its status and trigger a sync with the following code:

```python
# Look up the index and check its status; initial provisioning may take some time
index = mosaic_client.get_index(
    endpoint_name="twelve_labs_video_endpoint",
    index_name="twelvelabs.default.video_embeddings_index"
)
# describe() returns the index metadata, including its status
print(f"Index status: {index.describe().get('status')}")

# Manually trigger the index sync (applies to TRIGGERED pipelines)
try:
    index.sync()
    print("Index sync triggered successfully.")
except Exception as e:
    print(f"Error triggering index sync: {e}")
```

This code allows you to check the status of your index and manually trigger a sync if needed. In production, you may prefer to set the pipeline to sync automatically based on changes to the source Delta table.

Key points to remember:

The Vector Search endpoint serves as the access point for Vector Search operations.

The Delta Sync Index stays in sync with the source Delta table, keeping search results up to date.

The `embedding_dimension` should match the dimension of the embeddings generated by the Twelve Labs Embed API (1024).

The `primary_key` is set to "id", which corresponds to the unique identifier in the source table.

The `embedding_vector_column` is set to "embedding", which should match the column in the source table that contains the video embeddings.
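Because the index is created with embedding_dimension=1024, rows whose vectors are missing (process_url returns None when no segments come back) or have a different length are likely to cause sync problems. A lightweight guard before writing to the Delta table can catch this early. A sketch, with the hypothetical helper find_bad_embeddings, that checks plain (url, embedding) pairs:

```python
import math
from typing import Iterable, List, Optional, Sequence, Tuple

EXPECTED_DIM = 1024  # must match embedding_dimension passed to create_delta_sync_index


def find_bad_embeddings(
    rows: Iterable[Tuple[str, Optional[Sequence[float]]]],
    expected_dim: int = EXPECTED_DIM,
) -> List[Tuple[str, str]]:
    """Return (url, reason) pairs for rows whose embedding is unusable."""
    problems = []
    for url, embedding in rows:
        if embedding is None:
            problems.append((url, "missing embedding"))
        elif len(embedding) != expected_dim:
            problems.append((url, f"dimension {len(embedding)} != {expected_dim}"))
        elif not all(math.isfinite(v) for v in embedding):
            problems.append((url, "non-finite value"))
    return problems
```

For a small sample you could build rows from something like spark.table("videos_source_embeddings").select("url", "embedding").collect(); for large tables, an equivalent check expressed as a Spark filter avoids pulling every vector to the driver.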

Step 5: Implementing Similarity Search

The next step is to implement similarity search functionality using your configured Mosaic AI Vector Search index and Twelve Labs Embed API. This will allow you to find videos similar to a given text query by leveraging the power of multimodal embeddings.

First, define a function to get the embedding for a text query using Twelve Labs Embed API:

```python
def get_text_embedding(text_query):
    # Twelve Labs Embed API supports text-to-embedding
    text_embedding = twelvelabs_client.embed.create(
        engine_name="Marengo-retrieval-2.6",
        text=text_query,
        text_truncate="start"
    )
    return text_embedding.text_embedding.float
```

This function takes a text query and returns its embedding using the same model as video embeddings, ensuring compatibility in the vector space.

Next, implement the similarity search function:

```python
def similarity_search(query_text, num_results=5):
    # Look up the index through the Vector Search client and embed the query text
    mosaic_client = VectorSearchClient()
    index = mosaic_client.get_index(endpoint_name=endpoint_name, index_name=index_name)
    query_embedding = get_text_embedding(query_text)
    print(f"Query embedding generated: {len(query_embedding)} dimensions")

    # Perform the similarity search
    results = index.similarity_search(
        query_vector=query_embedding,
        num_results=num_results,
        columns=["id", "url", "title"]
    )
    return results
```

This function takes a text query and the number of results to return. It generates an embedding for the query, and then uses the Mosaic AI Vector Search index to find similar videos.
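Conceptually, the index returns the stored vectors closest to the query vector. The exact, brute-force version below is only an illustration, useful for small collections or for sanity-checking index results on a sample. It is not how Mosaic AI Vector Search works internally (the managed service uses approximate nearest-neighbor search), and ranking by L2 distance here is our assumption about the metric; check the Databricks documentation for your workspace. The helper names are hypothetical.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def l2_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def exact_top_k(
    query: Sequence[float],
    vectors: Dict[int, Sequence[float]],
    k: int = 5,
) -> List[Tuple[int, float]]:
    """Return (id, distance) for the k stored vectors nearest to query, closest first."""
    scored = ((vid, l2_distance(query, vec)) for vid, vec in vectors.items())
    return heapq.nsmallest(k, scored, key=lambda pair: pair[1])
```

Comparing the ids from exact_top_k with those from index.similarity_search on a few queries is one simple way to spot configuration mistakes, such as a mismatched embedding column.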

To parse and display the search results, use the following helper function:

```python
def parse_search_results(raw_results):
    try:
        data_array = raw_results['result']['data_array']
        columns = [col['name'] for col in raw_results['manifest']['columns']]
        return [dict(zip(columns, row)) for row in data_array]
    except KeyError:
        print("Unexpected result format:", raw_results)
        return []
```
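To see what parse_search_results does, here it is applied to a hand-written payload. The payload is an assumption that mirrors only the keys the helper reads (manifest.columns and result.data_array); real responses may carry extra fields, and the score column name reflects what the display code in this tutorial expects. The function is repeated so the snippet runs on its own.

```python
def parse_search_results(raw_results):
    try:
        data_array = raw_results['result']['data_array']
        columns = [col['name'] for col in raw_results['manifest']['columns']]
        return [dict(zip(columns, row)) for row in data_array]
    except KeyError:
        print("Unexpected result format:", raw_results)
        return []


# Hand-written payload with only the keys parse_search_results reads (illustrative values)
sample_response = {
    "manifest": {"columns": [{"name": "id"}, {"name": "url"}, {"name": "title"}, {"name": "score"}]},
    "result": {"data_array": [
        [3, "https://example.com/video3.mp4", "Video 3", 0.82],
        [1, "https://example.com/video1.mp4", "Video 1", 0.64],
    ]},
}

parsed = parse_search_results(sample_response)
print(parsed[0])
# {'id': 3, 'url': 'https://example.com/video3.mp4', 'title': 'Video 3', 'score': 0.82}
```

Each row becomes a dictionary keyed by column name, which is why the display code can use result.get('title') and result.get('score').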

Now, put it all together and perform a sample search:

```python
# Example usage
query = "A dragon"
raw_results = similarity_search(query)

# Parse and print the search results
search_results = parse_search_results(raw_results)
if search_results:
    print(f"Top {len(search_results)} videos similar to the query: '{query}'")
    for i, result in enumerate(search_results, 1):
        print(f"{i}. Title: {result.get('title', 'N/A')}, URL: {result.get('url', 'N/A')}, Similarity Score: {result.get('score', 'N/A')}")
else:
    print("No valid search results returned.")
```

This code uses the similarity_search function to find videos related to the query "A dragon", then parses and displays the results in a readable format.

Key points to remember:

The `get_text_embedding` function uses the same Twelve Labs model as the video embeddings, ensuring compatibility.

The `similarity_search` function combines text-to-embedding conversion with Vector Search to find similar videos.

Error handling is crucial, as network issues or API changes could affect the search process.

The `parse_search_results` function converts the raw API response into a more usable format.

You can adjust the `num_results` parameter of `similarity_search` to control the number of results returned.

This implementation enables powerful semantic search capabilities across your video dataset. Users can now find relevant videos using natural language queries, leveraging the rich multimodal embeddings generated by Twelve Labs Embed API.

Step 6: Build a Video Recommendation System

Now, it’s time to create a basic video recommendation system using the multimodal embeddings generated by Twelve Labs Embed API and Databricks Mosaic AI Vector Search. This system will suggest videos similar to a given video based on their embedding similarities.

First, implement a simple recommendation function:

```python
def get_video_recommendations(video_id, num_recommendations=5):
    # Look up the index through the Vector Search client
    mosaic_client = VectorSearchClient()
    index = mosaic_client.get_index(endpoint_name=endpoint_name, index_name=index_name)

    # First, retrieve the embedding for the given video_id
    source_df = spark.table("videos_source_embeddings")
    video_embedding = source_df.filter(f"id = {int(video_id)}").select("embedding").first()
    if video_embedding is None or video_embedding["embedding"] is None:
        print(f"No video with an embedding found for id: {video_id}")
        return []

    # Perform similarity search using the video's embedding
    try:
        results = index.similarity_search(
            query_vector=video_embedding["embedding"],
            num_results=num_recommendations + 1,  # +1 to account for the input video
            columns=["id", "url", "title"]
        )
        # Parse the results and remove the input video if it was returned
        recommendations = parse_search_results(results)
        recommendations = [r for r in recommendations if r.get('id') != video_id]
        return recommendations[:num_recommendations]
    except Exception as e:
        print(f"Error during recommendation: {e}")
        return []


# Helper function to display recommendations
def display_recommendations(recommendations):
    if recommendations:
        print(f"Top {len(recommendations)} recommended videos:")
        for i, video in enumerate(recommendations, 1):
            print(f"{i}. Title: {video.get('title', 'N/A')}")
            print(f"   URL: {video.get('url', 'N/A')}")
            print(f"   Similarity Score: {video.get('score', 'N/A')}")
            print()
    else:
        print("No recommendations found.")


# Example usage
video_id = 1  # Assuming this is a valid video ID in your dataset
recommendations = get_video_recommendations(video_id)
display_recommendations(recommendations)
```

This implementation does the following:

The `get_video_recommendations` function takes a video ID and the number of recommendations to return.

It retrieves the embedding for the given video from the source Delta table.

Using this embedding, it performs a similarity search to find the most similar videos.

The function removes the input video from the results (if present) to avoid recommending the same video.

The `display_recommendations` helper function formats and prints the recommendations in a user-friendly manner.

To use this recommendation system:

Ensure you have videos in your `videos_source_embeddings` table with valid embeddings.

Call the `get_video_recommendations` function with a valid video ID from your dataset.

The function returns a list of recommended videos based on similarity, which `display_recommendations` then prints.

This basic recommendation system demonstrates how to leverage multimodal embeddings for content-based video recommendations. It can be extended and improved in several ways:

Incorporate user preferences and viewing history for personalized recommendations.

Implement diversity mechanisms to ensure varied recommendations.

Add filters based on video metadata (e.g., genre, length, upload date).

Implement caching mechanisms for frequently requested recommendations to improve performance.
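One way to add the diversity mentioned above is maximal marginal relevance (MMR) re-ranking: request more candidates than you need from the index, then greedily pick items that are relevant to the seed video but not too similar to items already picked. MMR is a standard technique swapped in here, not part of this tutorial's pipeline; the function names, the cosine-similarity choice, and the lambda_ weight are assumptions. Candidate embeddings would come from the embedding column of the source Delta table.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mmr_rerank(
    seed: Sequence[float],
    candidates: Dict[int, Sequence[float]],
    k: int = 5,
    lambda_: float = 0.7,
) -> List[int]:
    """Greedily pick k candidate ids, trading relevance to seed against redundancy.

    lambda_=1.0 ranks purely by relevance; smaller values favor diversity.
    """
    relevance = {cid: cosine(seed, vec) for cid, vec in candidates.items()}
    selected: List[int] = []
    remaining = set(candidates)

    def mmr_score(cid: int) -> float:
        # Redundancy = highest similarity to any already-selected candidate
        redundancy = max((cosine(candidates[cid], candidates[s]) for s in selected), default=0.0)
        return lambda_ * relevance[cid] - (1 - lambda_) * redundancy

    while remaining and len(selected) < k:
        # sorted() makes tie-breaking deterministic (lowest id wins)
        best = max(sorted(remaining), key=mmr_score)
        selected.append(best)
        remaining.remove(best)
    return selected
```

With lambda_ close to 1.0 the order matches plain similarity ranking; lowering it pushes near-duplicates of already-chosen videos further down the list.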

Remember that the quality of recommendations depends on the size and diversity of your video dataset, as well as the accuracy of the embeddings generated by Twelve Labs Embed API. As you add more videos to your system, the recommendations should become more relevant and diverse.

Take this Integration to the Next Level

Update and Sync the Index

As your video library grows and evolves, it's crucial to keep your Vector Search index up-to-date. Mosaic AI Vector Search offers seamless synchronization with your source Delta table, ensuring that recommendations and search results always reflect the latest data.

Key considerations for index updates and synchronization:

Incremental updates: Leverage Delta Lake's change data feed to efficiently update only the modified or new records in your index.

Scheduled syncs: Implement regular synchronization jobs using Databricks workflow orchestration tools to maintain index freshness.

Real-time updates: For time-sensitive applications, consider a Delta Sync Index with pipeline_type="CONTINUOUS", which updates the index within seconds of changes to the source table.

Version management: Use Delta Lake's time travel feature to keep earlier versions of the source table, so you can roll back the data and resync the index if needed.

Monitoring sync status: Implement logging and alerting mechanisms to track successful syncs and quickly identify any issues in the update process.
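For the sync-status point, a simple building block is a poll-with-timeout loop around whatever status check you use. The sketch below takes the status check as a function, so it runs without Databricks. wait_until_ready is a hypothetical name, and wiring it to index.describe() by reading a ready flag from the status field is an assumption about the response shape; inspect describe() output in your workspace before relying on it.

```python
import time
from typing import Callable


def wait_until_ready(
    is_ready: Callable[[], bool],
    timeout_s: float = 600.0,
    interval_s: float = 10.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll is_ready() until it returns True or timeout_s elapses.

    Returns True if ready, False on timeout, so callers can log or alert.
    """
    deadline = clock() + timeout_s
    while True:
        if is_ready():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)


# Hypothetical wiring; adjust the keys to the describe() output you actually see:
# ready = wait_until_ready(lambda: index.describe().get("status", {}).get("ready", False))
```

Returning a boolean instead of raising keeps the alerting decision with the caller, for example a scheduled job that logs a failure when the index is not ready after a sync.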

By mastering these techniques, you'll ensure that your Twelve Labs video embeddings are always current and readily available for advanced search and recommendation use cases.

Optimize Performance and Scaling

As your video analysis pipeline grows, it is important to continue optimizing performance and scaling your solution. Distributed computing capabilities from Databricks, combined with efficient embedding generation from Twelve Labs, provide a robust foundation for handling large-scale video processing tasks.

Consider these strategies for optimizing and scaling your solution:

Distributed processing: Leverage Databricks Spark clusters to parallelize embedding generation and indexing tasks across multiple nodes.

Caching strategies: Implement intelligent caching mechanisms for frequently accessed embeddings to reduce API calls and improve response times.

Batch processing: For large video libraries, implement batch processing workflows to generate embeddings and update indexes during off-peak hours.

Query optimization: Fine-tune Vector Search queries by adjusting parameters like `num_results` and implementing efficient filtering techniques.

Index partitioning: For massive datasets, explore index partitioning strategies to improve query performance and enable more granular updates.

Auto-scaling: Utilize Databricks auto-scaling features to dynamically adjust computational resources based on workload demands.

Edge computing: For latency-sensitive applications, consider deploying lightweight versions of your models closer to the data source.
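For the caching point, repeated text queries are a natural target: the same query string sent to the same model should produce the same embedding, so caching avoids a second Embed API call. A minimal in-process sketch using functools.lru_cache. embed_text_uncached is a hypothetical stand-in for get_text_embedding, and the counter exists only to show the effect. A cache like this lives per Python process, so each Spark executor or serving replica keeps its own.

```python
from functools import lru_cache
from typing import Tuple

api_calls = {"count": 0}  # illustration only: counts simulated Embed API calls


def embed_text_uncached(text: str) -> Tuple[float, ...]:
    """Stand-in for get_text_embedding; swap in the real Embed API call."""
    api_calls["count"] += 1
    return (float(len(text)), 1.0)  # dummy 2-d vector for the sketch


@lru_cache(maxsize=1024)
def embed_text_cached(text: str) -> Tuple[float, ...]:
    # Store a tuple so callers can't mutate the cached value in place
    return tuple(embed_text_uncached(text))


embed_text_cached("A dragon")
embed_text_cached("A dragon")  # served from the cache
print(api_calls["count"])  # 1
```

If you switch models, clear the cache (embed_text_cached.cache_clear()) so old embeddings are not mixed with new ones.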

By implementing these optimization techniques, you'll be well-equipped to handle growing video libraries and increasing user demands while maintaining high performance and cost efficiency.

Monitoring and Analytics

Implementing robust monitoring and analytics is essential to ensuring the ongoing success of your video understanding pipeline. Databricks provides powerful tools for tracking system performance, user engagement, and business impact.

Key areas to focus on for monitoring and analytics:

Performance metrics: Track key performance indicators such as query latency, embedding generation time, and index update duration.

Usage analytics: Monitor user interactions, popular search queries, and frequently recommended videos to gain insights into user behavior.

Quality assessment: Implement feedback loops to evaluate the relevance of search results and recommendations, using both automated metrics and user feedback.

Resource utilization: Keep an eye on computational resource usage, API call volumes, and storage consumption to optimize costs and performance.

Error tracking: Set up comprehensive error logging and alerting to quickly identify and resolve issues in the pipeline.

A/B testing: Utilize experimentation capabilities from Databricks to test different embedding models, search algorithms, or recommendation strategies.

Business impact analysis: Correlate video understanding capabilities with key business metrics like user engagement, content consumption, or conversion rates.

Compliance monitoring: Ensure your video processing pipeline adheres to data privacy regulations and content moderation guidelines.
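Query latency and embedding generation time from the list above can be captured with a small timing decorator. This sketch keeps measurements in an in-memory dict and reports nearest-rank percentiles; the names timed and latency_report are hypothetical, and in practice you would send the numbers to your logging or metrics system (for example, a Delta table).

```python
import math
import time
from collections import defaultdict
from functools import wraps
from typing import Callable, Dict, List

# In-memory store: function name -> list of call durations in seconds
_latencies: Dict[str, List[float]] = defaultdict(list)


def timed(fn: Callable) -> Callable:
    """Decorator that records the wall-clock duration of every call, even failed ones."""
    @wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        finally:
            _latencies[fn.__name__].append(time.perf_counter() - start)
    return wrapper


def latency_report(name: str) -> Dict[str, float]:
    """Call count plus nearest-rank p50/p95 latency (seconds) for one function."""
    samples = sorted(_latencies.get(name, []))
    if not samples:
        return {"count": 0}

    def percentile(p: float) -> float:
        # Nearest-rank method: smallest sample with at least p% of samples at or below it
        rank = max(1, math.ceil(p / 100 * len(samples)))
        return samples[rank - 1]

    return {"count": len(samples), "p50": percentile(50), "p95": percentile(95)}
```

Wrapping an existing function, for example similarity_search = timed(similarity_search), leaves its behavior unchanged; latency_report("similarity_search") then summarizes the recorded calls.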

By implementing a comprehensive monitoring and analytics strategy, you'll gain valuable insights into your video understanding pipeline's performance and impact. This data-driven approach will enable continuous improvement and help you demonstrate the value of integrating advanced video understanding capabilities from Twelve Labs with the Databricks Data Intelligence Platform.

Conclusion

Twelve Labs and Databricks Mosaic AI provide a robust framework for advanced video understanding and analysis. This integration leverages multimodal embeddings and efficient Vector Search capabilities, enabling developers to construct sophisticated video search, recommendation, and analysis systems.

This tutorial has walked through the technical steps of setting up the environment, generating embeddings, configuring Vector Search, and implementing basic search and recommendation functionalities. It also covered key considerations for scaling, optimizing, and monitoring your solution.

In the evolving landscape of video content, the ability to extract precise insights from this medium is critical. This integration equips developers with the tools to address complex video understanding tasks. We encourage you to explore the technical capabilities, experiment with advanced use cases, and contribute to the community of AI engineers advancing video understanding technology.

Additional Resources

To further explore and leverage this integration, consider the following resources:

These resources will help you stay at the forefront of video AI technology and continue to build innovative solutions using Twelve Labs and Databricks.

Short Summary

Twelve Labs Embed API enables developers to get multimodal embeddings that power advanced video understanding use cases, from semantic video search and data curation to content recommendation and video RAG systems.

With Twelve Labs, contextual vector representations can be generated that capture the relationship between visual expressions, body language, spoken words, and overall context within videos. Databricks Mosaic AI Vector Search provides a robust, scalable infrastructure for indexing and querying high-dimensional vectors.

This blog post will guide you through harnessing these complementary technologies to unlock new possibilities in video AI applications.

Big thanks to Nina Williams, Austin Zaccor, Fernanda Heredia, and Emily Hutson from Databricks for collaborating with us on this tutorial!

Why Twelve Labs + Databricks Mosaic AI?

Integrating Twelve Labs Embed API with Databricks Mosaic AI Vector Search addresses key challenges in video AI, such as efficient processing of large-scale video datasets and accurate multimodal content representation. This integration reduces development time and resource needs for advanced video applications, enabling complex queries across vast video libraries and enhancing overall workflow efficiency.

The unified approach to handling multimodal data is particularly noteworthy. Instead of juggling separate models for text, image, and audio analysis, users can now work with a single, coherent representation that captures the essence of video content in its entirety. This not only simplifies deployment architecture but also enables more nuanced and context-aware applications, from sophisticated content recommendation systems to advanced video search engines and automated content moderation tools.

Moreover, this integration extends the capabilities of the Databricks ecosystem, allowing seamless incorporation of video understanding into existing data pipelines and machine learning workflows. Whether companies are developing real-time video analytics, building large-scale content classification systems, or exploring novel applications in Generative AI, this combined solution provides a powerful foundation. It pushes the boundaries of what's possible in video AI, opening up new avenues for innovation and problem-solving in industries ranging from media and entertainment to security and healthcare.

Understanding Twelve Labs' Embed API

Twelve Labs' Embed API represents a significant advancement in multimodal embedding technology, specifically designed for video content. Unlike traditional approaches that rely on frame-by-frame analysis or separate models for different modalities, this API generates contextual vector representations that capture the intricate interplay of visual expressions, body language, spoken words, and overall context within videos. It is powered by our state-of-the-art multimodal foundation model Marengo-2.6.

The Embed API offers several key features that make it particularly powerful for AI engineers working with video data. First, it provides flexibility for any modality present in videos, eliminating the need for separate text-only or image-only models. Second, it employs a video-native approach that accounts for motion, action, and temporal information, ensuring a more accurate and temporally coherent interpretation of video content. Lastly, it creates a unified vector space that integrates embeddings from all modalities, facilitating a more holistic understanding of the video content.

For AI engineers, the Embed API opens up new possibilities in video understanding tasks. It enables more sophisticated content analysis, improved semantic search capabilities, and enhanced recommendation systems. The API's ability to capture subtle cues and interactions between different modalities over time makes it particularly valuable for applications requiring a nuanced understanding of video content, such as emotion recognition, context-aware content moderation, and advanced video retrieval systems.

Prerequisites

Before integrating Twelve Labs Embed API with Databricks Mosaic AI Vector Search, be sure you have the following prerequisites:

A Databricks account with access to create and manage workspaces. (You can sign up for a free trial at https://databricks.com/try-databricks)

Familiarity with Python programming and basic data science concepts.

A Twelve Labs API key. (Sign up at playground.twelvelabs.io)

Basic understanding of vector embeddings and similarity search concepts.

(Optional) An AWS account if using Databricks on AWS. This is not required if using Databricks on Azure or Google Cloud.

Note: The Embed API is currently in private beta, but any user can request access by filling out this form. You will usually receive a confirmation email within a few hours, after which you can start using the Embed API.

Step 1: Set Up the Environment

To begin, set up the Databricks environment and install the necessary libraries:

1 - Create a new Databricks workspace:

Log in to your Databricks account at https://accounts.cloud.databricks.com/

Follow the steps outlined in the Databricks documentation to create a new workspace: https://docs.databricks.com/en/getting-started/index.html

2 - Create a new cluster or connect to an existing cluster:

Almost any ML cluster will work for this application. The settings below are for those seeking the best price-performance.

In your Compute tab, click “Create compute”

Select “Single node” and Runtime: 14.3 LTS ML non-GPU

The cluster policy and access mode can be left as the default

Select “r6i.xlarge” as the Node type

This will maximize memory utilization while only costing $0.252/hr on AWS and 1.02 DBU/hr on Databricks before any discounting

It was also one of the fastest options we tested

All other options can be left as the default

Click “Create compute” at the bottom and return to your workspace
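To put the quoted rates in perspective, here is a small sketch that estimates the pre-discount cost of running this single-node cluster. The $0.252/hr instance price and 1.02 DBU/hr come from the settings above. The price per DBU depends on your Databricks plan, cloud, and region, so it is left as a parameter. The function name and arguments are hypothetical.

```python
def estimate_cluster_cost(hours: float, dbu_price_usd: float,
                          instance_usd_per_hr: float = 0.252,
                          dbu_per_hr: float = 1.02) -> float:
    """Rough pre-discount cost of the single-node cluster, in USD.

    total = hours * (cloud instance price per hour + DBUs per hour * price per DBU)
    dbu_price_usd is plan- and region-specific; look it up for your account.
    """
    if hours < 0 or dbu_price_usd < 0:
        raise ValueError("hours and dbu_price_usd must be non-negative")
    hourly = instance_usd_per_hr + dbu_per_hr * dbu_price_usd
    return hours * hourly


# Example: 8 hours of notebook work at a hypothetical $0.50 per DBU
print(round(estimate_cluster_cost(8, 0.50), 3))
```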

3 - Create a new notebook in your Databricks workspace:

In your workspace, click "Create" and select "Notebook"

Name your notebook (e.g., "TwelveLabs_MosaicAI_VectorSearch_Integration")

Choose Python as the default language

4 - Install the Twelve Labs and Mosaic AI Vector Search SDKs:

In the first cell of your notebook, run the following command:

```
%pip install twelvelabs databricks-vectorsearch
```

5 - Set up Twelve Labs authentication:

In the next cell, add the following code:

```python
from twelvelabs import TwelveLabs

# Retrieve the API key from Databricks secrets (recommended).
# You'll need to create the secret scope and add your API key first.
TWELVE_LABS_API_KEY = dbutils.secrets.get(scope="your-scope", key="twelvelabs-api-key")

# dbutils.secrets.get raises an error if the scope or key does not exist;
# this check guards against an empty secret value.
if not TWELVE_LABS_API_KEY:
    raise ValueError("The twelvelabs-api-key secret is empty")

# Initialize the Twelve Labs client
twelvelabs_client = TwelveLabs(api_key=TWELVE_LABS_API_KEY)
```

Note: For enhanced security, it's recommended to use Databricks secrets to store your API key rather than hardcoding it or using environment variables.

Step 2: Generate Multimodal Embeddings

Next, define a pandas user-defined function (UDF), get_video_embeddings, that generates multimodal embeddings with Twelve Labs Embed API. A pandas UDF works efficiently with Spark DataFrames in Databricks because it processes a batch of rows at a time. Inside it, two helpers do the work:

generate_embedding creates an embedding task for a video URL, waits for the task to finish, and retrieves the results.

process_url calls generate_embedding for a single URL and returns the first embedding as an array<float> (or None if no embeddings were returned).

Here's how to implement and use it:

Define the UDF:

```python
import pandas as pd
from pyspark.sql.functions import pandas_udf
from pyspark.sql.types import ArrayType, FloatType
from twelvelabs import TwelveLabs


@pandas_udf(ArrayType(FloatType()))
def get_video_embeddings(urls: pd.Series) -> pd.Series:
    # Create one client per batch rather than one per URL
    twelvelabs_client = TwelveLabs(api_key=TWELVE_LABS_API_KEY)

    def generate_embedding(video_url):
        # Start an embedding task and block until it completes
        task = twelvelabs_client.embed.task.create(
            engine_name="Marengo-retrieval-2.6",
            video_url=video_url,
        )
        task.wait_for_done()
        task_result = twelvelabs_client.embed.task.retrieve(task.id)

        # Collect every returned embedding together with its time range
        embeddings = []
        for v in task_result.video_embeddings:
            embeddings.append({
                "embedding": v.embedding.float,
                "start_offset_sec": v.start_offset_sec,
                "end_offset_sec": v.end_offset_sec,
                "embedding_scope": v.embedding_scope,
            })
        return embeddings

    def process_url(url):
        embeddings = generate_embedding(url)
        # Keep only the first embedding as the video's vector
        return embeddings[0]["embedding"] if embeddings else None

    return urls.apply(process_url)
```

Create a sample DataFrame with video URLs:

```python
# Placeholder URLs; replace them with publicly accessible video files
video_urls = [
    "https://example.com/video1.mp4",
    "https://example.com/video2.mp4",
    "https://example.com/video3.mp4",
]
df = spark.createDataFrame([(url,) for url in video_urls], ["video_url"])
```

Apply the UDF to generate embeddings:

```python
df_with_embeddings = df.withColumn("embedding", get_video_embeddings(df.video_url))
```

Display the results:

```python
df_with_embeddings.show(truncate=False)
```

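This adds an embedding column to the DataFrame containing one vector per video URL. Each vector captures the video's visual, audio, and textual information. Note that process_url keeps only the first embedding returned by the API. When the API returns several segment-level embeddings, that vector describes only the first segment.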
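If you want one vector that reflects the whole video rather than a single segment, one common option is to average the segment embeddings, weighting each by its duration, and then L2-normalize the result. This is our suggestion, not what the UDF above does. The sketch assumes the list-of-dicts format built by generate_embedding. pool_segment_embeddings is a hypothetical helper.

```python
import math
from typing import Dict, List, Optional


def pool_segment_embeddings(segments: List[Dict]) -> Optional[List[float]]:
    """Duration-weighted mean of segment embeddings, scaled to unit length.

    Each segment is a dict like those built in generate_embedding:
    {'embedding': [...], 'start_offset_sec': float, 'end_offset_sec': float, ...}
    Returns None when there is nothing to pool.
    """
    if not segments:
        return None
    dim = len(segments[0]["embedding"])
    pooled = [0.0] * dim
    total_weight = 0.0
    for seg in segments:
        vec = seg["embedding"]
        if len(vec) != dim:
            raise ValueError("all segment embeddings must have the same dimension")
        # Weight by segment length; fall back to 1.0 if offsets are missing or empty
        start = seg.get("start_offset_sec") or 0.0
        end = seg.get("end_offset_sec") or 0.0
        weight = end - start if end > start else 1.0
        for i, x in enumerate(vec):
            pooled[i] += weight * x
        total_weight += weight
    pooled = [x / total_weight for x in pooled]
    # L2-normalize to unit length (averaging shrinks the norm)
    norm = math.sqrt(sum(x * x for x in pooled))
    return [x / norm for x in pooled] if norm > 0 else pooled
```

To use it, process_url could return pool_segment_embeddings(generate_embedding(url)) instead of the first embedding.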

Remember that generating embeddings can be computationally intensive and time-consuming for large video datasets. Consider implementing batching or distributed processing strategies for production-scale applications. Additionally, ensure that you have appropriate error handling and logging in place to manage potential API failures or network issues.
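As a starting point for that error handling, the sketch below wraps a call in retries with exponential backoff and jitter. For example, the call could be generate_embedding for a single URL. It is plain Python with no Twelve Labs or Spark dependency. The name call_with_retries and its default values are our own assumptions, so tune the attempt count and delays to your API rate limits.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay_s: float = 1.0,
    max_delay_s: float = 30.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), retrying on failure with exponential backoff plus jitter.

    The delay before retry n (1-based) is min(max_delay_s, base_delay_s * 2**(n-1))
    scaled by a random factor in [0.5, 1.0]. After max_attempts failures the last
    exception is re-raised. sleep is injectable so the logic can be tested quickly.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise
            delay = min(max_delay_s, base_delay_s * 2 ** (attempt - 1))
            sleep(delay * random.uniform(0.5, 1.0))
    raise AssertionError("unreachable")
```

Inside the UDF, process_url could call call_with_retries(lambda: generate_embedding(url)) inside a try/except that returns None on final failure. That way, one bad video does not fail the whole Spark job.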

Step 3: Create a Delta Table for Video Embeddings

Now, create a source Delta Table to store video metadata and the embeddings generated by Twelve Labs Embed API. This table will serve as the foundation for a Vector Search index in Databricks Mosaic AI Vector Search.

First, create a source DataFrame with video URLs and metadata:

```python
from pyspark.sql import Row

# Create a list of sample video URLs and metadata
video_data = [
    Row(url="http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/ElephantsDream.mp4", title="Elephants Dream"),
    Row(url="http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/Sintel.mp4", title="Sintel"),
    Row(url="http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/BigBuckBunny.mp4", title="Big Buck Bunny"),
]

# Create a DataFrame from the list
source_df = spark.createDataFrame(video_data)
source_df.show()
```

Next, declare the schema for the Delta table using SQL:

```sql
%sql
CREATE TABLE IF NOT EXISTS videos_source_embeddings (
  id BIGINT GENERATED BY DEFAULT AS IDENTITY,
  url STRING,
  title STRING,
  embedding ARRAY<FLOAT>
) TBLPROPERTIES (delta.enableChangeDataFeed = true)
```

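Note that Change Data Feed has been enabled on the table. A Delta Sync index requires it, so this setting is essential for creating and maintaining the Vector Search index. Step 4 refers to this table by its three-level Unity Catalog name, twelvelabs.default.videos_source_embeddings. Either run this notebook with twelvelabs.default as the current catalog and schema, or adjust the table and index names to match your setup.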

Now, generate embeddings for your videos using the get_video_embeddings function defined earlier:

```python
embeddings_df = source_df.withColumn("embedding", get_video_embeddings("url"))
```

Spark evaluates this transformation lazily, so the Embed API calls actually run when the data is written. Depending on the number and length of your videos, that may take some time.

Write the data to your Delta table, which triggers the embedding generation:

```python
embeddings_df.write.mode("append").saveAsTable("videos_source_embeddings")
```

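Finally, verify your data by reading the Delta table back. Displaying the table rather than embeddings_df has two advantages: it avoids recomputing the embeddings (and calling the Embed API again), and it shows the generated id values:

```python
display(spark.table("videos_source_embeddings"))
```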

This step creates a robust foundation for Vector Search capabilities. The Vector Search index you create in Step 4 syncs from this Delta table, so updates or additions to your video dataset are reflected in your search results. In continuous mode this happens within seconds of a change; in triggered mode it happens on each sync.

Some key points to remember:

The id column is auto-generated, providing a unique identifier for each video.

The embedding column stores the high-dimensional vector representation of each video, generated by Twelve Labs Embed API.

Enabling Change Data Feed allows Databricks to efficiently track changes in the table, which is crucial for maintaining an up-to-date Vector Search index.

Step 4: Configure Mosaic AI Vector Search

In this step, set up Databricks Mosaic AI Vector Search to work with video embeddings. This involves creating a Vector Search endpoint and a Delta Sync Index that will automatically stay in sync with your videos_source_embeddings Delta table.

First, create a Vector Search endpoint:

```python
from databricks.vector_search.client import VectorSearchClient

# Initialize the Vector Search client and name the endpoint
mosaic_client = VectorSearchClient()
endpoint_name = "twelve_labs_video_endpoint"

# Delete the existing endpoint if it exists
try:
    mosaic_client.delete_endpoint(endpoint_name)
    print(f"Deleted existing endpoint: {endpoint_name}")
except Exception:
    pass  # Ignore non-existing endpoints

# Create the new endpoint
endpoint = mosaic_client.create_endpoint(
    name=endpoint_name,
    endpoint_type="STANDARD",
)
```

This code creates a new Vector Search endpoint or replaces an existing one with the same name. The endpoint will serve as the access point for your Vector Search operations.

Next, create a Delta Sync Index that will automatically stay in sync with your videos_source_embeddings Delta table:

```python
# Define the source table name and index name
source_table_name = "twelvelabs.default.videos_source_embeddings"
index_name = "twelvelabs.default.video_embeddings_index"

index = mosaic_client.create_delta_sync_index(
    endpoint_name="twelve_labs_video_endpoint",
    source_table_name=source_table_name,
    index_name=index_name,
    primary_key="id",
    embedding_dimension=1024,
    embedding_vector_column="embedding",
    pipeline_type="TRIGGERED",
)
print(f"Created index: {index.name}")
```

This code creates a Delta Sync Index that links to your source Delta table. With pipeline_type="TRIGGERED", the index is updated only when you trigger a sync. If you want the index to update automatically within seconds of changes to the source table, so that your Vector Search results are always current, set pipeline_type="CONTINUOUS" instead.

To verify that the index has been created, check its status and trigger a sync with the following code:

```python
# Check the status of the index; the initial sync may take some time
index = mosaic_client.get_index(
    endpoint_name="twelve_labs_video_endpoint",
    index_name="twelvelabs.default.video_embeddings_index",
)
index_status = index.describe().get("status")
print(f"Index status: {index_status}")

# Manually trigger the index sync (applies to TRIGGERED pipelines)
try:
    index.sync()
    print("Index sync triggered successfully.")
except Exception as e:
    print(f"Error triggering index sync: {e}")
```

This code allows you to check the status of your index and manually trigger a sync if needed. In production, you may prefer to set the pipeline to sync automatically based on changes to the source Delta table.
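Endpoint provisioning and the initial sync can take a while. Rather than re-running the status cell by hand, you can poll until the index is ready. The generic helper below polls any status check until it passes or a timeout expires. The commented usage example assumes that the dictionary returned by describe() reports readiness under status["ready"]. That field name is an assumption, so verify it against the databricks-vectorsearch version you have installed. wait_until is a hypothetical helper.

```python
import time
from typing import Callable


def wait_until(
    check: Callable[[], bool],
    timeout_s: float = 1800.0,
    interval_s: float = 30.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll check() every interval_s seconds until it returns True.

    Returns True as soon as check() succeeds, or False once timeout_s has
    elapsed. clock and sleep are injectable for testing.
    """
    deadline = clock() + timeout_s
    while True:
        if check():
            return True
        remaining = deadline - clock()
        if remaining <= 0:
            return False
        sleep(min(interval_s, remaining))


# Example (assumes describe() exposes status["ready"]; check your SDK version):
# ready = wait_until(lambda: mosaic_client.get_index(
#     endpoint_name="twelve_labs_video_endpoint",
#     index_name="twelvelabs.default.video_embeddings_index",
# ).describe()["status"].get("ready", False))
```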

Key points to remember:

The Vector Search endpoint serves as the access point for Vector Search operations.

The Delta Sync Index stays in sync with the source Delta table, either continuously or each time you trigger a sync, ensuring up-to-date search results.

The embedding_dimension should match the dimension of the embeddings generated by Twelve Labs' Embed API (1024).

The primary_key is set to "id", which should correspond to the unique identifier in your source table.

The embedding_vector_column is set to "embedding", which should match the column name in your source table containing the video embeddings.
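Because embedding_dimension must match the vectors in the source table, a cheap check before syncing can catch problems before they reach the index. Examples include a None left by a failed API call or a vector of the wrong length. The sketch below checks plain Python rows. validate_embeddings is a hypothetical helper, and 1024 is the dimension used in this tutorial.

```python
import math
from typing import Iterable, List, Optional, Sequence, Tuple


def validate_embeddings(
    rows: Iterable[Tuple[str, Optional[Sequence[float]]]],
    expected_dim: int = 1024,
) -> List[str]:
    """Return human-readable problems; an empty list means every row passed.

    Each row is a (key, embedding) pair, e.g. (url, vector) collected from
    the videos_source_embeddings table.
    """
    problems = []
    for key, vec in rows:
        if vec is None:
            problems.append(f"{key}: missing embedding")
        elif len(vec) != expected_dim:
            problems.append(f"{key}: dimension {len(vec)} != {expected_dim}")
        elif not all(math.isfinite(x) for x in vec):
            problems.append(f"{key}: contains NaN or infinite values")
    return problems
```

On a small dataset, you could pass it [(r.url, r.embedding) for r in spark.table("videos_source_embeddings").select("url", "embedding").collect()] before triggering a sync.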

Step 5: Implement Similarity Search

The next step is to implement similarity search functionality using your configured Mosaic AI Vector Search index and Twelve Labs Embed API. This will allow you to find videos similar to a given text query by leveraging the power of multimodal embeddings.

First, define a function to get the embedding for a text query using Twelve Labs Embed API:

```python
def get_text_embedding(text_query):
    # Twelve Labs Embed API supports text-to-embedding
    text_embedding = twelvelabs_client.embed.create(
        engine_name="Marengo-retrieval-2.6",
        text=text_query,
        text_truncate="start",
    )
    return text_embedding.text_embedding.float
```

This function takes a text query and returns its embedding using the same model as video embeddings, ensuring compatibility in the vector space.

Next, implement the similarity search function:

```python
from databricks.vector_search.client import VectorSearchClient


def similarity_search(query_text, num_results=5):
    # Look up the index created in Step 4
    index = VectorSearchClient().get_index(
        endpoint_name="twelve_labs_video_endpoint",
        index_name="twelvelabs.default.video_embeddings_index",
    )

    # Embed the query with the same model used for the videos
    query_embedding = get_text_embedding(query_text)
    print(f"Query embedding generated: {len(query_embedding)} dimensions")

    # Perform the similarity search
    results = index.similarity_search(
        query_vector=query_embedding,
        num_results=num_results,
        columns=["id", "url", "title"],
    )
    return results
```

This function takes a text query and the number of results to return. It generates an embedding for the query, and then uses the Mosaic AI Vector Search index to find similar videos.
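Conceptually, similarity_search ranks the stored video embeddings by how close they are to the query embedding. For a handful of videos, you can reproduce that ranking exactly in plain Python, which is handy for sanity-checking index results. This sketch uses cosine similarity. The index's own distance metric and score scale may differ, so compare rankings rather than raw scores. For unit-length vectors, cosine similarity and Euclidean distance produce the same ranking. exact_top_k is a hypothetical helper.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def exact_top_k(
    query: Sequence[float],
    corpus: Dict[str, Sequence[float]],
    k: int = 5,
) -> List[Tuple[str, float]]:
    """Brute-force search: the k (key, similarity) pairs closest to query, best first."""
    scored = ((key, cosine_similarity(query, vec)) for key, vec in corpus.items())
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])
```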

To parse and display the search results, use the following helper function:

```python
def parse_search_results(raw_results):
    # Pair each row in data_array with the column names listed in the manifest
    try:
        data_array = raw_results["result"].get("data_array", [])
        columns = [col["name"] for col in raw_results["manifest"]["columns"]]
        return [dict(zip(columns, row)) for row in data_array]
    except KeyError:
        print("Unexpected result format:", raw_results)
        return []
```

Now, put it all together and perform a sample search:

```python
# Example usage
query = "A dragon"
raw_results = similarity_search(query)

# Parse and print the search results
search_results = parse_search_results(raw_results)
if search_results:
    print(f"Top {len(search_results)} videos similar to the query: '{query}'")
    for i, result in enumerate(search_results, 1):
        print(
            f"{i}. Title: {result.get('title', 'N/A')}, "
            f"URL: {result.get('url', 'N/A')}, "
            f"Similarity Score: {result.get('score', 'N/A')}"
        )
else:
    print("No valid search results returned.")
```

This code uses the similarity_search function to find videos related to the query "A dragon", then parses the results and prints them in a readable format.

Key points to remember:

The get_text_embedding function uses the same Twelve Labs model as the video embeddings, ensuring compatibility.

The similarity_search function combines text-to-embedding conversion with Vector Search to find similar videos.

Error handling is crucial, as network issues or API changes could affect the search process.

The parse_search_results function converts the raw API response into a more usable format.

You can adjust the num_results parameter in the similarity_search function to control the number of results returned.

This implementation enables powerful semantic search capabilities across your video dataset. Users can now find relevant videos using natural language queries, leveraging the rich multimodal embeddings generated by Twelve Labs Embed API.

Step 6: Build a Video Recommendation System

Now, it’s time to create a basic video recommendation system using the multimodal embeddings generated by Twelve Labs Embed API and Databricks Mosaic AI Vector Search. This system will suggest videos similar to a given video based on their embedding similarities.

First, implement a simple recommendation function:

```python
from databricks.vector_search.client import VectorSearchClient
from pyspark.sql.functions import col


def get_video_recommendations(video_id, num_recommendations=5):
    # Look up the index created in Step 4
    index = VectorSearchClient().get_index(
        endpoint_name="twelve_labs_video_endpoint",
        index_name="twelvelabs.default.video_embeddings_index",
    )

    # First, retrieve the embedding for the given video_id
    source_df = spark.table("videos_source_embeddings")
    video_row = source_df.filter(col("id") == video_id).select("embedding").first()
    if video_row is None or video_row["embedding"] is None:
        print(f"No video with an embedding found for id: {video_id}")
        return []

    # Perform similarity search using the video's embedding
    try:
        results = index.similarity_search(
            query_vector=video_row["embedding"],
            num_results=num_recommendations + 1,  # +1 to account for the input video
            columns=["id", "url", "title"],
        )
        recommendations = parse_search_results(results)
        # Remove the input video; cast ids in case they come back as floats
        recommendations = [
            r for r in recommendations if int(r.get("id", -1)) != int(video_id)
        ]
        return recommendations[:num_recommendations]
    except Exception as e:
        print(f"Error during recommendation: {e}")
        return []


# Helper function to display recommendations
def display_recommendations(recommendations):
    if recommendations:
        print(f"Top {len(recommendations)} recommended videos:")
        for i, video in enumerate(recommendations, 1):
            print(f"{i}. Title: {video.get('title', 'N/A')}")
            print(f"   URL: {video.get('url', 'N/A')}")
            print(f"   Similarity Score: {video.get('score', 'N/A')}")
            print()
    else:
        print("No recommendations found.")


# Example usage
video_id = 1  # Assuming this is a valid video ID in your dataset
recommendations = get_video_recommendations(video_id)
display_recommendations(recommendations)
```

This implementation does the following:

The get_video_recommendations function takes a video ID and the number of recommendations to return.

It retrieves the embedding for the given video from the source Delta table.

Using this embedding, it performs a similarity search to find the most similar videos.

The function removes the input video from the results (if present) to avoid recommending the same video.

The display_recommendations helper function formats and prints the recommendations in a user-friendly manner.

To use this recommendation system:

Ensure you have videos in your videos_source_embeddings table with valid embeddings.

Call the get_video_recommendations function with a valid video ID from your dataset.

The function returns a list of recommended videos ranked by similarity, which display_recommendations then prints.

This basic recommendation system demonstrates how to leverage multimodal embeddings for content-based video recommendations. It can be extended and improved in several ways:

Incorporate user preferences and viewing history for personalized recommendations.

Implement diversity mechanisms to ensure varied recommendations.

Add filters based on video metadata (e.g., genre, length, upload date).

Implement caching mechanisms for frequently requested recommendations to improve performance.
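One way to add a diversity mechanism is maximal marginal relevance (MMR). MMR picks candidates greedily, trading off each candidate's similarity to the query video against its similarity to the videos already picked. The sketch below is a generic MMR implementation in plain Python, not a Databricks or Twelve Labs feature. It assumes you already have embeddings for the candidate videos, for example by reading them from the videos_source_embeddings table. The lambda_ weight of 0.7 is an arbitrary starting point, and mmr_rerank is a hypothetical name.

```python
import math
from typing import Dict, List, Sequence


def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def mmr_rerank(
    query: Sequence[float],
    candidates: Dict[str, Sequence[float]],
    k: int = 5,
    lambda_: float = 0.7,
) -> List[str]:
    """Greedy maximal marginal relevance.

    At each step, pick the candidate c maximizing
        lambda_ * sim(query, c) - (1 - lambda_) * max(sim(c, s) for s in selected)
    lambda_ = 1.0 reduces to plain similarity ranking; lower values favor diversity.
    """
    relevance = {cid: _cosine(query, vec) for cid, vec in candidates.items()}
    selected: List[str] = []
    remaining = set(candidates)
    while remaining and len(selected) < k:
        def mmr_score(cid: str) -> float:
            redundancy = max(
                (_cosine(candidates[cid], candidates[s]) for s in selected),
                default=0.0,
            )
            return lambda_ * relevance[cid] - (1 - lambda_) * redundancy

        # Iterate in sorted order so ties are broken deterministically
        best = max(sorted(remaining), key=mmr_score)
        selected.append(best)
        remaining.remove(best)
    return selected
```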

Remember that the quality of recommendations depends on the size and diversity of your video dataset, as well as the accuracy of the embeddings generated by Twelve Labs Embed API. As you add more videos to your system, the recommendations should become more relevant and diverse.

Take this Integration to the Next Level

Update and Sync the Index

As your video library grows and evolves, it's crucial to keep your Vector Search index up-to-date. Mosaic AI Vector Search offers seamless synchronization with your source Delta table, ensuring that recommendations and search results always reflect the latest data.

Key considerations for index updates and synchronization:

Incremental updates: Leverage Delta Lake's change data feed to efficiently update only the modified or new records in your index.

Scheduled syncs: Implement regular synchronization jobs using Databricks workflow orchestration tools to maintain index freshness.

Real-time updates: For time-sensitive applications, consider a continuous sync pipeline (pipeline_type="CONTINUOUS"), which updates the index within seconds of changes to the source Delta table.

Version management: Use Delta Lake's time travel on the source table to keep earlier versions of your embeddings. You can then roll back the table, and re-sync the index, if needed.

Monitoring sync status: Implement logging and alerting mechanisms to track successful syncs and quickly identify any issues in the update process.

By mastering these techniques, you'll ensure that your Twelve Labs video embeddings are always current and readily available for advanced search and recommendation use cases.

Optimize Performance and Scaling

As your video analysis pipeline grows, it is important to continue optimizing performance and scaling your solution. Distributed computing capabilities from Databricks, combined with efficient embedding generation from Twelve Labs, provide a robust foundation for handling large-scale video processing tasks.

Consider these strategies for optimizing and scaling your solution:

Distributed processing: Leverage Databricks Spark clusters to parallelize embedding generation and indexing tasks across multiple nodes.

Caching strategies: Implement intelligent caching mechanisms for frequently accessed embeddings to reduce API calls and improve response times.

Batch processing: For large video libraries, implement batch processing workflows to generate embeddings and update indexes during off-peak hours.

Query optimization: Fine-tune Vector Search queries by adjusting parameters like num_results and implementing efficient filtering techniques.

Index partitioning: For massive datasets, explore index partitioning strategies to improve query performance and enable more granular updates.

Auto-scaling: Utilize Databricks auto-scaling features to dynamically adjust computational resources based on workload demands.

Edge computing: For latency-sensitive applications, consider deploying lightweight versions of your models closer to the data source.
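For the caching strategy above, a simple first step is to memoize text-query embeddings, so that repeated searches for the same query do not call the Embed API again. The sketch below uses functools.lru_cache from the Python standard library. It takes the embedding function as a parameter, so you could pass the get_text_embedding function from Step 5. make_cached_embedder is a hypothetical name. An in-process cache like this lasts only for the current notebook session, so sharing embeddings across clusters would need a persistent store.

```python
from functools import lru_cache
from typing import Callable, List, Tuple


def make_cached_embedder(
    embed_fn: Callable[[str], List[float]],
    maxsize: int = 1024,
) -> Callable[[str], List[float]]:
    """Wrap embed_fn with an LRU cache keyed on whitespace-normalized query text."""

    @lru_cache(maxsize=maxsize)
    def _cached(normalized_query: str) -> Tuple[float, ...]:
        # Store an immutable tuple so cached vectors cannot be modified
        return tuple(embed_fn(normalized_query))

    def embed(query: str) -> List[float]:
        # Collapse runs of whitespace so "A dragon" and "  A   dragon " share one entry
        normalized = " ".join(query.split())
        # Return a fresh list each call; callers may mutate it safely
        return list(_cached(normalized))

    embed.cache_info = _cached.cache_info  # expose hit/miss statistics
    return embed
```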

By implementing these optimization techniques, you'll be well-equipped to handle growing video libraries and increasing user demands while maintaining high performance and cost efficiency.

Monitoring and Analytics

Implementing robust monitoring and analytics is essential to ensuring the ongoing success of your video understanding pipeline. Databricks provides powerful tools for tracking system performance, user engagement, and business impact.

Key areas to focus on for monitoring and analytics:

Performance metrics: Track key performance indicators such as query latency, embedding generation time, and index update duration.

Usage analytics: Monitor user interactions, popular search queries, and frequently recommended videos to gain insights into user behavior.

Quality assessment: Implement feedback loops to evaluate the relevance of search results and recommendations, using both automated metrics and user feedback.

Resource utilization: Keep an eye on computational resource usage, API call volumes, and storage consumption to optimize costs and performance.

Error tracking: Set up comprehensive error logging and alerting to quickly identify and resolve issues in the pipeline.

A/B testing: Utilize experimentation capabilities from Databricks to test different embedding models, search algorithms, or recommendation strategies.

Business impact analysis: Correlate video understanding capabilities with key business metrics like user engagement, content consumption, or conversion rates.

Compliance monitoring: Ensure your video processing pipeline adheres to data privacy regulations and content moderation guidelines.
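For quality assessment, two standard automated retrieval metrics are recall@k and mean reciprocal rank (MRR). Both are computed against a small set of queries whose relevant videos you have labeled by hand. The sketch below is plain Python. The labeled-query format is our assumption. The ranked id lists could come from parse_search_results; convert ids to one consistent type (here, strings) in both results and labels.

```python
from typing import Dict, List, Sequence, Set


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant items that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(ranked[:k]) & relevant) / len(relevant)


def reciprocal_rank(ranked: Sequence[str], relevant: Set[str]) -> float:
    """1 / (rank of the first relevant result), or 0.0 if none was returned."""
    for rank, item in enumerate(ranked, start=1):
        if item in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(
    results: Dict[str, List[str]],
    labels: Dict[str, Set[str]],
    k: int = 5,
) -> Dict[str, float]:
    """Average recall@k and MRR over every labeled query.

    results maps each query to its ranked list of video ids; labels maps
    each query to the set of ids a reviewer judged relevant.
    """
    queries = list(labels)
    if not queries:
        return {f"recall@{k}": 0.0, "mrr": 0.0}
    recall = sum(recall_at_k(results.get(q, []), labels[q], k) for q in queries)
    mrr = sum(reciprocal_rank(results.get(q, []), labels[q]) for q in queries)
    return {f"recall@{k}": recall / len(queries), "mrr": mrr / len(queries)}
```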

By implementing a comprehensive monitoring and analytics strategy, you'll gain valuable insights into your video understanding pipeline's performance and impact. This data-driven approach will enable continuous improvement and help you demonstrate the value of integrating advanced video understanding capabilities from Twelve Labs with the Databricks Data Intelligence Platform.

Conclusion

Twelve Labs and Databricks Mosaic AI provide a robust framework for advanced video understanding and analysis. This integration leverages multimodal embeddings and efficient Vector Search capabilities, enabling developers to construct sophisticated video search, recommendation, and analysis systems.

This tutorial has walked through the technical steps of setting up the environment, generating embeddings, configuring Vector Search, and implementing basic search and recommendation functionalities. It also addresses key considerations for scaling, optimizing, and monitoring your solution.

In the evolving landscape of video content, the ability to extract precise insights from this medium is critical. This integration equips developers with the tools to address complex video understanding tasks. We encourage you to explore the technical capabilities, experiment with advanced use cases, and contribute to the community of AI engineers advancing video understanding technology.

Additional Resources

To further explore and leverage this integration, consider the following resources:

These resources will help you stay at the forefront of video AI technology and continue to build innovative solutions using Twelve Labs and Databricks.

Short Summary

Twelve Labs Embed API enables developers to get multimodal embeddings that power advanced video understanding use cases, from semantic video search and data curation to content recommendation and video RAG systems.

With Twelve Labs, contextual vector representations can be generated that capture the relationship between visual expressions, body language, spoken words, and overall context within videos. Databricks Mosaic AI Vector Search provides a robust, scalable infrastructure for indexing and querying high-dimensional vectors.

This blog post will guide you through harnessing these complementary technologies to unlock new possibilities in video AI applications.

Big thanks to Nina Williams, Austin Zaccor, Fernanda Heredia, and Emily Hutson from Databricks for collaborating with us on this tutorial!

Why Twelve Labs + Databricks Mosaic AI?

Integrating Twelve Labs Embed API with Databricks Mosaic AI Vector Search addresses key challenges in video AI, such as efficient processing of large-scale video datasets and accurate multimodal content representation. This integration reduces development time and resource needs for advanced video applications, enabling complex queries across vast video libraries and enhancing overall workflow efficiency.

The unified approach to handling multimodal data is particularly noteworthy. Instead of juggling separate models for text, image, and audio analysis, users can now work with a single, coherent representation that captures the essence of video content in its entirety. This not only simplifies deployment architecture but also enables more nuanced and context-aware applications, from sophisticated content recommendation systems to advanced video search engines and automated content moderation tools.

Moreover, this integration extends the capabilities of the Databricks ecosystem, allowing seamless incorporation of video understanding into existing data pipelines and machine learning workflows. Whether companies are developing real-time video analytics, building large-scale content classification systems, or exploring novel applications in Generative AI, this combined solution provides a powerful foundation. It pushes the boundaries of what's possible in video AI, opening up new avenues for innovation and problem-solving in industries ranging from media and entertainment to security and healthcare.

Understanding Twelve Labs' Embed API

Twelve Labs' Embed API represents a significant advancement in multimodal embedding technology, specifically designed for video content. Unlike traditional approaches that rely on frame-by-frame analysis or separate models for different modalities, this API generates contextual vector representations that capture the intricate interplay of visual expressions, body language, spoken words, and overall context within videos. It is powered by our state-of-the-art multimodal foundation model Marengo-2.6.

The Embed API offers several key features that make it particularly powerful for AI engineers working with video data. First, it provides flexibility for any modality present in videos, eliminating the need for separate text-only or image-only models. Second, it employs a video-native approach that accounts for motion, action, and temporal information, ensuring a more accurate and temporally coherent interpretation of video content. Lastly, it creates a unified vector space that integrates embeddings from all modalities, facilitating a more holistic understanding of the video content.

For AI engineers, the Embed API opens up new possibilities in video understanding tasks. It enables more sophisticated content analysis, improved semantic search capabilities, and enhanced recommendation systems. The API's ability to capture subtle cues and interactions between different modalities over time makes it particularly valuable for applications requiring a nuanced understanding of video content, such as emotion recognition, context-aware content moderation, and advanced video retrieval systems.

Prerequisites

Before integrating Twelve Labs Embed API with Databricks Mosaic AI Vector Search, be sure you have the following prerequisites:

A Databricks account with access to create and manage workspaces. (You can sign up for a free trial at https://databricks.com/try-databricks)

Familiarity with Python programming and basic data science concepts.

A Twelve Labs API key. (Sign up at playground.twelvelabs.io)

Basic understanding of vector embeddings and similarity search concepts.

(Optional) An AWS account if using Databricks on AWS. This is not required if using Databricks on Azure or Google Cloud.

Note: The Embed API is currently in private beta but any user can request access by simply filling this form. Usually within a few hours, you will receive a confirmation email that you can now start using the Embed API.

Step 1: Set Up the Environment

To begin, set up the Databricks environment and install the necessary libraries:

1 - Create a new Databricks workspace:

Log in to your Databricks account at https://accounts.cloud.databricks.com/

Follow the steps outlined in the Databricks documentation to create a new workspace: https://docs.databricks.com/en/getting-started/index.html

2 - Create a new cluster or connect to an existing cluster:

Almost any ML cluster will work for this application. The below settings are provided for those seeking optimal price performance.

In your Compute tab, click “Create compute”

Select “Single node” and Runtime: 14.3 LTS ML non-GPU

The cluster policy and access mode can be left as the default

Select “r6i.xlarge” as the Node type

This will maximize memory utilization while only costing $0.252/hr on AWS and 1.02 DBU/hr on Databricks before any discounting

It was also one of the fastest options we tested

All other options can be left as the default

Click “Create compute” at the bottom and return to your workspace

3 - Create a new notebook in your Databricks workspace:

In your workspace, click "Create" and select "Notebook"

Name your notebook (e.g., "TwelveLabs_MosaicAI_VectorSearch_Integration")

Choose Python as the default language

4 - Install the Twelve Labs and Mosaic AI Vector Search SDKs:

In the first cell of your notebook, run the following command:

%pip install twelvelabs databricks-vectorsearch

5 - Set up Twelve Labs authentication:

In the next cell, add the following code:

from twelvelabs import TwelveLabs import os # Retrieve the API key from Databricks secrets (recommended) # You'll need to set up the secret scope and add your API key first TWELVE_LABS_API_KEY = dbutils.secrets.get(scope="your-scope", key="twelvelabs-api-key") if TWELVE_LABS_API_KEY is None: raise ValueError("TWELVE_LABS_API_KEY environment variable is not set") # Initialize the Twelve Labs client twelvelabs_client = TwelveLabs(api_key=TWELVE_LABS_API_KEY)

Note: For enhanced security, it's recommended to use Databricks secrets to store your API key rather than hardcoding it or using environment variables.

Step 2: Generate Multimodal Embeddings

Use the generate_embedding helper below to generate multimodal embeddings with Twelve Labs Embed API. It is wrapped in a pandas user-defined function (UDF), get_video_embeddings, so that it works efficiently with Spark DataFrames in Databricks. generate_embedding encapsulates the process of creating an embedding task, waiting for it to complete, and retrieving the results.

Inside the UDF, a process_url function takes a video URL string, calls generate_embedding, and returns the first segment's embedding as an array<float> (or None if no embeddings are returned).

Here's how to implement and use it:

Define the UDF:

```python
from pyspark.sql.functions import pandas_udf
from pyspark.sql.types import ArrayType, FloatType
from twelvelabs import TwelveLabs
import pandas as pd

@pandas_udf(ArrayType(FloatType()))
def get_video_embeddings(urls: pd.Series) -> pd.Series:
    def generate_embedding(video_url):
        # Create an embedding task for the video and block until it finishes
        twelvelabs_client = TwelveLabs(api_key=TWELVE_LABS_API_KEY)
        task = twelvelabs_client.embed.task.create(
            engine_name="Marengo-retrieval-2.6",
            video_url=video_url
        )
        task.wait_for_done()
        task_result = twelvelabs_client.embed.task.retrieve(task.id)

        # Collect every segment embedding along with its time offsets
        embeddings = []
        for v in task_result.video_embeddings:
            embeddings.append({
                'embedding': v.embedding.float,
                'start_offset_sec': v.start_offset_sec,
                'end_offset_sec': v.end_offset_sec,
                'embedding_scope': v.embedding_scope
            })
        return embeddings

    def process_url(url):
        # Keep only the first segment's embedding (None if nothing came back)
        embeddings = generate_embedding(url)
        return embeddings[0]['embedding'] if embeddings else None

    return urls.apply(process_url)
```

Create a sample DataFrame with video URLs:

```python
video_urls = [
    "https://example.com/video1.mp4",
    "https://example.com/video2.mp4",
    "https://example.com/video3.mp4"
]
df = spark.createDataFrame([(url,) for url in video_urls], ["video_url"])
```

Apply the UDF to generate embeddings:

```python
df_with_embeddings = df.withColumn("embedding", get_video_embeddings(df.video_url))
```

Display the results:

```python
df_with_embeddings.show(truncate=False)
```

This process generates an embedding for each video URL in the DataFrame. Because the embeddings are multimodal, they capture visual, audio, and textual information from the video content. Note that process_url keeps only the first segment's embedding for each video.
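process_url keeps only the first segment embedding that the task returns. If you want a single vector that reflects the whole video, one option is to average all segment embeddings (mean pooling). This is our own assumption, not something the Twelve Labs API does for you. The mean_pool_segments helper below is hypothetical. It takes a list of dicts shaped like generate_embedding's output. The toy vectors are 3-dimensional for readability; real Marengo embeddings have 1024 dimensions.

```python
from typing import Dict, List, Optional

def mean_pool_segments(segments: List[Dict]) -> Optional[List[float]]:
    """Average segment embeddings into one video-level vector.

    Hypothetical helper: expects dicts with an 'embedding' key, like the
    output of generate_embedding. Returns None when there are no segments.
    """
    if not segments:
        return None
    dim = len(segments[0]["embedding"])
    totals = [0.0] * dim
    for seg in segments:
        vec = seg["embedding"]
        if len(vec) != dim:
            raise ValueError("All segment embeddings must have the same dimension")
        for i, x in enumerate(vec):
            totals[i] += x
    return [t / len(segments) for t in totals]

# Toy segments in the same shape generate_embedding returns
segments = [
    {"embedding": [1.0, 0.0, 2.0], "start_offset_sec": 0.0, "end_offset_sec": 6.0},
    {"embedding": [3.0, 2.0, 0.0], "start_offset_sec": 6.0, "end_offset_sec": 12.0},
]
print(mean_pool_segments(segments))  # [2.0, 1.0, 1.0]
```

Inside the UDF, process_url could return mean_pool_segments(embeddings) instead of embeddings[0]['embedding']. Averaging can blur videos with very different scenes, so whether it beats keeping individual segments depends on your use case.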

Remember that generating embeddings can be computationally intensive and time-consuming for large video datasets. Consider implementing batching or distributed processing strategies for production-scale applications. Additionally, ensure that you have appropriate error handling and logging in place to manage potential API failures or network issues.
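One way to add the error handling mentioned above is to retry transient failures with exponential backoff. with_retries below is a hypothetical helper, not part of the Twelve Labs SDK. In the notebook you might wrap the task creation call inside generate_embedding, e.g. with_retries(lambda: twelvelabs_client.embed.task.create(...)). The sleep parameter is injectable so the demo runs instantly.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")

def with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay_s: float = 1.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(); on a listed exception, wait with exponential backoff and try again."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on as exc:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # base, 2x base, 4x base, ... scaled by a random factor in [1, 2) (jitter)
            delay = base_delay_s * (2 ** (attempt - 1)) * (1 + random.random())
            print(f"Attempt {attempt} failed ({exc!r}); retrying in {delay:.1f}s")
            sleep(delay)

# Demo: a flaky call that fails twice, then succeeds (sleep is a no-op here)
calls = {"n": 0}

def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient network error")
    return "ok"

print(with_retries(flaky, max_attempts=3, sleep=lambda s: None))
```

The default retry_on=(Exception,) also retries errors that will never succeed, such as an invalid API key. Narrowing it to the exception types your client raises for network or rate-limit failures is usually a better choice.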

Step 3: Create a Delta Table for Video Embeddings

Now, create a source Delta Table to store video metadata and the embeddings generated by Twelve Labs Embed API. This table will serve as the foundation for a Vector Search index in Databricks Mosaic AI Vector Search.

First, create a source DataFrame with video URLs and metadata:

```python
from pyspark.sql import Row

# Create a list of sample video URLs and metadata
video_data = [
    Row(url='http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/ElephantsDream.mp4', title='Elephant Dream'),
    Row(url='http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/Sintel.mp4', title='Sintel'),
    Row(url='http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/BigBuckBunny.mp4', title='Big Buck Bunny')
]

# Create a DataFrame from the list
source_df = spark.createDataFrame(video_data)
source_df.show()
```

Next, declare the schema for the Delta table using SQL:

```sql
%sql
CREATE TABLE IF NOT EXISTS videos_source_embeddings (
  id BIGINT GENERATED BY DEFAULT AS IDENTITY,
  url STRING,
  title STRING,
  embedding ARRAY<FLOAT>
) TBLPROPERTIES (delta.enableChangeDataFeed = true)
```

Note that Change Data Feed has been enabled on the table, which is crucial for creating and maintaining the Vector Search index.

Now, generate embeddings for your videos using the get_video_embeddings function defined earlier:

```python
embeddings_df = source_df.withColumn("embedding", get_video_embeddings("url"))
```

This step may take some time, depending on the number and length of your videos.

With your embeddings generated, now you can write the data to your Delta Table:

```python
embeddings_df.write.mode("append").saveAsTable("videos_source_embeddings")
```

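Finally, verify your data by reading it back from the Delta table. Displaying embeddings_df directly would re-run the UDF, because the DataFrame is not cached, and call the Embed API a second time:

```python
display(spark.table("videos_source_embeddings"))
```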

This step creates the foundation for Vector Search. In the next step, a Delta Sync Index is built on top of this table. Updates or additions to your video dataset can then be propagated to the index and reflected in your search results.

Some key points to remember:

The id column is auto-generated, providing a unique identifier for each video.

The embedding column stores the high-dimensional vector representation of each video, generated by Twelve Labs Embed API.

Enabling Change Data Feed allows Databricks to efficiently track changes in the table, which is crucial for maintaining an up-to-date Vector Search index.

Step 4: Configure Mosaic AI Vector Search

In this step, set up Databricks Mosaic AI Vector Search to work with video embeddings. This involves creating a Vector Search endpoint and a Delta Sync Index that will automatically stay in sync with your videos_source_embeddings Delta table.

First, create a Vector Search endpoint:

```python
from databricks.vector_search.client import VectorSearchClient

# Initialize the Vector Search client and name the endpoint
mosaic_client = VectorSearchClient()
endpoint_name = "twelve_labs_video_endpoint"

# Delete the existing endpoint if it exists
# (caution: this removes any existing endpoint with this name)
try:
    mosaic_client.delete_endpoint(endpoint_name)
    print(f"Deleted existing endpoint: {endpoint_name}")
except Exception:
    pass  # Ignore non-existing endpoints

# Create the new endpoint
endpoint = mosaic_client.create_endpoint(
    name=endpoint_name,
    endpoint_type="STANDARD"
)
```

This code creates a new Vector Search endpoint or replaces an existing one with the same name. The endpoint will serve as the access point for your Vector Search operations.

Next, create a Delta Sync Index that will automatically stay in sync with your videos_source_embeddings Delta table:

```python
# Define the source table name and index name.
# These are fully qualified Unity Catalog names (catalog.schema.name);
# adjust the catalog and schema to where you created videos_source_embeddings.
source_table_name = "twelvelabs.default.videos_source_embeddings"
index_name = "twelvelabs.default.video_embeddings_index"

index = mosaic_client.create_delta_sync_index(
    endpoint_name="twelve_labs_video_endpoint",
    source_table_name=source_table_name,
    index_name=index_name,
    primary_key="id",
    embedding_dimension=1024,
    embedding_vector_column="embedding",
    pipeline_type="TRIGGERED"
)
print(f"Created index: {index.name}")
```

This code creates a Delta Sync Index that links to your source Delta table. With pipeline_type="TRIGGERED", the index is updated only when you trigger a sync. If you want the index to update automatically within seconds of changes to the source table (keeping your Vector Search results up to date), set pipeline_type="CONTINUOUS" instead.

To check the status of the index and manually trigger a sync, use the following code:

```python
# Check the status of the index; the initial build may take some time
index_status = mosaic_client.get_index(
    endpoint_name="twelve_labs_video_endpoint",
    index_name="twelvelabs.default.video_embeddings_index"
).describe()
print(f"Index status: {index_status}")

# Manually trigger the index sync (applies to TRIGGERED pipelines)
try:
    index.sync()
    print("Index sync triggered successfully.")
except Exception as e:
    print(f"Error triggering index sync: {str(e)}")
```

This code allows you to check the status of your index and manually trigger a sync if needed. In production, you may prefer to set the pipeline to sync automatically based on changes to the source Delta table.
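Index creation and syncing run asynchronously. A query issued right afterward may fail or see incomplete data while the index is still building. A minimal polling sketch follows: wait_for is a hypothetical helper that calls an is_ready check until it returns True or a timeout passes. The check in the commented usage assumes index.describe() returns a dict whose status section contains a ready flag. Verify that shape against your databricks-vectorsearch version before relying on it.

```python
import time
from typing import Callable

def wait_for(
    is_ready: Callable[[], bool],
    timeout_s: float = 1800.0,
    interval_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Poll is_ready() every interval_s seconds.

    Returns True as soon as is_ready() is True, or False once timeout_s has elapsed.
    """
    deadline = clock() + timeout_s
    while True:
        if is_ready():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)

# Possible use in the notebook (assumption: describe() exposes status.ready):
# ready = wait_for(lambda: index.describe().get("status", {}).get("ready", False))
# print("Index ready" if ready else "Timed out waiting for the index")
```

Passing sleep and clock as parameters keeps the helper easy to test without waiting in real time.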

Key points to remember:

The Vector Search endpoint serves as the access point for Vector Search operations.

The Delta Sync Index automatically stays in sync with the source Delta table, ensuring up-to-date search results.

The embedding_dimension should match the dimension of the embeddings generated by Twelve Labs Embed API (1024).

The primary_key is set to "id", which should correspond to the unique identifier in the source table.

The embedding_vector_column is set to "embedding", which should match the name of the source table column that contains the video embeddings.
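Because the index is created with embedding_dimension=1024, it can be worth checking the stored vectors before syncing. process_url can also return None when no embeddings come back. find_bad_embeddings is a hypothetical helper that takes (key, embedding) pairs. In the notebook, a small table like this one could supply them via something like [(r.url, r.embedding) for r in spark.table("videos_source_embeddings").select("url", "embedding").collect()]. The toy rows below are made up.

```python
from typing import Iterable, List, Optional, Sequence, Tuple

EXPECTED_DIM = 1024  # must equal embedding_dimension passed to create_delta_sync_index

def find_bad_embeddings(
    rows: Iterable[Tuple[str, Optional[Sequence[float]]]],
    expected_dim: int = EXPECTED_DIM,
) -> List[Tuple[str, str]]:
    """Return (key, reason) for each row whose embedding is missing or has the wrong length."""
    problems = []
    for key, emb in rows:
        if emb is None:
            problems.append((key, "missing embedding"))
        elif len(emb) != expected_dim:
            problems.append((key, f"expected {expected_dim} dims, got {len(emb)}"))
    return problems

# Toy rows: (url, embedding)
rows = [
    ("video1.mp4", [0.0] * 1024),
    ("video2.mp4", None),         # e.g. process_url returned None
    ("video3.mp4", [0.0] * 512),
]
print(find_bad_embeddings(rows))
```

Calling collect() pulls every row to the driver, so for large tables the same check is better expressed as a Spark filter on size(embedding).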

Step 5: Implement Similarity Search

The next step is to implement similarity search functionality using your configured Mosaic AI Vector Search index and Twelve Labs Embed API. This will allow you to find videos similar to a given text query by leveraging the power of multimodal embeddings.

First, define a function to get the embedding for a text query using Twelve Labs Embed API:

```python
def get_text_embedding(text_query):
    # Twelve Labs Embed API supports text-to-embedding
    text_embedding = twelvelabs_client.embed.create(
        engine_name="Marengo-retrieval-2.6",
        text=text_query,
        text_truncate="start"
    )
    return text_embedding.text_embedding.float
```

This function takes a text query and returns its embedding using the same model as video embeddings, ensuring compatibility in the vector space.

Next, implement the similarity search function:

```python
def similarity_search(query_text, num_results=5):
    # Embed the text query in the same vector space as the video embeddings
    query_embedding = get_text_embedding(query_text)
    print(f"Query embedding generated: {len(query_embedding)} dimensions")

    # Perform the similarity search against the Delta Sync Index from Step 4
    results = index.similarity_search(
        query_vector=query_embedding,
        num_results=num_results,
        columns=["id", "url", "title"]
    )
    return results
```

This function takes a text query and the number of results to return. It generates an embedding for the query, and then uses the Mosaic AI Vector Search index to find similar videos.
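The idea behind similarity search can be shown as a mental model: embed the query, compare it with every stored vector, and keep the k closest. This is not how Mosaic AI Vector Search computes results internally; it uses an approximate nearest-neighbor index and its own distance-based score. The sketch below is an exact, brute-force version using cosine similarity. The titles reuse the sample videos, but the 3-dimensional vectors are made up. A version like this can be handy for sanity-checking rankings on a small dataset.

```python
import math
from typing import Dict, List, Sequence, Tuple

def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between a and b (0.0 if either vector is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def brute_force_top_k(
    query: Sequence[float], catalog: Dict[str, Sequence[float]], k: int = 5
) -> List[Tuple[str, float]]:
    """Score every item against the query and return the k most similar (exact search)."""
    scored = [(title, cosine_similarity(query, vec)) for title, vec in catalog.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Made-up toy vectors; real embeddings have 1024 dimensions
catalog = {
    "Sintel": [0.9, 0.1, 0.0],
    "Big Buck Bunny": [0.1, 0.9, 0.0],
    "Elephant Dream": [0.0, 0.2, 0.9],
}
query = [1.0, 0.0, 0.0]
for title, score in brute_force_top_k(query, catalog, k=2):
    print(f"{title}: {score:.3f}")
```

Brute force costs one comparison per stored vector for every query. That is why a dedicated index is used once the collection grows.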

To parse and display the search results, use the following helper function:

```python
def parse_search_results(raw_results):
    try:
        # Pair each row in data_array with the column names from the manifest
        data_array = raw_results['result']['data_array']
        columns = [col['name'] for col in raw_results['manifest']['columns']]
        return [dict(zip(columns, row)) for row in data_array]
    except KeyError:
        print("Unexpected result format:", raw_results)
        return []
```

Now, put it all together and perform a sample search:

```python
# Example usage
query = "A dragon"
raw_results = similarity_search(query)

# Parse and print the search results
search_results = parse_search_results(raw_results)
if search_results:
    print(f"Top {len(search_results)} videos similar to the query: '{query}'")
    for i, result in enumerate(search_results, 1):
        print(f"{i}. Title: {result.get('title', 'N/A')}, URL: {result.get('url', 'N/A')}, Similarity Score: {result.get('score', 'N/A')}")
else:
    print("No valid search results returned.")
```

This code uses the similarity_search function to find videos related to the query "A dragon", then parses and displays the results in a readable format.

Key points to remember:

The get_text_embedding function uses the same Twelve Labs model as the video embeddings, ensuring compatibility.

The similarity_search function combines text-to-embedding conversion with Vector Search to find similar videos.

Error handling is crucial, as network issues or API changes could affect the search process.

The parse_search_results function converts the raw API response into a more usable format.

You can adjust the num_results parameter in the similarity_search function to control the number of results returned.
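A top-k search always returns up to num_results neighbors, even when some of them are weak matches. One simple option is to post-filter the parsed results by score. filter_by_score is a hypothetical helper that operates on the list of dicts produced by parse_search_results. The scores and the 0.5 threshold below are made up. A real threshold would need tuning on your own data, since score ranges depend on the index's scoring.

```python
from typing import Dict, List

def filter_by_score(results: List[Dict], min_score: float) -> List[Dict]:
    """Keep parsed search results whose 'score' is at least min_score.

    Results without a numeric 'score' field are dropped.
    """
    kept = []
    for r in results:
        score = r.get("score")
        if isinstance(score, (int, float)) and score >= min_score:
            kept.append(r)
    return kept

# Made-up parsed results in the shape parse_search_results returns
parsed = [
    {"id": 2, "title": "Sintel", "score": 0.71},
    {"id": 3, "title": "Big Buck Bunny", "score": 0.42},
    {"id": 1, "title": "Elephant Dream"},  # no score column
]
print([r["title"] for r in filter_by_score(parsed, min_score=0.5)])  # ['Sintel']
```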

This implementation enables powerful semantic search capabilities across your video dataset. Users can now find relevant videos using natural language queries, leveraging the rich multimodal embeddings generated by Twelve Labs Embed API.

Step 6: Build a Video Recommendation System

Now, it’s time to create a basic video recommendation system using the multimodal embeddings generated by Twelve Labs Embed API and Databricks Mosaic AI Vector Search. This system will suggest videos similar to a given video based on their embedding similarities.

First, implement a simple recommendation function:

```python
def get_video_recommendations(video_id, num_recommendations=5):
    # First, retrieve the embedding for the given video_id from the source table
    source_df = spark.table("videos_source_embeddings")
    video_embedding = source_df.filter(f"id = {video_id}").select("embedding").first()
    if not video_embedding:
        print(f"No video found with id: {video_id}")
        return []

    # Perform similarity search using the video's embedding
    try:
        results = index.similarity_search(
            query_vector=video_embedding["embedding"],
            num_results=num_recommendations + 1,  # +1 to account for the input video
            columns=["id", "url", "title"]
        )
        # Parse the results
        recommendations = parse_search_results(results)
        # Remove the input video from recommendations if present
        recommendations = [r for r in recommendations if r.get('id') != video_id]
        return recommendations[:num_recommendations]
    except Exception as e:
        print(f"Error during recommendation: {e}")
        return []

# Helper function to display recommendations
def display_recommendations(recommendations):
    if recommendations:
        print(f"Top {len(recommendations)} recommended videos:")
        for i, video in enumerate(recommendations, 1):
            print(f"{i}. Title: {video.get('title', 'N/A')}")
            print(f"   URL: {video.get('url', 'N/A')}")
            print(f"   Similarity Score: {video.get('score', 'N/A')}")
            print()
    else:
        print("No recommendations found.")

# Example usage
video_id = 1  # Assuming this is a valid video ID in your dataset
recommendations = get_video_recommendations(video_id)
display_recommendations(recommendations)
```

This implementation does the following:

The