
How to find specific moments within your video using Twelve Labs simple search API

Ankit Khare


Apr 7, 2023


Recently, Twelve Labs was featured as a pioneer in multimodal AI at GTC 2023. While watching the GTC 2023 video, I saw our co-founder, Soyoung, pulling her hair out trying to find the segment where our company was featured. That experience motivated me to tackle the challenge of searching within videos using our very own APIs. So here we are with the tutorial from my fun-filled weekend project, where I'll guide you through finding specific moments in your videos using the Twelve Labs search API.

A simple blueprint to make searching within videos better😎

Introduction

Twelve Labs provides a multimodal foundation model in the form of a suite of APIs, designed to help you build applications that leverage the power of video understanding. In this blog post, we'll explore how you can use the Twelve Labs API to find specific moments of interest in your video with natural language queries. I'll upload an entertaining video from my local drive that I put together during my graduate school days, titled "Machine Learning is Everywhere." True to its name, this 80-second video illustrates the ubiquity of ML in all aspects of life. It highlights ML applications such as a professional ping pong player competing against a Kuka robot, a guy performing a skateboard trick with ML being used to summarize the event, and more. With the help of the Twelve Labs API, I'll demo how you can find a specific scene within a video using simple natural language queries.

In this tutorial, I aim to provide a gentle introduction to the simple search API, so I've kept it minimalistic by focusing on searching for moments within a single video and creating a demo app using the lightweight and user-friendly Flask framework. However, the platform is more than capable of scaling to accommodate uploading hundreds or even thousands of videos and finding specific moments within them. Let's dive in and gear up for some serious fun!

Quick overview

Prerequisites: Sign up to use the Twelve Labs API suite and install the packages needed to create the demo application

Video Upload: Got some awesome videos? Send them over to the Twelve Labs platform, and watch how it efficiently indexes them, making your search experience a breeze!

Semantic Video Search: Hunt for those memorable moments in your videos with simple natural language queries

Crafting a Demo App: Write a nifty Python script that uses Flask to render your HTML template, then design a sleek HTML page to display your search results

💡 By the way, if you're reading this post and you're not a developer, fear not! I've included a link to a ready-made Jupyter Notebook, allowing you to run the entire process and obtain the results. Additionally, check out our Playground to experience the power of semantic video search without writing a single line of code. Reach out to me if you need free credits😄.

Prerequisites

In this tutorial, we'll be using a Jupyter Notebook. I'm assuming you've already set up Jupyter, Python, and pip on your local computer. If you run into any issues, please come and holla at me for help on our Discord server, where we have the quickest response times 🚅🏎️⚡️. If Discord isn't your thing, you can also reach out to me via email. After creating a Twelve Labs account, you can access the API Dashboard and obtain your API key. This demo uses an existing account. To make API calls, you only need your secret key and the API URL, and you can pass both to your application as environment variables:

<pre><code class="bash">%env API_KEY=<your_API_key>
%env API_URL=https://api.twelvelabs.io/v1.1
</code></pre>

Installing the dependencies:

<pre><code class="bash">%pip install requests flask
</code></pre>

Video upload

In our first step, I will show you how I uploaded a video from my local computer to the Twelve Labs platform to leverage its video understanding capabilities.

Imports:

<pre><code class="python">import os
import requests
from pprint import pprint
</code></pre>

Retrieve the URL of the API and my API key as follows:

<pre><code class="python">API_URL = os.getenv("API_URL")
assert API_URL
</code></pre>

<pre><code class="python">API_KEY = os.getenv("API_KEY")
assert API_KEY
</code></pre>
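The `assert` checks above stop the notebook with a bare `AssertionError` if a variable is missing. As an optional alternative, here is a minimal sketch of a loader that reads the same two environment variables and fails with a readable message. `load_config` is a hypothetical helper name, not part of the Twelve Labs API, and stripping a trailing slash is my own precaution so that URLs built like `f"{API_URL}/indexes"` never contain `//`.

```python
import os


def load_config(env=None):
    """Read API_URL and API_KEY from the environment (hypothetical helper).

    Raises a descriptive error instead of a bare AssertionError when a
    variable is missing or empty.
    """
    if env is None:
        env = os.environ
    missing = [name for name in ("API_URL", "API_KEY") if not env.get(name)]
    if missing:
        raise RuntimeError(
            "Missing environment variable(s): " + ", ".join(missing)
            + ". Set them with %env in the notebook before continuing."
        )
    # Strip a trailing slash so f"{api_url}/indexes" never produces "//"
    return env["API_URL"].rstrip("/"), env["API_KEY"]
```

In the notebook you would call `API_URL, API_KEY = load_config()` in place of the two asserts.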

Index API

The next step involves using the Index API to create a video index. A video index is a way to group one or more videos together and set some common search properties, thereby allowing you to perform semantic searches on the videos uploaded to the index.

An index is defined by the following fields:

<ul>
<li>A <b>name</b></li>
<li>An <b>engine</b>: currently, we offer Marengo2, our latest multimodal foundation model for video understanding</li>
<li>One or more <b>indexing options</b>:
<ul>
<li><b>visual</b>: If this option is selected, the API service performs a multimodal audio-visual analysis of your videos, allowing you to search by objects, actions, sounds, movements, places, situational events, and complex audio-visual text descriptions. Examples of visual searches include a crowd cheering or tired developers leaving an office😆.</li>
<li><b>conversation</b>: When this option is selected, the API extracts a transcript from the video and performs semantic NLP analysis on it. This lets you pinpoint the precise moments in your video where the conversation you're searching for takes place, for example, the moment you lied to your sibling😜.</li>
<li><b>text_in_video</b>: When this option is selected, the API service performs text recognition (OCR), allowing you to search for text that appears in your videos, such as signs, labels, subtitles, logos, presentations, and documents. For example, you might search for brands that appear during a football match🏈.</li>
</ul>
</li>
</ul>
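To catch typos in option names before making a request, the index-creation body can be built by a small helper. This is a sketch that assumes the three option names listed above are the only valid ones and reuses the field names (`engine_id`, `index_options`, `index_name`) from the index-creation snippet in this tutorial; `build_index_payload` itself is hypothetical.

```python
VALID_INDEX_OPTIONS = {"visual", "conversation", "text_in_video"}


def build_index_payload(index_name, index_options, engine_id="marengo2"):
    """Build the JSON body for POST {API_URL}/indexes (hypothetical helper).

    Unknown option names are rejected locally, before any API call is made.
    """
    if not index_options:
        raise ValueError("At least one indexing option is required")
    unknown = set(index_options) - VALID_INDEX_OPTIONS
    if unknown:
        raise ValueError(f"Unknown indexing option(s): {sorted(unknown)}")
    return {
        "engine_id": engine_id,
        "index_options": list(index_options),  # keep the caller's order
        "index_name": index_name,
    }
```

The returned dictionary can be passed as the `json=` argument of `requests.post(INDEXES_URL, headers=headers, json=...)`.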

Creating an index:

<pre><code class="python"># Construct the URL of the `/indexes` endpoint
INDEXES_URL = f"{API_URL}/indexes"
# Specify the name of the index
INDEX_NAME = "My University Days"
# Set the header of the request
headers = {
    "x-api-key": API_KEY
}
# Declare a dictionary named data
data = {
    "engine_id": "marengo2",
    "index_options": ["visual", "conversation", "text_in_video"],
    "index_name": INDEX_NAME,
}
# Create an index
response = requests.post(INDEXES_URL, headers=headers, json=data)
# Store the unique identifier of your index
INDEX_ID = response.json().get('_id')
# Print the status code and response
print(f'Status code: {response.status_code}')
pprint(response.json())
</code></pre>

Task API to upload a video

The Twelve Labs platform offers a Task API to upload videos into the created index and monitor the status of the upload process:

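<pre><code class="python">TASKS_URL = f"{API_URL}/tasks"
file_name = "Machine Learning is Everywhere"  # the indexed video will have this file name
file_path = "ml.mp4"  # path of the local video file being uploaded
data = {
    "index_id": INDEX_ID,
    "language": "en"
}
# Open the file in a context manager so it is closed once the upload finishes
with open(file_path, "rb") as file_stream:
    file_param = [
        ("video_file", (file_name, file_stream, "application/octet-stream")),
    ]
    response = requests.post(TASKS_URL, headers=headers, data=data, files=file_param)
# Store the unique identifier of the upload task; it is used to monitor indexing
TASK_ID = response.json().get("_id")
print(f"Status code: {response.status_code}")
pprint(response.json())
</code></pre>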

Once you upload a video, the system automatically starts the video indexing process. At Twelve Labs, "video indexing" means using a multimodal foundation model that incorporates temporal context to extract information such as movements, objects, sounds, on-screen text, and speech from your videos, generating powerful video embeddings. These embeddings then let you find specific moments within your videos using everyday language, or categorize video segments based on provided labels and prompts.

Monitoring the video indexing process:

<pre><code class="python">import time

# Define starting time
start = time.time()
print("Start monitoring the indexing task")
# Monitor the indexing process
TASK_STATUS_URL = f"{API_URL}/tasks/{TASK_ID}"
while True:
    response = requests.get(TASK_STATUS_URL, headers=headers)
    STATUS = response.json().get("status")
    if STATUS == "ready":
        print(f"Status: {STATUS}")
        break
    if STATUS == "failed":
        # Stop polling instead of looping forever on a failed task
        raise RuntimeError(f"Indexing failed: {response.json()}")
    time.sleep(10)
# Define ending time
end = time.time()
print("Finished indexing video")
print("Time elapsed (in seconds): ", end - start)
</code></pre>
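Without an upper bound, a polling loop like the one above keeps running if the task never reaches `ready`. Here is a minimal sketch of a bounded version; it assumes the task reports `failed` when indexing fails, and `wait_for_task` is a hypothetical helper. The status getter, sleep function, and clock are passed in as parameters so the polling logic can be exercised without calling the API.

```python
import time


def wait_for_task(get_status, interval=10.0, timeout=1800.0,
                  sleep=time.sleep, clock=time.monotonic):
    """Poll get_status() until it returns "ready" (hypothetical helper).

    get_status is any zero-argument callable returning the task's status
    string. Raises on "failed" or once `timeout` seconds have elapsed.
    """
    deadline = clock() + timeout
    while True:
        status = get_status()
        if status == "ready":
            return status
        if status == "failed":
            raise RuntimeError("Indexing task failed")
        if clock() >= deadline:
            raise TimeoutError(f"Task still '{status}' after {timeout} seconds")
        sleep(interval)
```

For example: `wait_for_task(lambda: requests.get(TASK_STATUS_URL, headers=headers).json().get("status"))`.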

<pre><code class="python"># Retrieve the unique identifier of the video
VIDEO_ID = response.json().get('video_id')
# Print the unique identifier of the video and the response
print(f"VIDEO ID: {VIDEO_ID}")
pprint(response.json())
</code></pre>

<pre><code class="language-plaintext">VIDEO ID: 642621ffffa3551fb6d2f###
{'_id': '642621fc3205dc8a48ba8###',
 'created_at': '2023-03-30T23:57:48.877Z',
 'estimated_time': '2023-03-30T23:59:58.312Z',
 'index_id': '###621fb7b1f2230dfcd6###',
 'metadata': {'duration': 80.32,
              'filename': 'Machine Learning is Everywhere',
              'height': 720,
              'width': 1280},
 'status': 'ready',
 'type': 'index_task_info',
 'updated_at': '2023-03-31T00:00:34.412Z',
 'video_id': '642621ffffa3551fb6d2f###'}
</code></pre>

Next, create another environment variable to pass the unique identifier of the existing index to our app:

<pre><code class="python">%env ENV_INDEX_ID=###621fb7b1f2230dfcd6###
</code></pre>

Here's a list of all the videos in the index. For now, we've only indexed one video to keep things simple, but you can upload up to 10 hours of video content using our free credits:

<pre><code class="python"># Retrieve the unique identifier of the existing index
INDEX_ID = os.getenv("ENV_INDEX_ID")
# Set the header of the request
headers = {
    "x-api-key": API_KEY,
}
# List all the videos in an index
INDEXES_VIDEOS_URL = f"{API_URL}/indexes/{INDEX_ID}/videos"
response = requests.get(INDEXES_VIDEOS_URL, headers=headers)
print(f'Status code: {response.status_code}')
pprint(response.json())
</code></pre>

<pre><code class="language-plaintext">Status code: 200
{'data': [{'_id': '642621ffffa3551fb6d2f###',
           'created_at': '2023-03-30T23:57:48Z',
           'metadata': {'duration': 80.32,
                        'engine_id': 'marengo2',
                        'filename': 'Machine Learning is Everywhere',
                        'fps': 25,
                        'height': 720,
                        'size': 11877525,
                        'width': 1280},
           'updated_at': '2023-03-30T23:57:51Z'}],
 'page_info': {'limit_per_page': 10,
               'page': 1,
               'total_duration': 80.32,
               'total_page': 1,
               'total_results': 1}}
</code></pre>
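The list-videos response is paginated (`page_info` reports `page` and `total_page`), so an index holding more videos than `limit_per_page` will span several pages. Here is a sketch of collecting every page. It assumes the endpoint accepts a `page` query parameter, which you should confirm in the API reference, and `list_all_videos` is a hypothetical helper.

```python
def list_all_videos(fetch_page):
    """Collect videos from every page of the list-videos response (hypothetical helper).

    fetch_page(page) must return the parsed JSON for one page, shaped like
    {"data": [...], "page_info": {"page": ..., "total_page": ...}}.
    """
    videos, page = [], 1
    while True:
        body = fetch_page(page)
        videos.extend(body.get("data", []))
        info = body.get("page_info", {})
        # Stop once the last reported page has been read
        if page >= info.get("total_page", page):
            return videos
        page += 1
```

With requests, the fetcher could look like `lambda page: requests.get(INDEXES_VIDEOS_URL, headers=headers, params={"page": page}).json()`.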

Semantic video search, aka finding specific moments

Once the system finishes indexing the video and generating video embeddings, you can use them to find specific moments with the search API. This API returns the exact start and end time codes in relevant videos that match the semantic meaning of your query. The search options available to you are a subset of the indexing options you selected when creating the index. For instance, if you enabled all the options for the index, you can search audio-visually, for conversations, and for any text appearing within the videos. Offering the same set of options at both the index and search levels gives you flexibility: you decide how the platform analyzes your video content when you index it, and which combination of options suits your current context when you search.
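Because search options must come from the options enabled on the index, a helper can check that locally before sending the request. Here is a minimal sketch using the same body fields as the search snippet in this tutorial (`query`, `index_id`, `search_options`); `build_search_payload` is hypothetical.

```python
def build_search_payload(query, index_id, search_options, index_options):
    """Build the JSON body for POST {API_URL}/search (hypothetical helper).

    Search options must be a subset of the options the index was created
    with; anything else is rejected before the request is sent.
    """
    if not search_options:
        raise ValueError("At least one search option is required")
    not_indexed = set(search_options) - set(index_options)
    if not_indexed:
        raise ValueError(
            f"Option(s) {sorted(not_indexed)} were not enabled on this index"
        )
    return {
        "query": query,
        "index_id": index_id,
        "search_options": list(search_options),
    }
```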

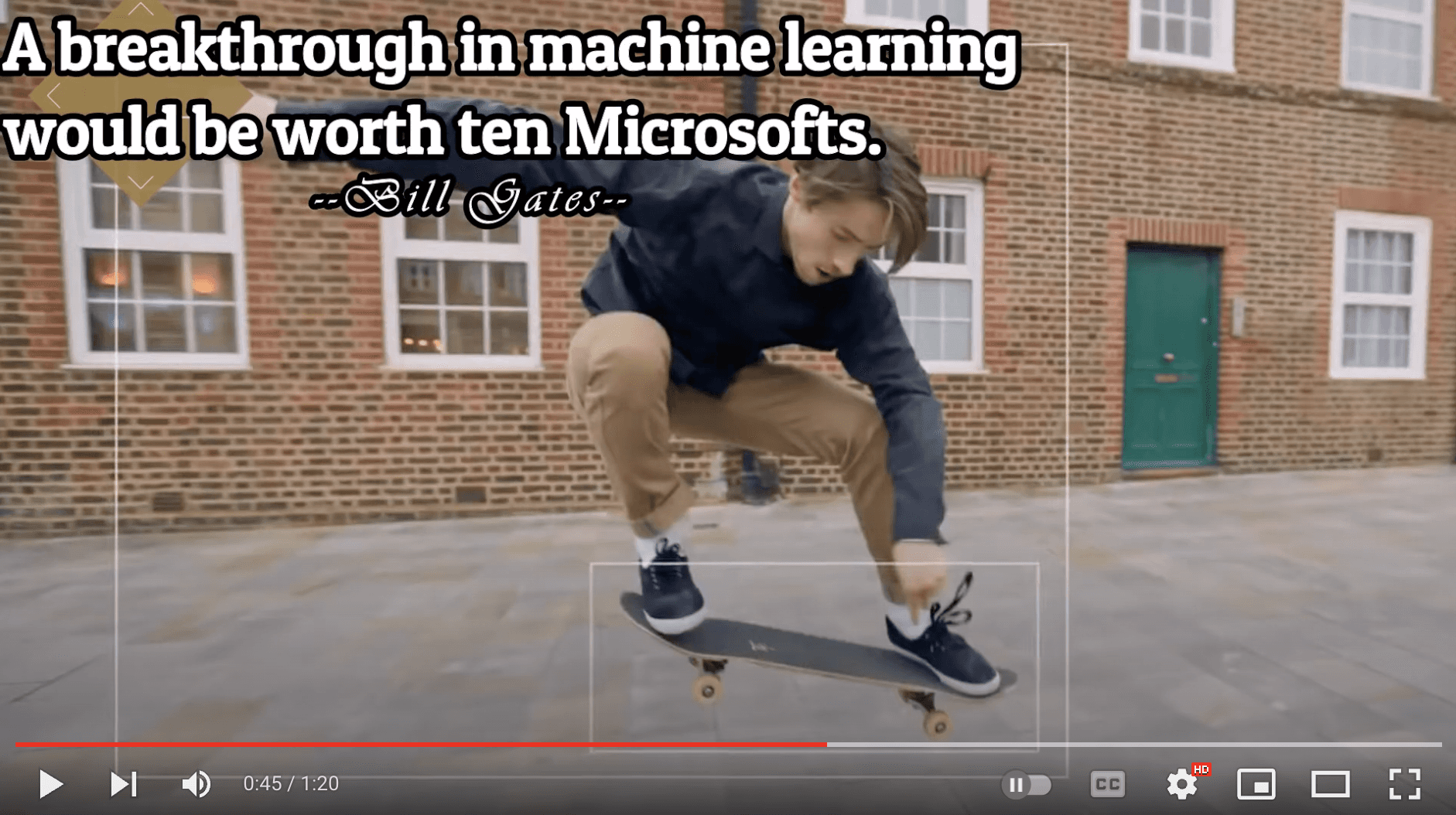

Let’s start with a visual search using a simple natural language query, “a guy doing a trick on a skateboard”:

<pre><code class="language-plaintext">Status code: 200
{'data': [{'confidence': 'high',
           'end': 49.34375,
           'metadata': [{'type': 'visual'}],
           'score': 83.24,
           'start': 41.65625,
           'video_id': '642621ffffa3551fb6d2f###'}],
 'page_info': {'limit_per_page': 10,
               'page_expired_at': '2023-03-31T22:41:42Z',
               'total_results': 1},
 'search_pool': {'index_id': '642621fb7b1f2230dfcd####',
                 'total_count': 1,
                 'total_duration': 80}}
</code></pre>
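The request behind this response follows the same pattern as the table-tennis search shown further down, with a different `query`. To read results like these without scanning raw JSON, a small helper can pull out `(start, end, score)` tuples and sort them by score. This is a sketch: `top_segments` is hypothetical, and it assumes confidence labels rank as `low` < `medium` < `high` (only `high` appears in the responses in this post).

```python
def top_segments(search_response, min_confidence="low"):
    """Return (start, end, score) tuples sorted by descending score (hypothetical helper).

    Results whose confidence ranks below min_confidence are dropped;
    unrecognized labels are treated as the lowest rank.
    """
    rank = {"low": 0, "medium": 1, "high": 2}  # assumed label ordering
    threshold = rank[min_confidence]
    segments = [
        (item["start"], item["end"], item["score"])
        for item in search_response.get("data", [])
        if rank.get(item.get("confidence"), 0) >= threshold
    ]
    return sorted(segments, key=lambda seg: seg[2], reverse=True)
```

Applied to the response above, `top_segments(response.json())` gives a single segment from 41.65625 to 49.34375 seconds with a score of 83.24.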

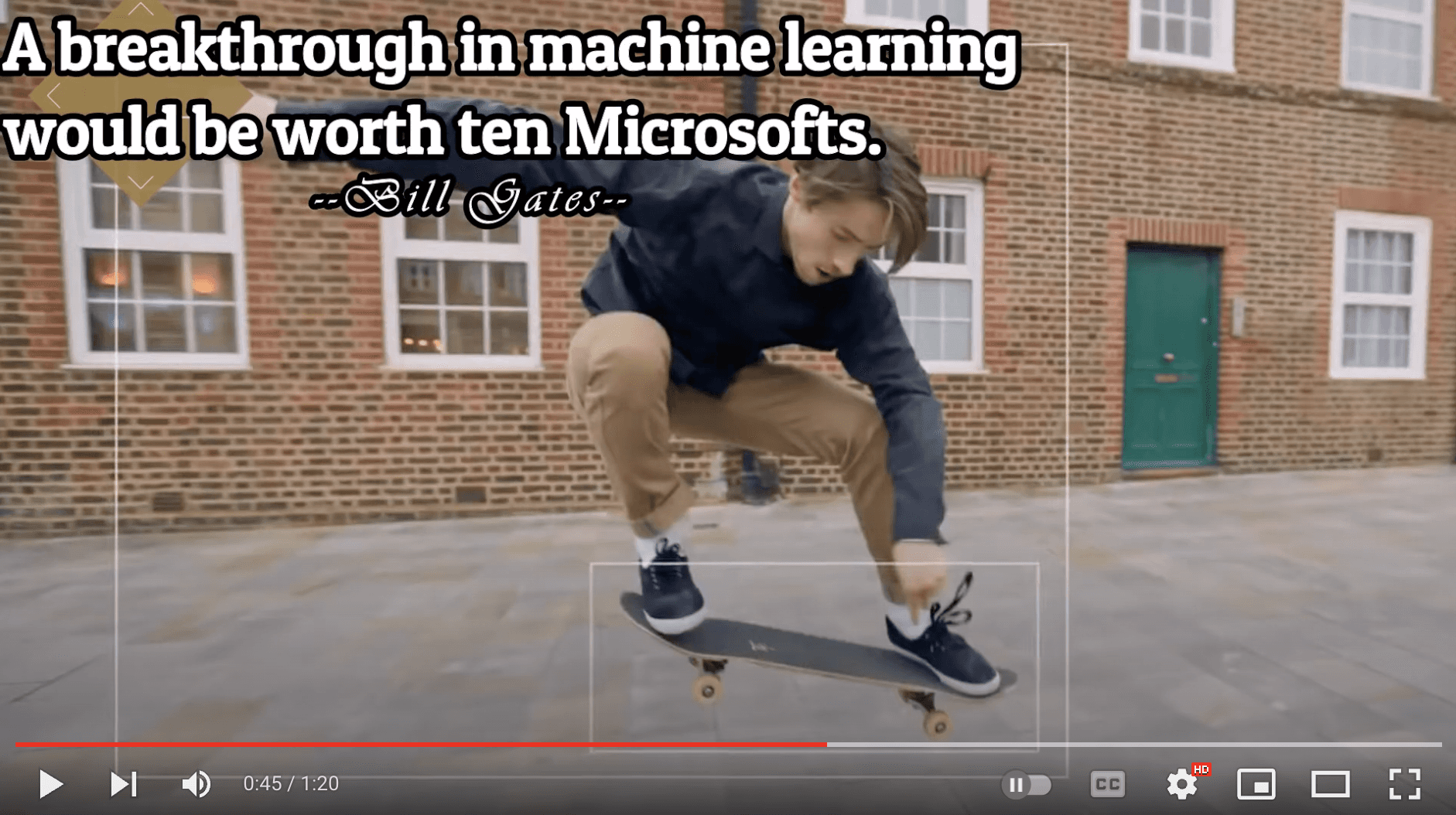

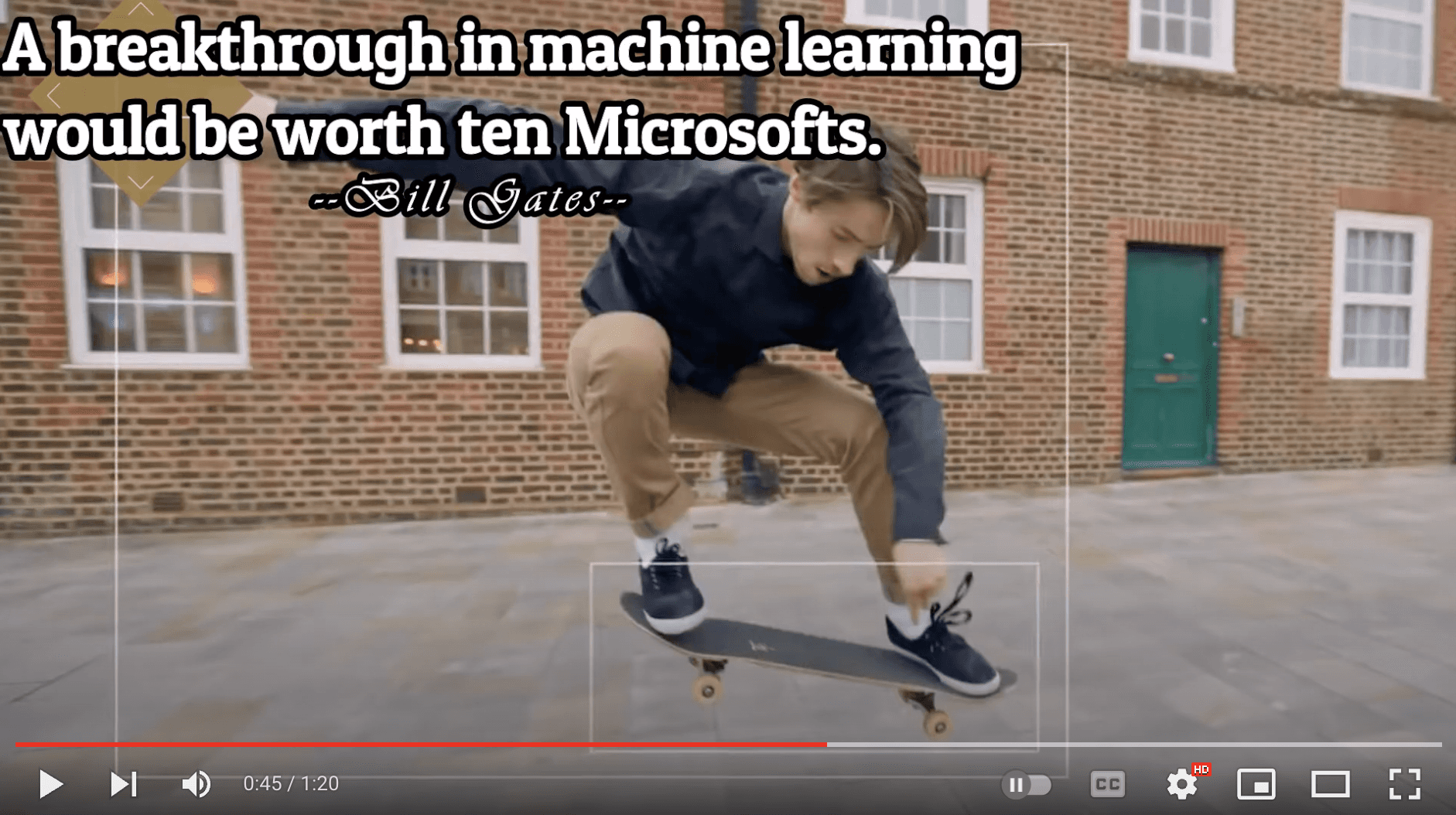

Corresponding video segment:

This part gets me super pumped because it showcases the model's human-like understanding of the video content. As you can see in the above screenshot, the system nails it by pinpointing the exact moment I wanted to extract.

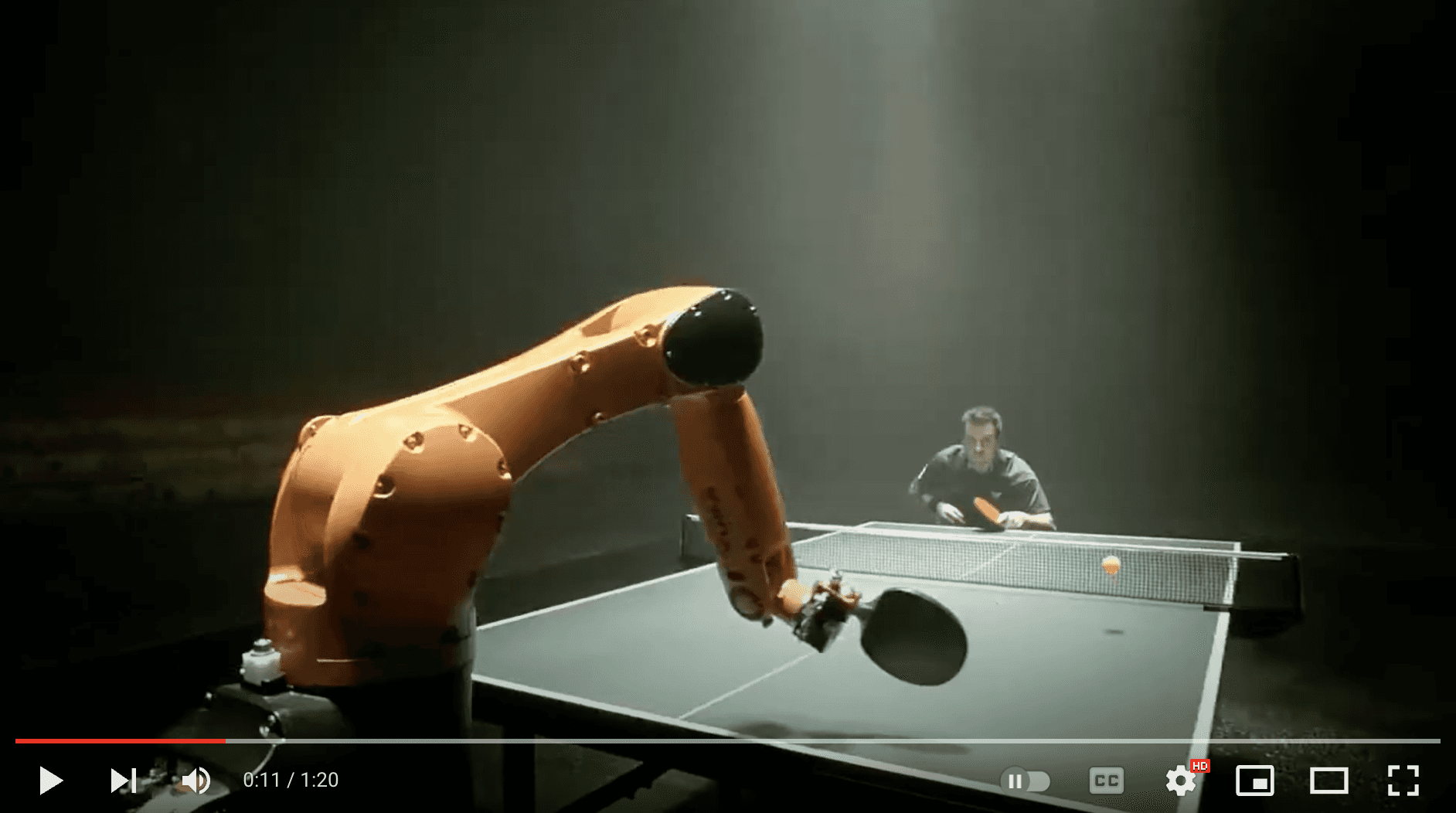

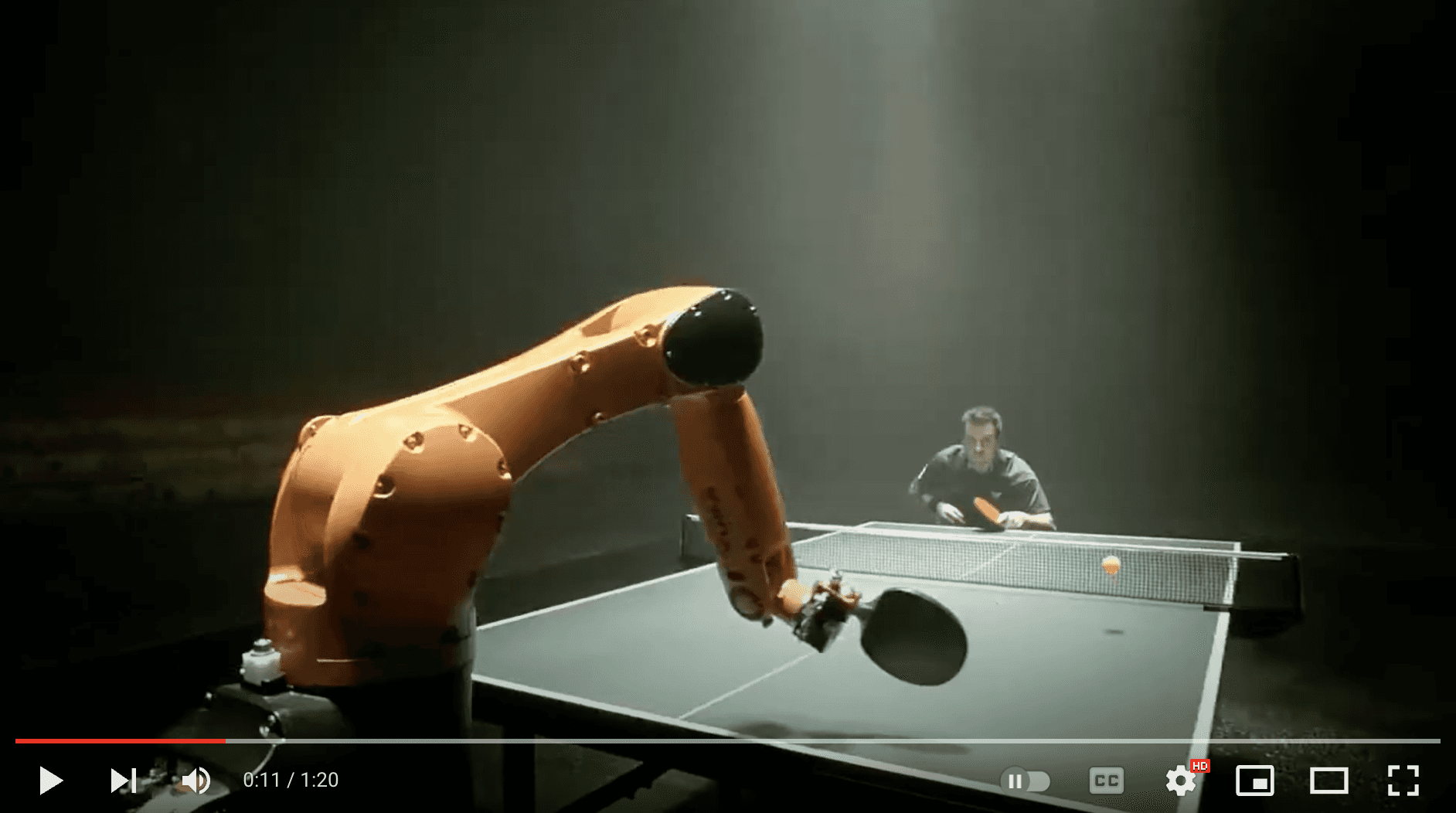

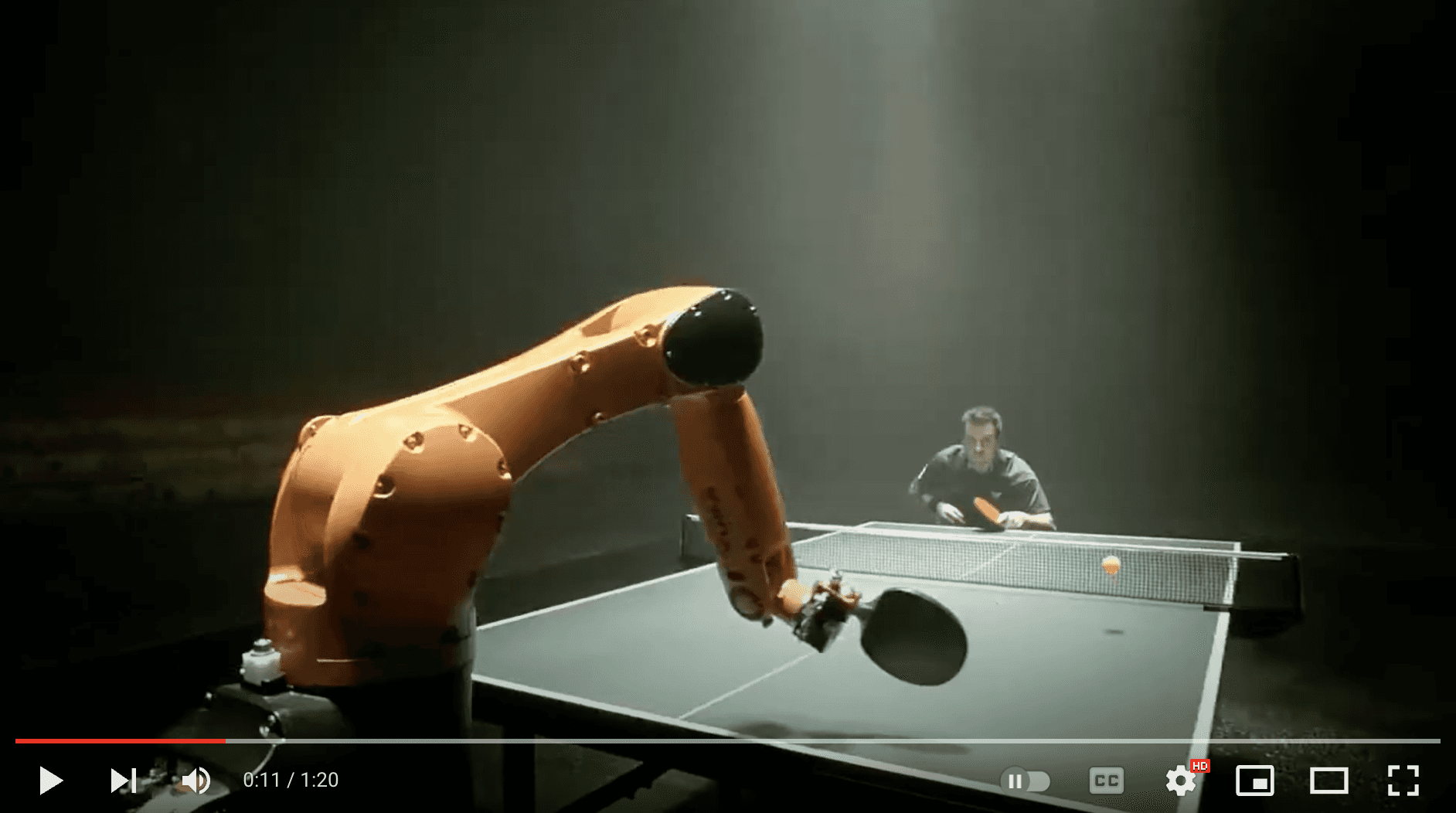

Let's give another query a shot, "a guy playing table tennis with a robotic arm", and watch the system work its magic once more:

<pre><code class="python"># Construct the URL of the `/search` endpoint
SEARCH_URL = f"{API_URL}/search/"
# Set the header of the request
headers = {
    "x-api-key": API_KEY
}
query = "a guy playing table tennis with a robotic arm"
# Declare a dictionary named `data`
data = {
    "query": query,  # Specify your search query
    "index_id": INDEX_ID,  # Indicate the unique identifier of your index
    "search_options": ["visual"],  # Specify the search options
}
# Make a search request
response = requests.post(SEARCH_URL, headers=headers, json=data)
print(f"Status code: {response.status_code}")
pprint(response.json())
</code></pre>

Output:

<pre><code class="language-plaintext">{'data': [{'confidence': 'high',
           'end': 14.6875,
           'metadata': [{'type': 'visual'}],
           'score': 90.62,
           'start': 9.75,
           'video_id': '642621ffffa3551fb6d2####'},
          {'confidence': 'high',
           'end': 9.75,
           'metadata': [{'type': 'visual'}],
           'score': 89.74,
           'start': 4.5,
           'video_id': '642621ffffa3551fb6d2####'}],
</code></pre>
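The two hits above are back-to-back (4.5 to 9.75 seconds and 9.75 to 14.6875 seconds), so they could be shown as a single clip. An optional post-processing step, not something the API does for you, is to merge overlapping or adjacent segments before displaying them. `merge_segments` is a hypothetical helper, and the `gap` tolerance is my own addition.

```python
def merge_segments(segments, gap=0.0):
    """Merge (start, end) segments that overlap or sit within `gap` seconds."""
    merged = []
    for start, end in sorted(segments):
        if merged and start <= merged[-1][1] + gap:
            # Extend the previous segment instead of starting a new one
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged
```

For the response above, `merge_segments([(9.75, 14.6875), (4.5, 9.75)])` returns `[(4.5, 14.6875)]`.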

Corresponding video segments:

Bingo! Once again, the system pinpointed those fascinating moments spot-on.

💡 Here's a fun little task for you: search for "a breakthrough in machine learning would be worth ten Microsofts" with the search option set to ["text_in_video"].

To make the most of these JSON responses without manually checking the start and end points, we need a stunning index page crafted with love. That way, the start and end times from the search response can drive the embedded video player directly. Let's get to it!

Crafting a demo app

Kudos for sticking with me on this awesome video understanding adventure 🎉🥳👏! We've reached the final step, where we'll craft a straightforward Flask-based app that takes the search results from our previous steps and presents them on a beautiful web page, showcasing the exact moments we requested. By the way, I chose Flask because I come from a data science background and I love Python. Moreover, Flask is a lightweight Python framework that fits the needs of this tutorial. However, you're welcome to pick any framework that suits your preferences and requirements.

The first step is to add the necessary imports to our Jupyter Notebook:

<pre><code class="python">import json
import pickle
</code></pre>

We'll be generating two lists - "starts" and "ends" - that hold all the starting and ending timestamps gathered from the search API:

<pre><code class="python">data = response.json()
starts = []
ends = []
for item in data['data']:
    starts.append(item['start'])
    ends.append(item['end'])
print("starts:", starts)
print("ends:", ends)
</code></pre>

<pre><code class="language-plaintext">starts: [34.90625, 0, 62.5625]
ends: [39.21875, 4.5, 65.90625]
</code></pre>

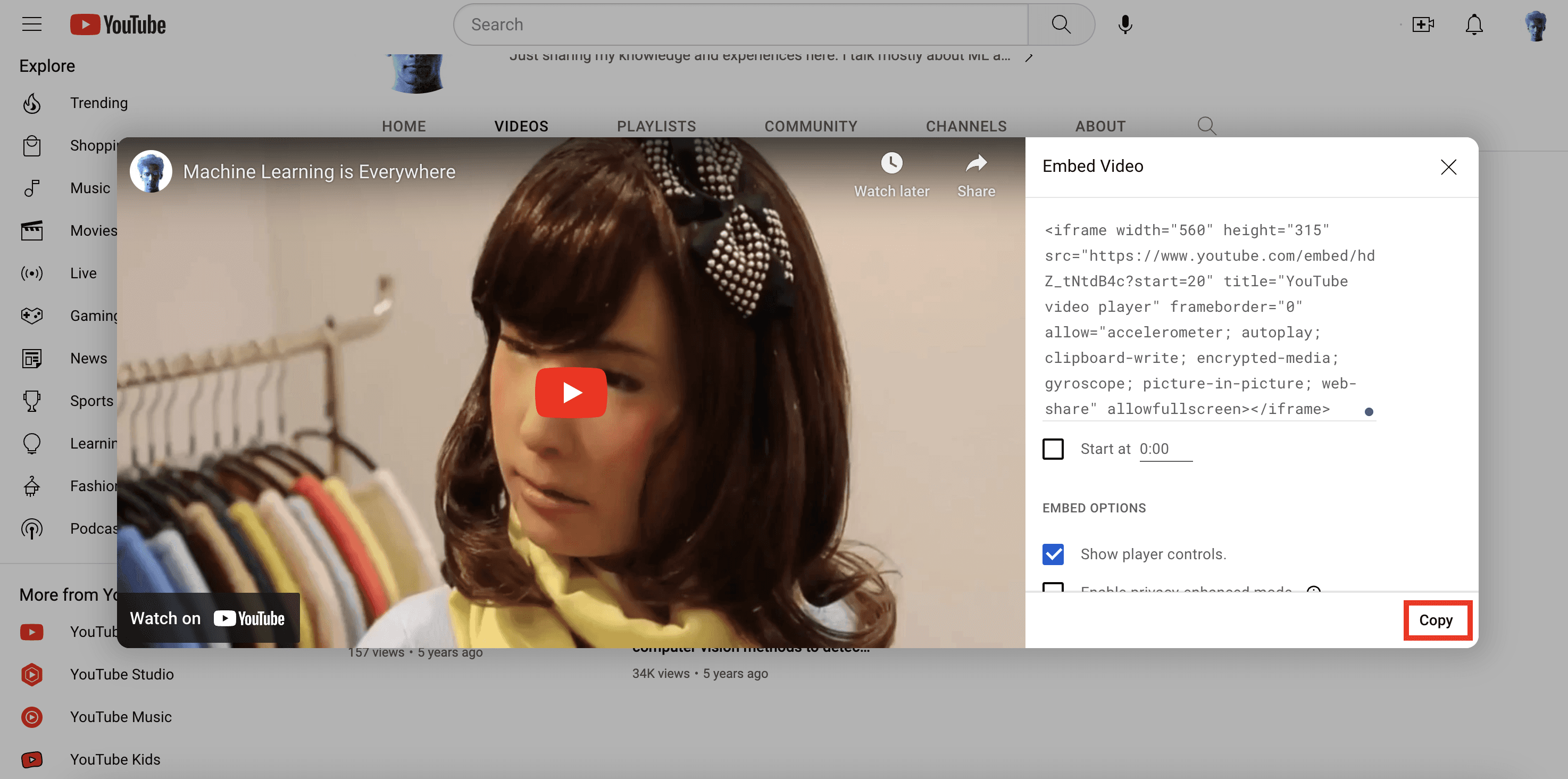

Now that I have the required timestamps, and the same video is uploaded to my YouTube channel, there are a couple of ways to use them. I could either load the video from my local disk and display my favorite clips on the web page, or simply use the video URL from my YouTube channel to achieve the same result. I find the latter more appealing, so I'll use the YouTube embed code for the video and pass the start and end timestamps to it. This way, only the video segments I searched for will be played. A minor heads-up: the YouTube embed code only supports integer values for the start and end parameters, so we need to convert these values to whole seconds. Rounding each start down and each end up keeps every matched segment fully inside its clip:

<pre><code class="python">import math

# YouTube's start/end embed parameters accept whole seconds only:
# floor the starts and ceil the ends so no part of a matched segment is cut off
starts_int = [math.floor(f) for f in starts]
ends_int = [math.ceil(f) for f in ends]
</code></pre>
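If you'd rather build the embed URLs in Python than in the template, the conversion can live in one helper. This sketch floors the start and ceils the end (so the whole matched segment plays) and encodes them as the `start` and `end` query parameters of the YouTube embed URL used in the template in this tutorial. `youtube_embed_url` is a hypothetical name.

```python
import math
from urllib.parse import urlencode


def youtube_embed_url(video_id, start, end):
    """Build a YouTube embed URL that plays only [start, end] (hypothetical helper).

    The start/end player parameters take whole seconds, so the start is
    floored and the end is ceiled to keep the full matched segment.
    """
    params = {"start": math.floor(start), "end": math.ceil(end)}
    return f"https://www.youtube.com/embed/{video_id}?{urlencode(params)}"
```

For example, `[youtube_embed_url("hdZ_tNtdB4c", s, e) for s, e in zip(starts, ends)]` produces one URL per search hit.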

Let's quickly pickle these lists along with the query we entered. This will be useful when we pass them to the Flask app file we're preparing to create:

<pre><code class="python">with open("lists.pkl", "wb") as f:
    pickle.dump((starts_int, ends_int, query), f)
</code></pre>
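Pickle works fine here because the file is produced and consumed on your own machine, but Python's documentation warns that unpickling data from untrusted sources can execute arbitrary code. If you'd rather store the results as plain text (the notebook already imports `json`), here is a sketch of a JSON-based alternative. `save_results`, `load_results`, and the `lists.json` file name are hypothetical; app.py would then call `load_results` instead of `pickle.load`.

```python
import json


def save_results(path, starts, ends, query):
    """Write the integer timestamps and the query to a JSON file (pickle alternative)."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump({"starts": starts, "ends": ends, "query": query}, f)


def load_results(path):
    """Read back a file written by save_results; returns (starts, ends, query)."""
    with open(path, encoding="utf-8") as f:
        data = json.load(f)
    return data["starts"], data["ends"], data["query"]
```

In the notebook you would call `save_results("lists.json", starts_int, ends_int, query)`, and in app.py `starts, ends, query = load_results("lists.json")`.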

Voilà! We're all set to work with Flask and pass these parameters.

Steps to create Flask app

1. Create a new Flask project: create a new directory for the project and create a new Python file that will serve as the main file for your Flask app.

<pre><code class="bash">mkdir my_flask_app
cd my_flask_app
touch app.py
</code></pre>

Once we have the Flask app file and the template ready, the directory structure will look like this:

<pre><code class="markdown">my_flask_app/
│   app.py
│   lists.pkl
│   ml.mp4
│
└───templates/
        index.html
</code></pre>

Keep the video file you will upload within the my_flask_app directory.

2. Write the Flask app code: In the app.py file, we write the code for our Flask app. Here is a Flask app that uses Jinja2 templates and renders the index.html file, where our lists of timestamps are used:

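<pre><code class="python">from flask import Flask, render_template
import pickle

# Load the timestamps and the query saved from the notebook
with open("lists.pkl", "rb") as f:
    starts, ends, query = pickle.load(f)

app = Flask(__name__)


@app.route("/")
def index():
    # Pass the lists and the query to templates/index.html
    return render_template("index.html", starts=starts, ends=ends, query=query)


if __name__ == "__main__":
    # Disable the auto-reloader: it re-launches the Python process,
    # which doesn't work well when the app is started from a Jupyter kernel
    app.run(debug=True, use_reloader=False)
</code></pre>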

3. Create a templates directory: To use Jinja2 templates with Flask, we need a templates directory in the same directory as our Flask app. This is where we'll store our Jinja2 templates:

<pre><code class="bash">mkdir templates </code></pre>

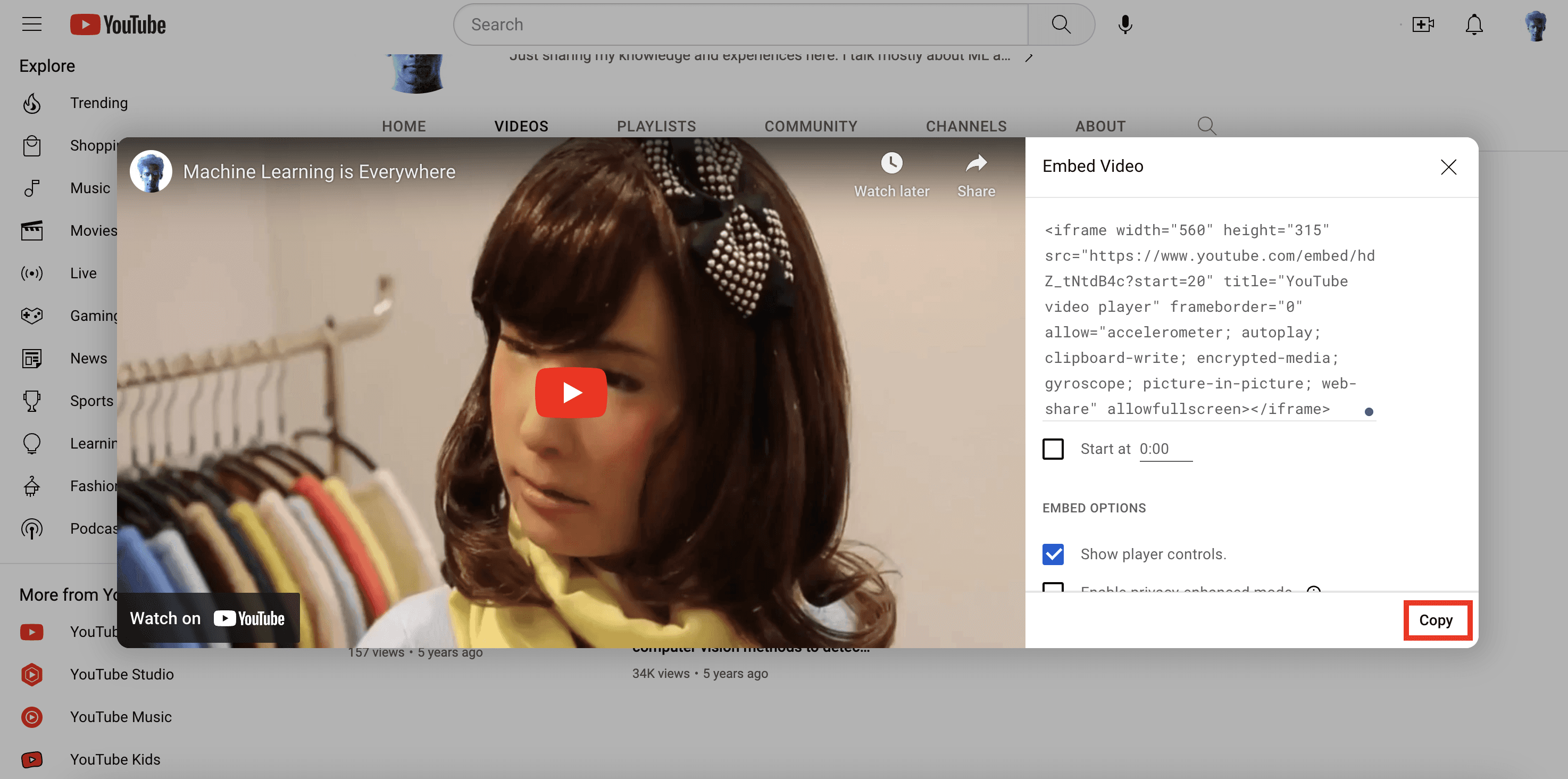

The final piece of the puzzle is the index.html page that will display all the video segments that matched the search query. Before we work on the HTML file, let’s quickly grab the Embed Video code from my YouTube channel:

4. Create a Jinja2 template: To create a Jinja2 template, we need to create an HTML file in the templates directory:

<pre><code class="bash">touch templates/index.html </code></pre>

Here is a simple example of a Jinja2 template. It embeds code in the HTML file that iterates through the lists and displays the query string we passed from the app file:

<pre><code class="language-html"><html>
<head>
  <style>
    body {
      background-color: #F2F2F2;
      font-family: Arial, sans-serif;
      text-align: center;
    }
    h1 {
      margin-top: 40px;
    }
    .video-container {
      display: flex;
      flex-wrap: wrap;
      padding: 40px;
      justify-content: center;
    }
    .video-item {
      display: flex;
      flex-direction: column;
      align-items: center;
      width: 50%;
      height: 600px;
      margin: 20px;
      text-align: center;
    }
    .video-item iframe {
      width: 80%;
      height: 380px;
      margin: 20px;
    }
    .video-item p {
      font-size: 16px;
      margin-top: 10px;
      font-weight: bold;
    }
  </style>
</head>
<body>
  <h1>My Favorite Scenes</h1>
  <div class="video-container">
    {% for i in range(starts|length) %}
    <div class="video-item">
      <iframe width="560" height="315"
        src="https://www.youtube.com/embed/hdZ_tNtdB4c?start={{ starts[i] }}&end={{ ends[i] }}"
        frameborder="0"
        allow="accelerometer; autoplay; encrypted-media; gyroscope; picture-in-picture"
        allowfullscreen></iframe>
      <p>Start: {{ starts[i] }} | End: {{ ends[i] }}</p>
      <p>Query: {{ query }}</p>
    </div>
    {% endfor %}
  </div>
</body>
</html>
</code></pre>
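Indexing two parallel lists with `range(starts|length)` works, but it relies on `starts` and `ends` having the same length; if `ends` were shorter, Jinja2 would render an empty value for the missing entries. A sketch of an alternative is to pair them in app.py and pass a single list of dictionaries to the template. `build_segments` and the `segments` template variable are hypothetical.

```python
def build_segments(starts, ends):
    """Pair start/end lists into dicts for the template (hypothetical helper).

    Raises if the lists differ in length instead of letting the template
    render blank timestamps.
    """
    if len(starts) != len(ends):
        raise ValueError("starts and ends must have the same length")
    return [{"start": s, "end": e} for s, e in zip(starts, ends)]
```

The loop in index.html would then become `{% for seg in segments %}`, with `{{ seg.start }}` and `{{ seg.end }}` in place of `starts[i]` and `ends[i]`.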

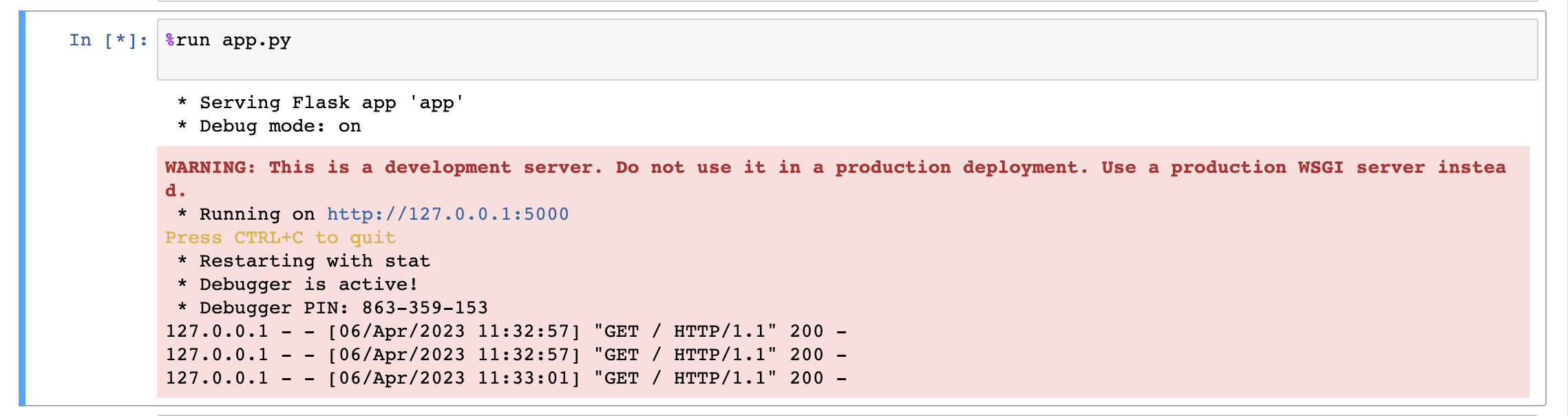

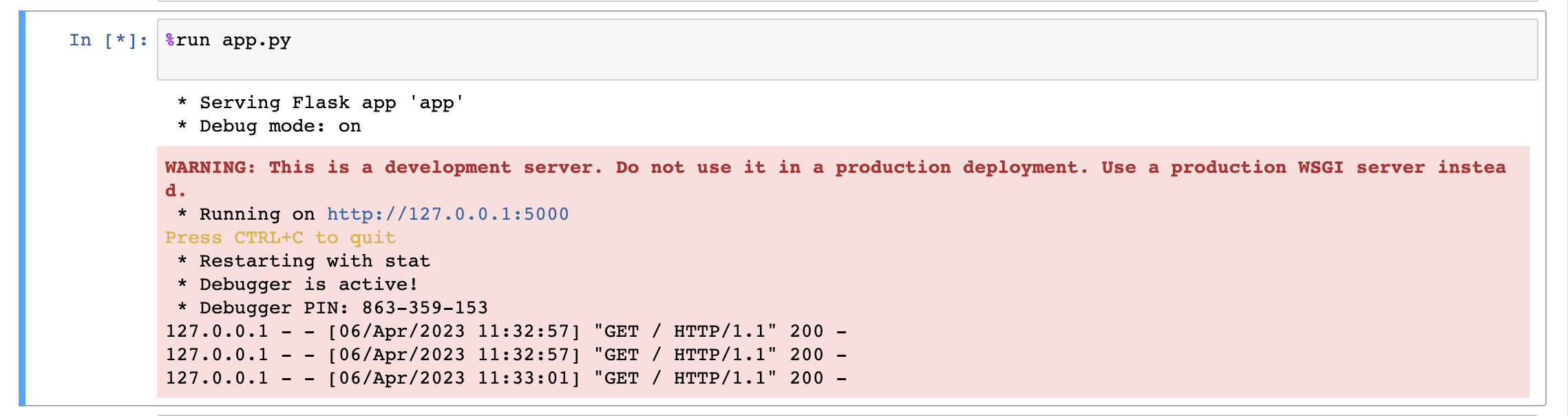

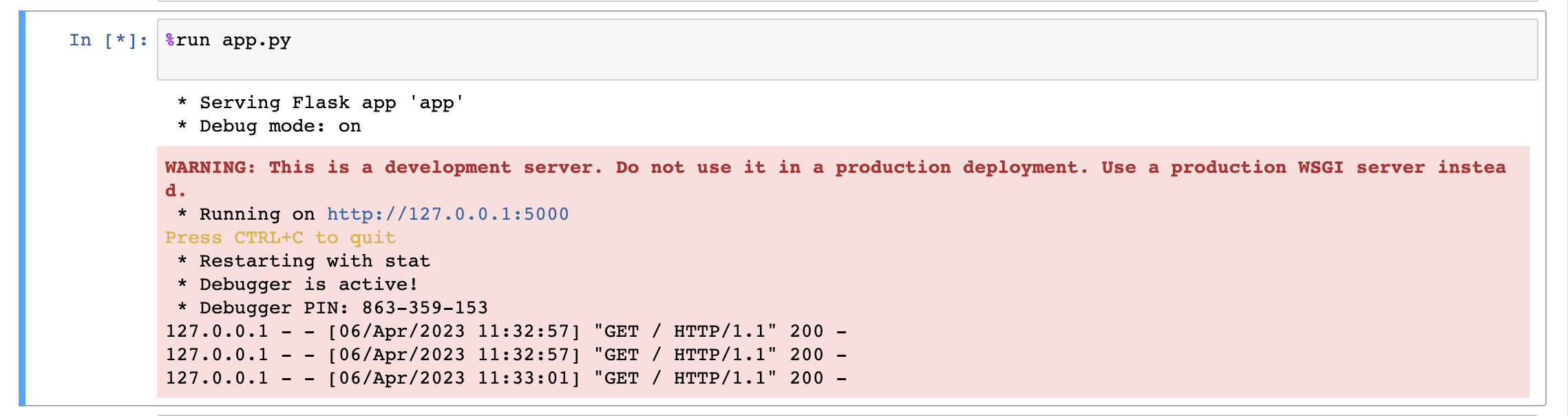

Perfect! Let's run the last cell of our Jupyter notebook:

<pre><code class="python">%run app.py </code></pre>

You should see an output similar to the one below, which indicates that everything is going according to our expectations😊:

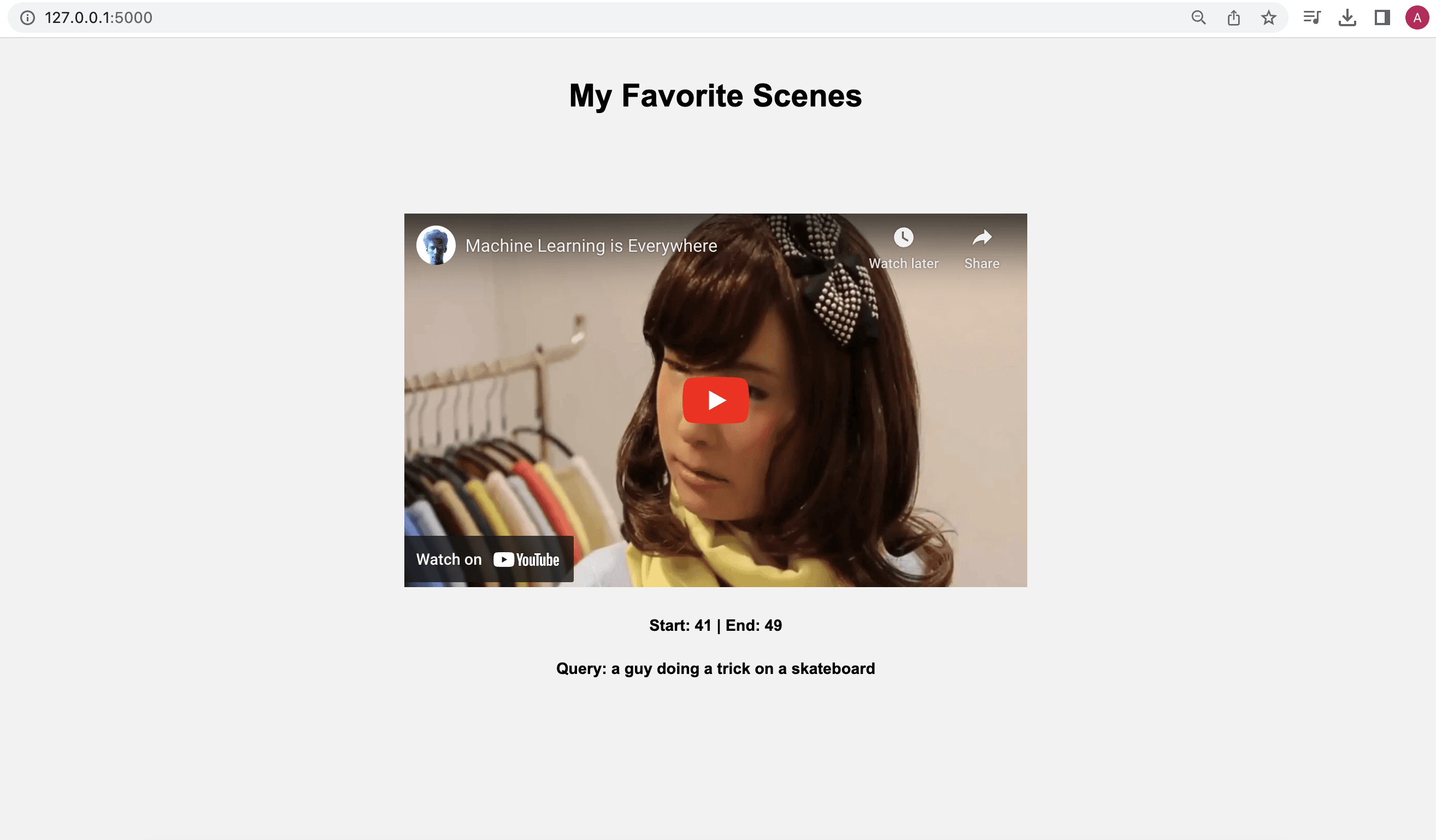

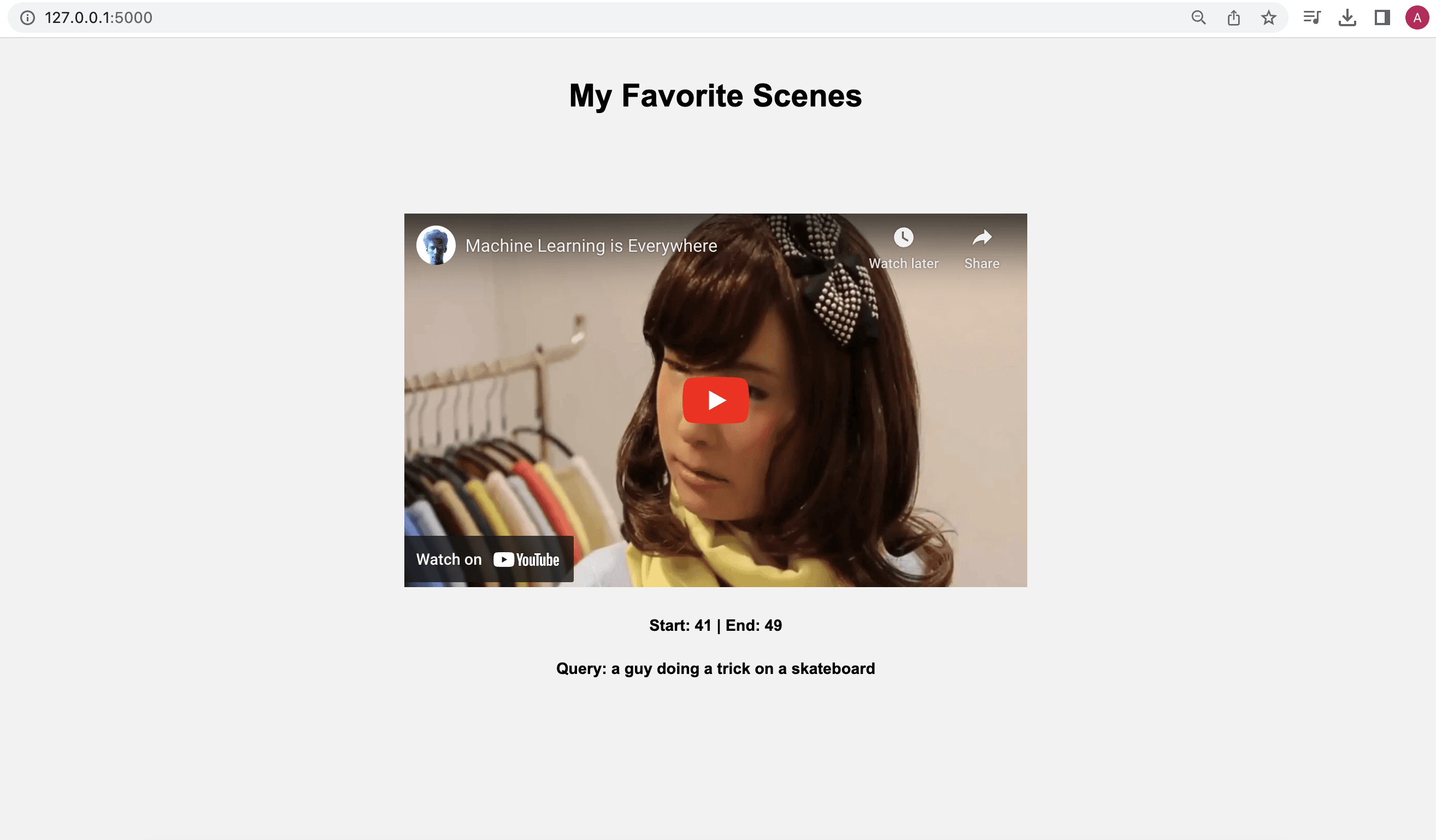

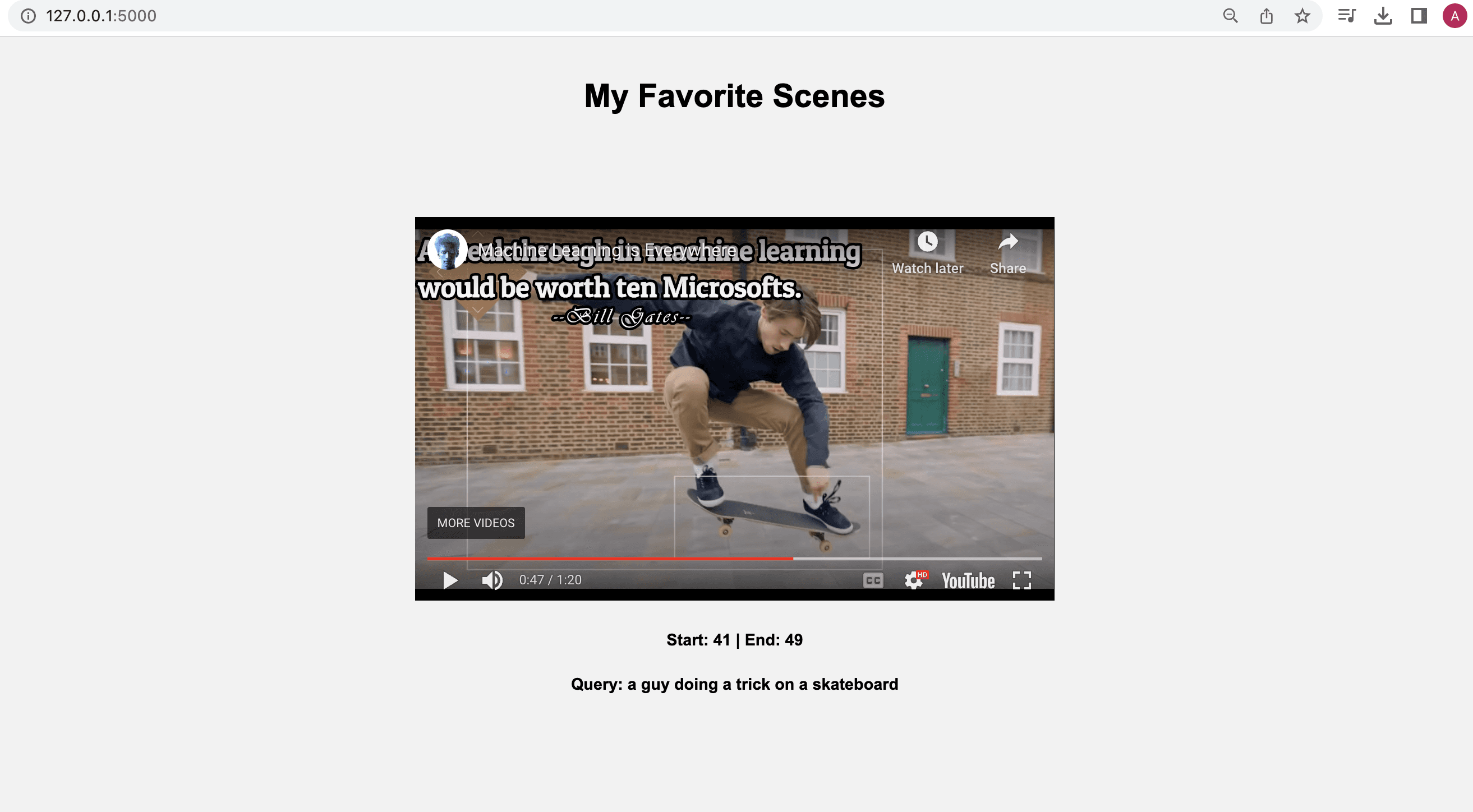

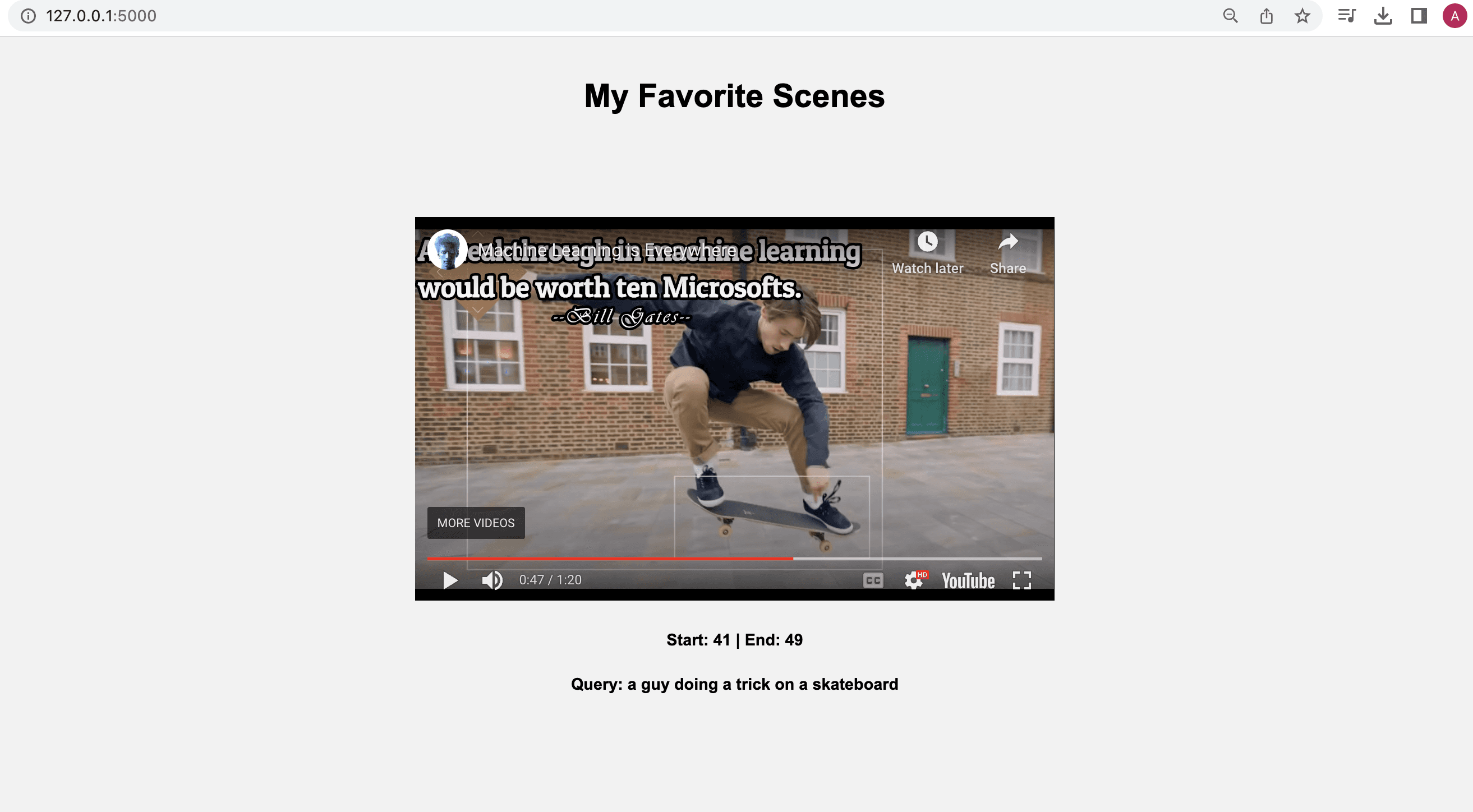

Once you open http://127.0.0.1:5000 in your browser, you should see a page like the one below (its content depends on your search query):

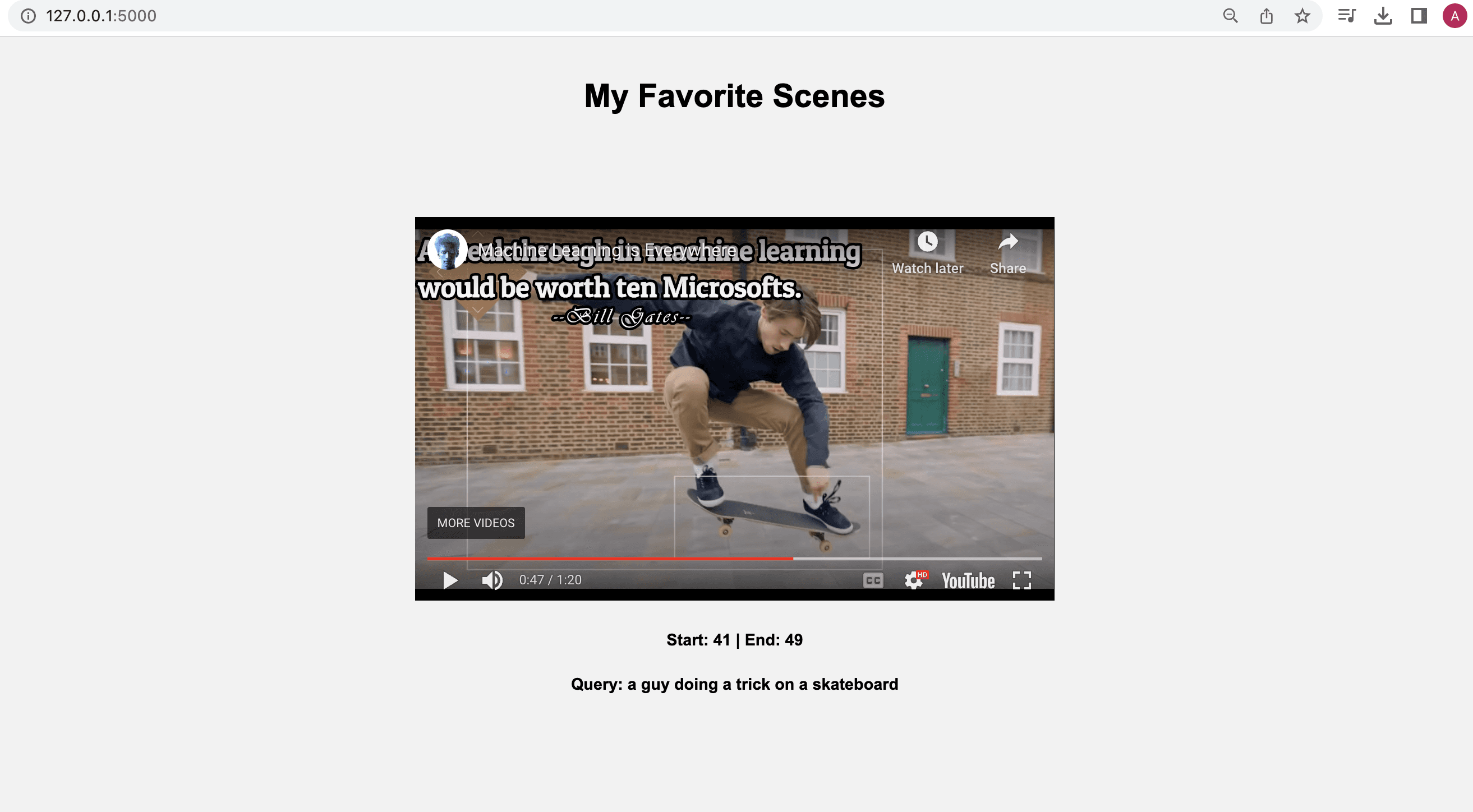

When you play the video, it will adhere to the timestamps we provided, highlighting the specific moments or segments we were interested in finding within the video:

Here's the link to the folder containing the Jupyter Notebook and all the required files necessary to run the tutorial locally on your own computer - https://tinyurl.com/twelvelabs

Fun activities for you to explore:

Experiment with different combinations of search options (visual, conversation, and text_in_video) and see how the results vary.

Upload multiple videos and tweak the code accordingly to search across all those videos simultaneously.

Show your pro developer skills and enhance the code by allowing users to input a query through the index.html page and fetch the results in real-time.
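For the multi-video and multi-option activities, it helps to organize a search response by video and by the option that matched. This sketch reads the `video_id`, `start`, `end`, and `metadata[*].type` fields seen in the responses earlier in this post; `group_results` is hypothetical, and a hit listing several metadata types is counted under each of them.

```python
from collections import defaultdict


def group_results(search_response):
    """Group search hits by video and by matching option (hypothetical helper).

    Returns {video_id: {option_type: [(start, end), ...]}}, where option_type
    is read from each hit's metadata list, e.g. "visual".
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for item in search_response.get("data", []):
        for meta in item.get("metadata", []):
            grouped[item["video_id"]][meta["type"]].append((item["start"], item["end"]))
    # Convert the nested defaultdicts to plain dicts for printing or serializing
    return {vid: dict(by_type) for vid, by_type in grouped.items()}
```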

What's next

In the next post, we'll dive into combining multiple simple queries using a set of operators and searching across a collection of videos with them. Stay tuned for the coming posts!

Oh, and one last thing: don't forget to join our Discord community to connect with fellow multimodal minds who share your interest in multimodal foundation models. It's a great place to exchange ideas, ask questions, and learn from one another!

Recently, Twelve Labs was featured as a pioneer in multimodal AI at GTC 2023. While watching the GTC 2023 video, I observed our co-founder, Soyoung, pulling her hair out finding the segment where our company was featured. This experience motivated me to tackle the challenge of searching within videos using our very own APIs. So, here we are with my fun-filled weekend project's tutorial, where I'll guide you through the process of finding specific moments in your videos using the Twelve Labs' search API.

A simple blueprint to make searching within videos better😎

Introduction

Twelve Labs provides a multimodal foundation model in the form of a suite of APIs, designed to assist you in creating applications that leverage the power of video understanding. In this blog post, we'll explore how you can use the Twelve Labs API to seamlessly find specific moments of interest in your video with natural language queries. I'll upload an entertaining video from my local drive, which I put together during my graduate school days, titled "Machine Learning is Everywhere." True to its name, this 80-second video illustrates the ubiquity of ML in all aspects of life. The video highlights ML applications, such as a professional ping pong player competing against a Kuka robot, a guy performing a skateboard trick with ML being used for summarizing the event, and more. With the help of Twelve Labs API, I'll demo how you could find a specific scene within a video using simple natural language queries.

In this tutorial, I aim to provide a gentle introduction to the simple search API, so I've kept it minimalistic by focusing on searching for moments within a single video and creating a demo app using the lightweight and user-friendly Flask framework. However, the platform is more than capable of scaling to accommodate uploading hundreds or even thousands of videos and finding specific moments within them. Let's dive in and gear up for some serious fun!

Quick overview

Prerequisites: Sign up to use Twelve Labs API suite and install the necessary packages to create the demo application

Video Upload: Got some awesome videos? Send them over to the Twelve Labs platform, and watch how it efficiently indexes them, making your search experience a breeze!

Semantic Video Search: Hunt for those memorable moments in your videos with simple natural language queries

Crafting a Demo App: Craft a nifty python script that uses Flask to render your HTML template and then design a sleek HTML page to display your search results

💡 By the way, if you're reading this post and you're not a developer, fear not! I've included a link to a ready-made Jupyter Notebook, allowing you to run the entire process and obtain the results. Additionally, check out our Playground to experience the power of semantic video search without writing a single line of code. Reach out to me if you need free credits😄.

Prerequisites

In this tutorial, we'll be using a Jupyter Notebook. I'm assuming you've already set up Jupyter, Python, and Pip on your local computer. If you run into any issues, please come and holla at me for help on our Discord server, where we have quickest response times 🚅🏎️⚡️. If Discord isn't your thing, you can also reach out to me via email. After creating a Twelve Labs account, you can access the API Dashboard and obtain your API key. This demo will use an existing account. To make API calls, simply use your secret key and specify the API URL. Additionally, you can use environment variables to pass configuration details to your application:

<pre><code class="bash">%env API_KEY=<your_API_key> %env API_URL=https://api.twelvelabs.io/v1.1 </code></pre>

Installing the dependencies:

<pre><code class="python">pip install requests pip install flask </code></pre>

Video upload

In our first step, I will show you how I uploaded a video from my local computer to the Twelve Labs platform to leverage its video understanding capabilities.

Imports:

<pre><code class="python">import os import requests import glob from pprint import pprint </code></pre

Retrieve the URL of the API and my API key as follows:

<pre><code class="python">API_URL = os.getenv("API_URL") assert API_URL </code></pre

<pre><code class="python">API_KEY = os.getenv("API_KEY") assert API_KEY </code></pre

Index API

The next step involves using the Index API to create a video index. A video index is a way to group one or more videos together and set some common search properties, thereby allowing you to perform semantic searches on the videos uploaded to the index.

An index is defined by the following fields:

<ul> <li>A <b>name</b></li> <li>An <b>engine</b> - currently, we offer Marengo2, our latest multimodal foundation model for video understanding</li> <li>One or more <b>indexing options</b>:</li> <ul> <li><b>visual</b>: If this option is selected, the API service performs a multimodal audio-visual analysis of your videos and allows you to search by objects, actions, sounds, movements, places, situational events, and complex audio-visual text descriptions. Some examples of visual searches could include a crowd cheering or tired developers leaving an office😆.</li> <li><b>conversation</b>: When this option is chosen, the API extracts a description from the video (transcript) and carries out a semantic NLP analysis on the transcript. This enables you to pinpoint the precise moments in your video where the conversation you're searching for takes place. An example of searching within the conversation that takes place in the indexed videos could be the moment you lied to your sibling😜.</li> <li><b>text_in_video</b>: When this option is selected, the API service carries out text recognition (OCR) allowing you to search for text that appears in your videos, such as signs, labels, subtitles, logos, presentations, and documents. In this case, you might search for brands that appear during a football match🏈.</li></ul></ul>

Creating an index:

<pre><code class="python"># Construct the URL of the `/indexes` endpoint INDEXES_URL = f"{API_URL}/indexes" # Specify the name of the index INDEX_NAME = "My University Days" # Set the header of the request headers = { "x-api-key": API_KEY } # Declare a dictionary named data data = { "engine_id": "marengo2", "index_options": ["visual", "conversation", "text_in_video"], "index_name": INDEX_NAME, } # Create an index response = requests.post(INDEXES_URL, headers=headers, json=data) # Store the unique identifier of your index INDEX_ID = response.json().get('_id') # Print the status code and response print(f'Status code: {response.status_code}') pprint(response.json()) </code></pre

Task API to upload a video

The Twelve Labs platform offers a Task API to upload videos into the created index and monitor the status of the upload process:

<pre><code class="python">TASKS_URL = f"{API_URL}/tasks" file_name = "Machine Learning is Everywhere" # indexed video will have this file name file_path = "ml.mp4" # file name of the video being uploaded file_stream = open(file_path,"rb") data = { "index_id": INDEX_ID, "language": "en" } file_param = [ ("video_file", (file_name, file_stream, "application/octet-stream")),] response = requests.post(TASKS_URL, headers=headers, data=data, files=file_param </code></pre

Once you upload a video, the system automatically initiates video indexing process. Twelve Labs discuss the concept of "video indexing" in relation to using a multimodal foundation model to incorporate temporal context and extract information such as movements, objects, sounds, text on screen, and speech from your videos, generating powerful video embeddings. This subsequently allow you to find specific moments within your videos using everyday language or to categorize video segments based on provided labels and prompts.

Monitoring the video indexing process:

<pre><code class="python">import time # Define starting time start = time.time() print("Start uploading video") # Monitor the indexing process TASK_STATUS_URL = f"{API_URL}/tasks/{TASK_ID}" while True: response = requests.get(TASK_STATUS_URL, headers=headers) STATUS = response.json().get("status") if STATUS == "ready": print(f"Status code: {STATUS}") break time.sleep(10) # Define ending time end = time.time() print("Finish uploading video") print("Time elapsed (in seconds): ", end - start) </code></pre

<pre><code class="python"># Retrieve the unique identifier of the video VIDEO_ID = response.json().get('video_id') # Print the status code, the unique identifier of the video, and the response print(f"VIDEO ID: {VIDEO_ID}") pprint(response.json()) </code></pre

<pre><code class="language-plaintext">VIDEO ID: 642621ffffa3551fb6d2f### {'_id': '642621fc3205dc8a48ba8###', 'created_at': '2023-03-30T23:57:48.877Z', 'estimated_time': '2023-03-30T23:59:58.312Z', 'index_id': '###621fb7b1f2230dfcd6###', 'metadata': {'duration': 80.32, 'filename': 'Machine Learning is Everywhere', 'height': 720, 'width': 1280}, 'status': 'ready', 'type': 'index_task_info', 'updated_at': '2023-03-31T00:00:34.412Z', 'video_id': '642621ffffa3551fb6d2f###'} </code></pre

Creating another environment variables to pass the unique identifier of the existing index for our app:

<pre><code class="python">%env ENV_INDEX_ID = ###621fb7b1f2230dfcd6### </code></pre

Here's a list of all the videos in the index. For now, we've only indexed one video to keep things simple, but you can upload up to 10 hours of video content using our free credits:

<pre><code class="python"># Retrieve the unique identifier of the existing index INDEX_ID = os.getenv("ENV_INDEX_ID") # Set the header of the request headers = { "x-api-key": API_KEY, } # List all the videos in an index INDEXES_VIDEOS_URL = f"{API_URL}/indexes/{INDEX_ID}/videos" response = requests.get(INDEXES_VIDEOS_URL, headers=headers) print(f'Status code: {response.status_code}') pprint(response.json()) </code></pre

<pre><code class="python">Status code: 200 {'data': [{'_id': '642621ffffa3551fb6d2f###', 'created_at': '2023-03-30T23:57:48Z', 'metadata': {'duration': 80.32, 'engine_id': 'marengo2', 'filename': 'Machine Learning is Everywhere', 'fps': 25, 'height': 720, 'size': 11877525, 'width': 1280}, 'updated_at': '2023-03-30T23:57:51Z'}], 'page_info': {'limit_per_page': 10, 'page': 1, 'total_duration': 80.32, 'total_page': 1, 'total_results': 1}} </code></pre

Semantic video search, aka finding specific moments

Once the system has finished indexing the video and generating its embeddings, you can use them to find specific moments with the search API. Given a natural language query, the API returns the exact start and end time codes of the segments in your videos whose content matches the meaning of the query. The search options available to you are the ones you enabled when creating the index, and each search can use any subset of them. For instance, if you enabled all three options for the index, you can search audio-visually (`visual`), within conversations (`conversation`), and for any text appearing in the videos (`text_in_video`). Offering the same options at both the index and search levels gives you flexibility: you decide how the platform analyzes your video content at indexing time, and then pick whichever combination of options suits your current search.
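Because a search can only use options that were enabled on the index, a quick client-side check can catch a mismatch before the request goes out. This is a minimal sketch assuming the option names used in this tutorial; `check_search_options` is a hypothetical helper, not part of the Twelve Labs API.

```python
# Options enabled when the index was created earlier in this tutorial
INDEX_OPTIONS = ["visual", "conversation", "text_in_video"]


def check_search_options(index_options: list[str], search_options: list[str]) -> list[str]:
    """Return search_options unchanged, or raise ValueError if any was not enabled on the index."""
    if not search_options:
        raise ValueError("Specify at least one search option")
    unsupported = sorted(set(search_options) - set(index_options))
    if unsupported:
        raise ValueError(f"Not enabled on this index: {unsupported}")
    return search_options


# Any subset of the index's options is fine
print(check_search_options(INDEX_OPTIONS, ["visual", "text_in_video"]))

# An index created with only "visual" cannot be searched for on-screen text
try:
    check_search_options(["visual"], ["visual", "text_in_video"])
except ValueError as err:
    print(err)  # Not enabled on this index: ['text_in_video']
```

The returned list can go straight into the `search_options` field of the search request.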

Let’s start with a visual search using a simple natural language query, “a guy doing a trick on a skateboard”:

<pre><code class="bash">Status code: 200
{'data': [{'confidence': 'high',
           'end': 49.34375,
           'metadata': [{'type': 'visual'}],
           'score': 83.24,
           'start': 41.65625,
           'video_id': '642621ffffa3551fb6d2f###'}],
 'page_info': {'limit_per_page': 10,
               'page_expired_at': '2023-03-31T22:41:42Z',
               'total_results': 1},
 'search_pool': {'index_id': '642621fb7b1f2230dfcd####',
                 'total_count': 1,
                 'total_duration': 80}}
</code></pre>

Corresponding video segment:

This part gets me super pumped because it showcases the model's human-like understanding of the video content. As you can see in the above screenshot, the system nails it by pinpointing the exact moment I wanted to extract.
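Reading start and end times off printed JSON gets tedious. As a minimal sketch, assuming the response fields shown above (`data`, `start`, `end`, `score`, `confidence`, `video_id`), you can turn the response into named tuples and optionally drop low-scoring matches. `Segment`, `parse_segments`, and the `min_score` threshold are illustrative names, not part of the API.

```python
from typing import NamedTuple


class Segment(NamedTuple):
    """One matching moment from a search response."""
    video_id: str
    start: float
    end: float
    score: float
    confidence: str


def parse_segments(payload: dict, min_score: float = 0.0) -> list[Segment]:
    """Convert the `data` list of a search response into Segments, best score first."""
    segments = [
        Segment(item["video_id"], item["start"], item["end"], item["score"], item["confidence"])
        for item in payload.get("data", [])
        if item["score"] >= min_score
    ]
    return sorted(segments, key=lambda seg: seg.score, reverse=True)


# The skateboard search response shown above, trimmed to the fields used here
sample = {"data": [{"confidence": "high", "end": 49.34375, "score": 83.24,
                    "start": 41.65625, "video_id": "642621ffffa3551fb6d2f###"}]}

for seg in parse_segments(sample, min_score=80):
    print(f"{seg.start:.2f}s to {seg.end:.2f}s, score {seg.score} ({seg.confidence})")
```

In the notebook you would call `parse_segments(response.json())` right after the search request.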

Let's give another query a shot, "a guy playing table tennis with a robotic arm", and watch the system work its magic once more:

<pre><code class="python"># Construct the URL of the `/search` endpoint
SEARCH_URL = f"{API_URL}/search/"

# Set the header of the request
headers = {
    "x-api-key": API_KEY
}

query = "a guy playing table tennis with a robotic arm"

# Declare a dictionary named `data`
data = {
    "query": query,  # Specify your search query
    "index_id": INDEX_ID,  # Indicate the unique identifier of your index
    "search_options": ["visual"],  # Specify the search options
}

# Make a search request
response = requests.post(SEARCH_URL, headers=headers, json=data)
print(f"Status code: {response.status_code}")
pprint(response.json())
</code></pre>

Output:

<pre><code class="python">{'data': [{'confidence': 'high',
           'end': 14.6875,
           'metadata': [{'type': 'visual'}],
           'score': 90.62,
           'start': 9.75,
           'video_id': '642621ffffa3551fb6d2####'},
          {'confidence': 'high',
           'end': 9.75,
           'metadata': [{'type': 'visual'}],
           'score': 89.74,
           'start': 4.5,
           'video_id': '642621ffffa3551fb6d2####'}],
</code></pre>

Corresponding video segments:

Bingo! Once again, the system pinpointed those fascinating moments spot-on.
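The two table tennis matches above are back to back: one ends at 9.75 s exactly where the other begins. If you'd rather show them as a single clip, a small merge step can combine overlapping or adjacent segments. This sketch is a design choice that goes beyond the original tutorial, and the 0.5-second `gap` tolerance is an arbitrary assumption.

```python
def merge_segments(segments: list[tuple[float, float]], gap: float = 0.5) -> list[tuple[float, float]]:
    """Merge (start, end) pairs that overlap or are separated by at most `gap` seconds."""
    merged: list[tuple[float, float]] = []
    for start, end in sorted(segments):
        if merged and start - merged[-1][1] <= gap:
            # Extend the previous clip instead of starting a new one
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


# (start, end) pairs from the table tennis search output above
hits = [(9.75, 14.6875), (4.5, 9.75)]
print(merge_segments(hits))  # [(4.5, 14.6875)]
```

Sorting first means the function works regardless of the order in which the API returns the matches.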

💡Here's a fun little task for you: search for "a breakthrough in machine learning would be worth ten Microsofts" with the search options set to ["text_in_video"].

To make the most of these JSON responses without checking the start and end points by hand, we need a stunning web page (index.html) crafted with love. That way, the timestamps from the search response go straight to the corresponding video, which then plays only the matching segments. Let's get to it!

Crafting a demo app

Kudos for sticking with me on this awesome video understanding adventure 🎉🥳👏! We've reached the final step where we'll craft a Flask-based, straightforward app that takes the search results from our previous steps and presents them on a beautiful web page, showcasing the exact moments we requested. By the way, I chose Flask since I come from a data science background and I love Python. Moreover, Flask is a lightweight Python-based framework that aligns with my needs for this tutorial. However, you're welcome to select any framework that caters to your preferences and requirements.

The first step is to add the necessary imports to our Jupyter Notebook:

<pre><code class="python">import json
import pickle
</code></pre>

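We'll generate two lists, "starts" and "ends", that hold all the start and end timestamps returned by the search API. Note that the printed values below come from a search with three matches, not from either of the queries shown above: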

<pre><code class="python">data = response.json()

starts = []
ends = []
for item in data['data']:
    starts.append(item['start'])
    ends.append(item['end'])

print("starts:", starts)
print("ends:", ends)
</code></pre>

<pre><code class="bash">starts: [34.90625, 0, 62.5625]
ends: [39.21875, 4.5, 65.90625]
</code></pre>

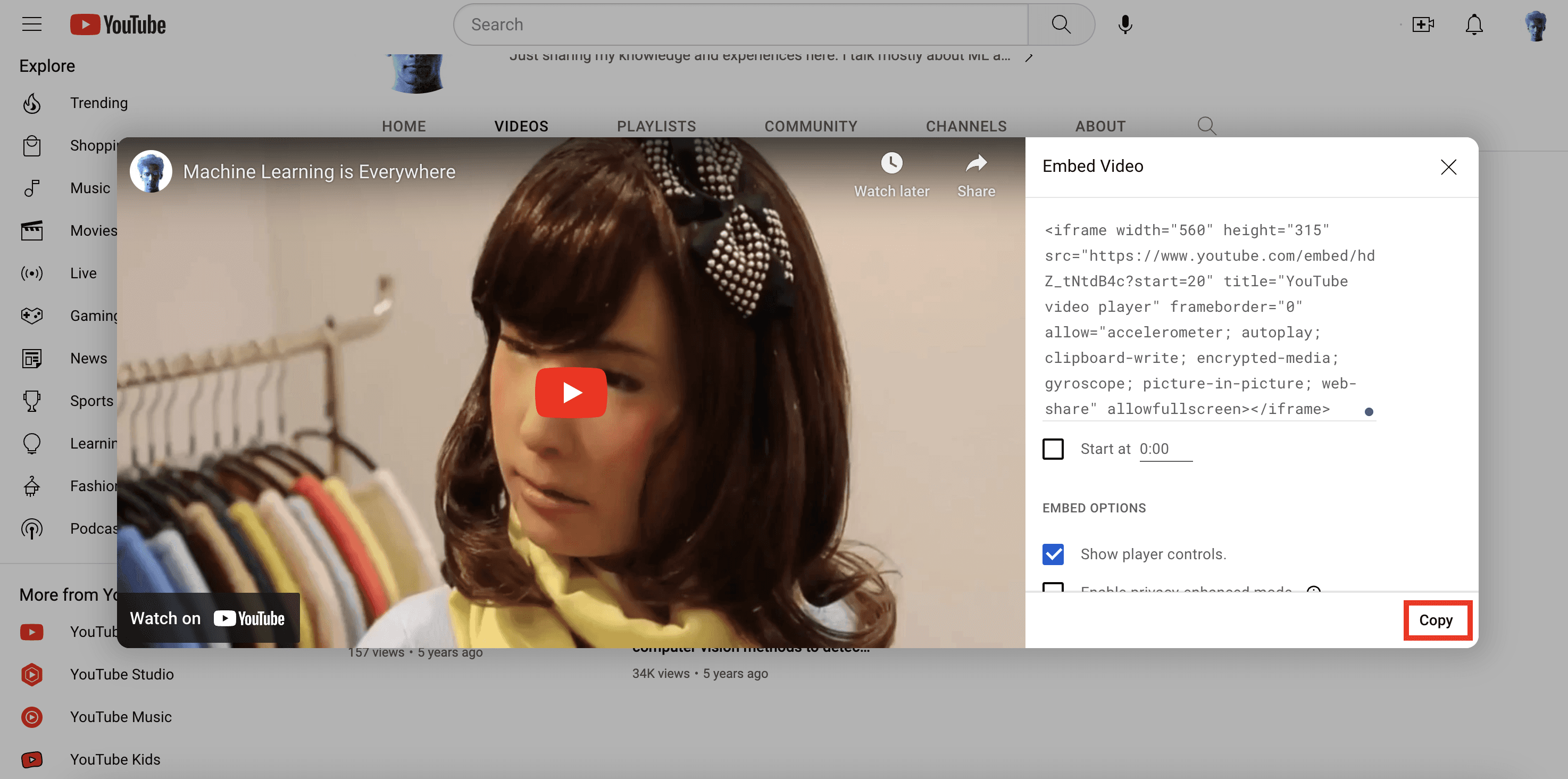
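In the table tennis output earlier, results appear in descending score order rather than chronological order, and the starts list printed here is not sorted either. If you want the clips on the page in the order they occur in the video, you can sort the start and end values as pairs so each start stays matched with its end. A minimal sketch using the values printed above:

```python
# Timestamps as printed above
starts = [34.90625, 0, 62.5625]
ends = [39.21875, 4.5, 65.90625]

# Sort (start, end) pairs together so each start stays matched with its end
pairs = sorted(zip(starts, ends))
starts = [start for start, _ in pairs]
ends = [end for _, end in pairs]

print("starts:", starts)  # starts: [0, 34.90625, 62.5625]
print("ends:", ends)      # ends: [4.5, 39.21875, 65.90625]
```

Sorting the zipped pairs, rather than each list separately, is what keeps every clip's start and end aligned.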

Now that I have the required timestamps, and the same video is uploaded to my YouTube channel, there are a couple of ways to use them. I could either load the video from my local disk and display my favorite clips on the web page, or simply use the video's URL on my YouTube channel to achieve the same result. I find the latter more appealing, so I'll use the YouTube embed code for that video and pass it the start and end timestamps. This way, only the exact segments I searched for will play. Just a minor heads-up: the YouTube embed code only accepts whole seconds for the start and end parameters, so we need to convert these values to integers (`int()` drops the fractional part rather than rounding):

<pre><code class="python"># YouTube's start/end parameters take whole seconds, so drop the fractional part
starts_int = [int(f) for f in starts]
ends_int = [int(f) for f in ends]
</code></pre>
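Because `int()` drops the fractional part, each clip starts slightly early (harmless) but can also end up to a second before the match does. If that matters to you, one option, a variation on the tutorial's approach rather than part of it, is to round starts down and ends up with the standard `math` module:

```python
import math

starts = [34.90625, 0, 62.5625]
ends = [39.21875, 4.5, 65.90625]

# Round starts down and ends up so the embedded clip never cuts off part of the match
starts_int = [math.floor(s) for s in starts]
ends_int = [math.ceil(e) for e in ends]

print(starts_int, ends_int)  # [34, 0, 62] [40, 5, 66]
```

`math.floor` and `math.ceil` return `int` in Python 3, so the results can go straight into the embed URL.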

Let's quickly pickle these lists along with the query we entered. This will be useful when we pass them to the Flask app file we're preparing to create:

<pre><code class="python">with open("lists.pkl", "wb") as f:
    pickle.dump((starts_int, ends_int, query), f)
</code></pre>
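The `json` module imported earlier isn't used by the notebook as written. If you'd like a human-readable file, or want to avoid pickle (the Python documentation warns that unpickling untrusted data can execute arbitrary code), the same three values can be stored as JSON instead. A minimal sketch; the `lists.json` filename and the dictionary keys are choices made here for illustration, and app.py would need the matching `json.load` change shown at the end:

```python
import json

starts_int = [34, 0, 62]
ends_int = [40, 5, 66]
query = "your search query"  # placeholder for the query you searched with

# Save the timestamps and query as JSON instead of a pickle
with open("lists.json", "w") as f:
    json.dump({"starts": starts_int, "ends": ends_int, "query": query}, f)

# In app.py, load them back in place of the pickle.load call
with open("lists.json") as f:
    saved = json.load(f)
starts, ends, query = saved["starts"], saved["ends"], saved["query"]
print(starts, ends, query)
```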

Voilà! We're all set to work with Flask and pass these parameters.

Steps to create Flask app

1. Create a new Flask project: create a new directory for the project and create a new Python file that will serve as the main file for your Flask app.

<pre><code class="bash">mkdir my_flask_app
cd my_flask_app
touch app.py
</code></pre>

Once we have the Flask app file and the template ready, the directory structure will look like this:

<pre><code class="markdown">my_flask_app/
│   app.py
│   ml.mp4
│
└───templates/
        index.html
</code></pre>

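Keep the video file you uploaded (ml.mp4) in the my_flask_app directory. Also, since app.py opens lists.pkl with a relative path, that file must be in the directory you run the app from.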
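If you prefer to stay in the notebook, or you're on Windows where `touch` isn't available, Python's standard `pathlib` can create the same layout, including the `templates` folder and an empty `index.html`. A minimal sketch, to be run from the directory that should contain `my_flask_app` (the video and pickle files still need to be copied in yourself):

```python
from pathlib import Path

project = Path("my_flask_app")
templates = project / "templates"

# Create my_flask_app/ and my_flask_app/templates/ (no error if they already exist)
templates.mkdir(parents=True, exist_ok=True)

# Create empty app.py and templates/index.html files to fill in later
(project / "app.py").touch()
(templates / "index.html").touch()

for path in sorted(project.rglob("*")):
    print(path)
```

`touch()` only updates the timestamp of a file that already exists, so rerunning the cell won't wipe code you've written.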

2. Write the Flask app code: in the app.py file, we write the code for our Flask app. The app below loads the pickled timestamps and query, then uses Jinja2 to render the 'index.html' template with them:

<pre><code class="python">from flask import Flask, render_template
import pickle

# Load the timestamps and query saved from the notebook
with open("lists.pkl", "rb") as f:
    starts, ends, query = pickle.load(f)

app = Flask(__name__)


@app.route("/")
def index():
    # Pass the lists and the query to templates/index.html
    return render_template("index.html", starts=starts, ends=ends, query=query)


if __name__ == "__main__":
    app.run(debug=True)
</code></pre>

3. Create a templates directory: to use Jinja2 templates with Flask, we need a templates directory in the same directory as our Flask app. This is where we'll store our Jinja2 templates:

<pre><code class="bash">mkdir templates </code></pre>

The final piece of the puzzle is the index.html page that will display all the video segments that matched the search query. Before we work on the HTML file, let’s quickly grab the Embed Video code from my YouTube channel:

4. Create a Jinja2 template: To create a Jinja2 template, we need to create an HTML file in the templates directory:

<pre><code class="bash">touch templates/index.html </code></pre>

Here is a simple example of a Jinja2 template. It loops over the start and end lists and displays the query string we passed from the app file:

<pre><code class="language-html"><html>
<head>
  <style>
    body {
      background-color: #F2F2F2;
      font-family: Arial, sans-serif;
      text-align: center;
    }
    h1 {
      margin-top: 40px;
    }
    .video-container {
      display: flex;
      flex-wrap: wrap;
      padding: 40px;
      justify-content: center;
    }
    .video-item {
      display: flex;
      flex-direction: column;
      align-items: center;
      width: 50%;
      height: 600px;
      margin: 20px;
      text-align: center;
    }
    .video-item iframe {
      width: 80%;
      height: 380px;
      margin: 20px;
    }
    .video-item p {
      font-size: 16px;
      margin-top: 10px;
      font-weight: bold;
    }
  </style>
</head>
<body>
  <h1>My Favorite Scenes</h1>
  <div class="video-container">
    {% for i in range(starts|length) %}
    <div class="video-item">
      <iframe width="560" height="315"
              src="https://www.youtube.com/embed/hdZ_tNtdB4c?start={{ starts[i] }}&end={{ ends[i] }}"
              frameborder="0"
              allow="accelerometer; autoplay; encrypted-media; gyroscope; picture-in-picture"
              allowfullscreen></iframe>
      <p>Start: {{ starts[i] }} | End: {{ ends[i] }}</p>
      <p>Query: {{ query }}</p>
    </div>
    {% endfor %}
  </div>
</body>
</html>
</code></pre>
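The template assembles each embed URL inline. An alternative, sketched here as a design option rather than the tutorial's method, is to build the URLs in Python with `urllib.parse.urlencode` and pass a single list to the template, which keeps string formatting out of Jinja2. `embed_url` and `YOUTUBE_ID` are illustrative names; the video ID is the one used in the template above.

```python
from urllib.parse import urlencode

YOUTUBE_ID = "hdZ_tNtdB4c"  # YouTube video ID from the template above


def embed_url(video_id: str, start: int, end: int) -> str:
    """Return a YouTube embed URL that plays only the given start/end seconds."""
    return f"https://www.youtube.com/embed/{video_id}?{urlencode({'start': start, 'end': end})}"


starts_int = [34, 0, 62]
ends_int = [40, 5, 66]
urls = [embed_url(YOUTUBE_ID, s, e) for s, e in zip(starts_int, ends_int)]
print(urls[0])  # https://www.youtube.com/embed/hdZ_tNtdB4c?start=34&end=40
```

With this approach, app.py would pass `urls=urls` to `render_template`, and the template would loop with `{% for url in urls %}` and set `src="{{ url }}"` on each iframe.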

Perfect! Now let's run the last cell of our Jupyter Notebook:

<pre><code class="python">%run app.py </code></pre>

You should see output similar to the one below, which means everything is working as expected😊:

Open http://127.0.0.1:5000 in your browser. Depending on your search query, the page will look something like this:

When you play the video, it will adhere to the timestamps we provided, highlighting the specific moments or segments we were interested in finding within the video:

Here's the link to the folder containing the Jupyter Notebook and all the files required to run the tutorial locally on your own computer: https://tinyurl.com/twelvelabs

Fun activities for you to explore:

Experiment with different combinations of search options (visual, conversation, and text_in_video) and see how the results vary.

Upload multiple videos and tweak the code accordingly to search across all those videos simultaneously.

Show your pro developer skills and enhance the code by allowing users to input a query through the index.html page and fetch the results in real-time.
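For the multi-video activity: each search result already carries a `video_id`, so once several videos share an index you could group the matches per video before rendering them. A minimal sketch with made-up video IDs; `group_by_video` is a hypothetical helper:

```python
from collections import defaultdict


def group_by_video(results: list[dict]) -> dict[str, list[tuple[float, float]]]:
    """Map each video_id to its (start, end) pairs in chronological order."""
    grouped = defaultdict(list)
    for item in results:
        grouped[item["video_id"]].append((item["start"], item["end"]))
    return {video_id: sorted(pairs) for video_id, pairs in grouped.items()}


# Illustrative results from a search across two videos (IDs are made up)
results = [
    {"video_id": "video_a", "start": 9.75, "end": 14.6875},
    {"video_id": "video_b", "start": 41.65625, "end": 49.34375},
    {"video_id": "video_a", "start": 4.5, "end": 9.75},
]

for video_id, pairs in group_by_video(results).items():
    print(video_id, pairs)
```

In practice you would pass `response.json()["data"]` as `results`, then map each video ID to its own embed URL in the template.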

What's next

In the next post, we'll dive into combining multiple simple queries using a set of operators and searching across a collection of videos with them. Stay tuned for the coming posts!

Oh, and one last thing: don't forget to join our Discord community to connect with fellow multimodal minds who share your interest in multimodal foundation models. It's a great place to exchange ideas, ask questions, and learn from one another!



© 2021-2026 TwelveLabs, Inc. All Rights Reserved
